Software, the millions of lines of code that run our cell phones, computers and servers around the clock, has become fundamental to our society. Until not long ago, developers largely saw themselves as technology experts and little else, but the voices advocating for viewing software from a social perspective are getting stronger.

Ethical software

The most blatant example is certainly the indiscriminate use of machine learning. This summer it was reported that the British government used these tools to extrapolate a student’s final grade based on a multitude of variables. The result: lower grades depending on the neighborhood where you live and the type of school you attend. For many students this determines whether they can attend college and what kind of college: without a doubt, their future over the long term.

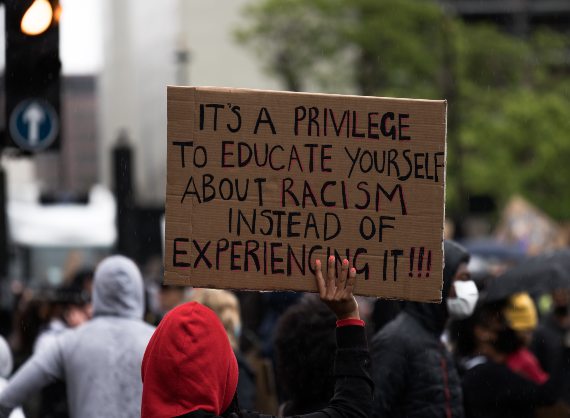

These algorithms also have race and gender biases. For example, a job candidate could receive a lower “professional” score, or a patient could receive worse medical care, simply because they are not Caucasian. Online we can find many examples of how software is not able to recognize the faces of people of color, or how a person with a mop or a drill is classified as a “cleaner” or “carpenter”.

The explanation of these facts is simple: these systems learn automatically, yes, but they do so based on vast amounts of data provided by programmers. If these data are biased (for example, students from a given school tend to receive higher grades, or women with a mop tend to be cleaners), the algorithm will simply reproduce these prejudices. That’s why good developers are increasingly making an effort to ensure that the data used are representative and that no part of the population is overrepresented.
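To make the idea of overrepresentation concrete, here is a minimal sketch of the kind of check a developer might run before training. It is only an illustration: the function names, the `school` field, the sample records, the reference shares and the 10-point tolerance are all hypothetical and do not come from any real system mentioned here.

```python
from collections import Counter


def group_shares(records, key):
    """Return each group's share of the records, as a fraction of the total."""
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def overrepresented(shares, reference, tolerance=0.10):
    """List groups whose share exceeds the reference share by more than `tolerance`."""
    return sorted(
        group
        for group, share in shares.items()
        if share - reference.get(group, 0.0) > tolerance
    )


# Hypothetical training records and reference population shares (made-up values).
training = [{"school": "A"}] * 8 + [{"school": "B"}] * 2
population = {"A": 0.5, "B": 0.5}

shares = group_shares(training, "school")
flagged = overrepresented(shares, population)
print(shares, flagged)
```

A check like this only catches imbalance in how many records each group contributes. It says nothing about whether the grades or labels attached to those records are themselves prejudiced, which is the harder problem.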

There is also a problem of overconfidence in technology. These automated systems work as recommendation engines: perfect for deciding what song you want to listen to or what clothes would look good on you. But when an algorithm is responsible for deciding who can receive social assistance or whether a person should go to jail, we are eliminating a personalized, more or less transparent system and replacing it with an opaque and inhuman one.

The development of COVID-19 tracking apps is an example of how times are changing. In most European Union countries (such as Spain, Germany, and many others) the app’s source code, that is, the instructions for how it works, is accessible to the public. This means that anyone can read it, learn from it and help to develop it: software as part of society.

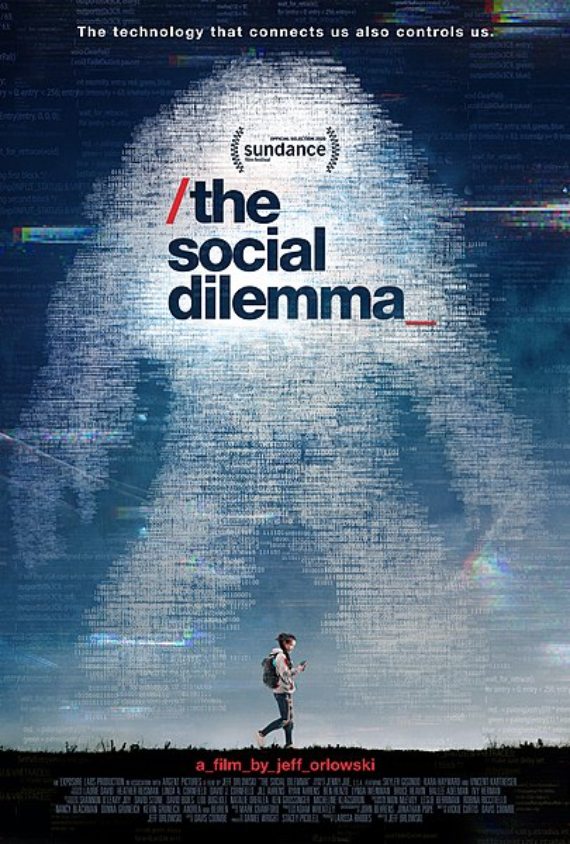

Accepting that software can help society means accepting that it can modify what we do. Big tech companies understand this well, as they attempt to capture our attention and our clicks. The recent documentary The Social Dilemma opens a door to this world. Those seeking a less sensationalist approach can opt for Lawrence Lessig’s Code v2 to understand how software modifies what we see and what we can do in today’s society. For several months now, more and more voices have been asking to what extent a good salary and a nearly ideal work environment should make us forget the ultimate goal of the technologies we develop. Several years ago, working for Facebook was the dream of most programmers. Now people are asking more questions.

Sustainable software

Our generation’s biggest problem, climate change, is not disconnected from software either. After all, computing and sending information uses electricity. That’s why Google chooses the location of its servers in part based on the electricity bill. Some developers try to trim their code, not only to make it faster, but to reduce the amount of energy needed for its transmission and processing. Even emails containing nothing but a simple emoticon contribute to global warming, and their combined contribution is measured in tons of CO2 emitted into the atmosphere.

As software becomes more omnipresent in our lives, those involved in its development are becoming more aware of the society that surrounds us. Often the line that separates the programmer’s keyboard from its consequences is very short, too short to overlook. For too long, ethics, sustainability and responsibility have been words alien to our profession. The change has already begun.
