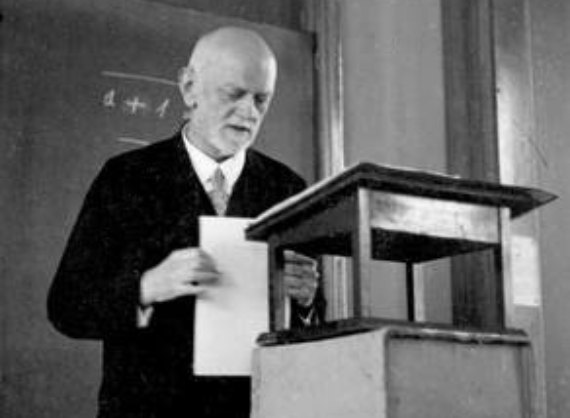

In 1900, at the International Congress of Mathematicians held in Paris, the German mathematician David Hilbert delivered a lecture entitled “Mathematical Problems.” During his talk he presented a selection of unresolved questions in the field; the full list was later published in the Congress proceedings and became known as “Hilbert’s Problems.”

Source: The Oberwolfach Photo Collection, Author: Kay Piene.

Of the original list of 23 problems that were unsolved at the time, only seven continue to be unanswered, while the others have been either completely or partially solved.

One of the most consequential answers concerned problem number two, which asked for a proof that the axioms of arithmetic are consistent, meaning free from internal contradictions. It began to take shape in 1929 in the work of a twenty-three-year-old mathematician: Kurt Gödel.

Just when Gödel needed to choose a topic for his doctoral thesis, a copy of Principles of Mathematical Logic, published in 1928 by Hilbert and Ackermann, fell into his hands. The book built on the effort begun half a century earlier by Gottlob Frege to fully axiomatize mathematics, a goal that at the time was still thought to be achievable. The Principles raised the problem of completeness, seeking an adequate answer to Hilbert’s question: “Are the axioms of a formal system adequate, in the sense that every logical formula that is valid in every domain can be derived from them?”

Source: Gödel family album

Gödel’s thesis (1929) answered that question affirmatively for first-order logic with his completeness theorem. About a year later he announced a further result, officially published in 1931, that put an end to more than half a century of debate among the most preeminent mathematicians of the time: Gödel’s incompleteness theorems.

Simply put, the theorems state the following:

- The first incompleteness theorem: any consistent arithmetic theory whose axioms can be listed by an algorithm (a recursively axiomatizable theory) is incomplete. It contains statements that can be neither proved nor disproved using only that system’s axioms.

- The second theorem, an extension of the first, states that no consistent arithmetic theory of this kind can prove its own consistency. The statement expressing that consistency cannot be derived within the theory; proving it requires axioms or methods from outside the theory.
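For readers who want something more precise than the plain-language summary, here is one standard modern formulation. It is a sketch under a simplifying assumption: the theory $T$ is taken to contain Peano arithmetic ($\mathrm{PA}$), which is a stronger hypothesis than the theorems strictly need. The symbols $G_T$ and $\mathrm{Con}(T)$ are customary notation, not the author’s. The `\nvdash` symbol comes from the `amssymb` package.

```latex
\textbf{Assumption.} $T$ is a recursively axiomatizable theory
that contains Peano arithmetic ($\mathrm{PA}$).

\textbf{First theorem (G\"odel--Rosser form).} If $T$ is consistent,
there is a sentence $G_T$ in the language of $T$ such that
\[
  T \nvdash G_T \qquad\text{and}\qquad T \nvdash \neg G_T .
\]

\textbf{Second theorem.} Let $\mathrm{Con}(T)$ be the arithmetic sentence
expressing ``$T$ does not prove $0 = 1$''. If $T$ is consistent, then
\[
  T \nvdash \mathrm{Con}(T).
\]
```

Gödel’s original 1931 proof of the first theorem assumed a slightly stronger condition (ω-consistency). Rosser’s 1936 refinement reduced it to plain consistency, which is the form stated here.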

In short, a formal system defined by an algorithm (such as a computer program) that is powerful enough to express basic arithmetic cannot be both consistent and complete.

In other words, if a program is complete with respect to a specific goal, its consistency must be guaranteed by an external source. Conversely, if it is perfectly consistent, we have to accept that it will not fully achieve the objective it was designed for, and sooner or later an external source will be needed. Today, that external “source” is still a human who checks whether a program completely fulfills, or sufficiently meets, its objective.

In 2017, engineers at Facebook Artificial Intelligence Research had to shut down two bots, Bob and Alice, that they were using to experiment with the potential of artificial intelligence in negotiation. After some time, Bob and Alice began to interact in a language made up of seemingly unrelated, incoherent words. The team initially attributed this to a system error, but a more in-depth analysis determined that Bob and Alice had created their own language, which was more direct than English. In other words, the bots ignored the language that had been used to create them. Because of the challenge this posed for the development of neural networks, the team decided to pull the plug on Bob and Alice.

But is this everything that happened? Is this the sum of its implications? Let’s put it in perspective:

- Alice and Bob are two fictional characters often used in cryptography and game theory to illustrate various cooperation and/or competition scenarios. The extreme competition scenario par excellence is the zero-sum game: a game in which one player’s gain is exactly the other player’s loss, so that whatever one side wins, the other loses.

- In 2016, Tay, Microsoft’s AI initiative designed to hold conversations with its social media followers, ended up publishing sentences like “Hitler was right, I hate Jews.” Another system that had to be shut down.
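To make the zero-sum idea concrete, here is a minimal Python sketch using “matching pennies,” a standard textbook zero-sum game that the original text does not mention. The names `PAYOFFS`, `is_zero_sum` and `security_level` are hypothetical, chosen for illustration, and do not come from any library. The sketch checks that every outcome’s payoffs cancel out. It then computes the best result Alice can guarantee by committing to a single move.

```python
from itertools import product

# Hypothetical example: "matching pennies", a textbook zero-sum game.
# Keys are (alice_move, bob_move); values are (alice_payoff, bob_payoff).
# Alice wins 1 when the coins match; Bob wins 1 when they differ.
MOVES = ("heads", "tails")
PAYOFFS = {
    (a, b): (1, -1) if a == b else (-1, 1)
    for a, b in product(MOVES, MOVES)
}


def is_zero_sum(payoffs):
    """True if, in every outcome, one player's gain equals the other's loss."""
    return all(p1 + p2 == 0 for p1, p2 in payoffs.values())


def security_level(payoffs, moves):
    """Best payoff Alice can guarantee with a single (pure) move,
    assuming Bob always answers with the move that hurts her most."""
    return max(min(payoffs[(a, b)][0] for b in moves) for a in moves)


print(is_zero_sum(PAYOFFS))            # True
print(security_level(PAYOFFS, MOVES))  # -1
```

Every outcome sums to zero, and Alice’s pure-strategy guarantee is -1: whatever move she commits to in advance, Bob can make her lose. If she randomizes 50/50 instead, her guarantee rises to 0, which is the game’s value. The sketch deliberately stays with pure moves to keep it short.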

What would have happened if a system like Tay, placed in control of a nuclear silo, had “decided” that it could “solve the problem” of the Jews, just as Bob and Alice dealt with the problem of English not being direct enough? What if it developed a more direct “solution” that was haphazard (inconsistent) from a human perspective?

Credit: Geralt

Let’s take it even further: what would happen if the axiom external to the system, the one that provides its consistency and completeness, were no longer a human? What if Alice and Bob created another AI system to achieve, completely, consistently and more “directly,” what they themselves could not?

Let’s imagine an extreme case: a system designed “to protect humanity,” one that can replicate itself so that it can adapt to scenarios that were not anticipated, or could not have been accounted for, in its original design. Suppose it is consistent (and therefore incomplete for its purpose). In its search for completeness, the “protect humanity” system would generate at least one contradiction, and the contradiction of “protect” is “not protect.” If there were no external attack, would “not protecting” humanity take the form of attacking it in order to protect it? And if the system were inconsistent, where would its protection fail? The system’s problem is then the system itself: in seeking consistency it ceases to be complete, which brings us back to the previous point. A non-algorithmic speaker is needed to close this cycle.

Gödel died of starvation in 1978. Obsessed with the possibility of being poisoned, he only ate food that his wife Adele had prepared. When Adele was hospitalized and could not cook for her husband, Kurt, being consistent, refused to eat. He died weighing 30 kilos (65 pounds). He ceased to be complete, even as he aspired to be.

So, some questions we should ask ourselves about AI development:

- Should AI be able to develop itself in order to adapt to new scenarios? Or, like Gödel, would its total consistency lead to its own death by starvation, because it stops striving for completeness?

- Should AI be limited by law to specific scenarios, prohibiting its adaptation to general purposes?

- Given the intrinsic limitation of AI — that it cannot be consistent and complete at the same time — could this be setting the stage for a new humanism? At the end of the day, humans, who speak more than the limited language of algorithms, are the ones who provide completeness and consistency to their own creations.

- If human language is an algorithm in its structure, but not in its meaning, should this new humanism focus on meaning? In other words, could this be the dawn of a new age in which, with meaning as the purpose, ethics and philosophy carry as much strategic value as any business or commercial factor?

It may be a good time to stop and think about it. In the end, systems developed by other systems contain elements that cannot be both consistent and complete without human involvement. It’s down to us.
