If there is one prevailing stereotype in most depictions of artificial intelligence (AI) in fiction, it’s that computers completely lack imagination. This is a quality reserved for the human brain and unattainable for the silicon one, no matter how vast its processing capacity. Or perhaps not? If technological progress has shown us anything, it’s that our own imagination falls short when it comes to predicting the future. And it is exactly this quality, imagination, that is now becoming available to machines thanks to a novel type of algorithm called a Generative Adversarial Network (GAN).

One evening in 2014, computer scientist Ian Goodfellow, then a PhD student in machine learning at the University of Montreal (Canada), met some of his colleagues at a bar to celebrate a graduation. During the evening, a discussion arose about how to teach machines to invent images of real objects that look like genuine photographs, rather than copies of existing ones.

AI systems are experts at handling immense volumes of data in order to solve problems, and can even learn without human supervision. However, something as apparently simple as creating a plausible image of, say, a human face without assistance has long been an enormously complicated task for them.

Some neuroscientists note that the excellence of the human brain lies in our unsurpassed ability to process patterns: from a very young age we can identify images of faces that are very different from each other, because we know what makes a face a face. In recent years, the deep learning algorithms used in neural networks—computer systems inspired by the human brain—have given machines an amazing ability to recognize patterns, whether they are words in a conversation or the surroundings through which an autonomous vehicle moves.

A network with an opponent

However, when it comes to creating something new from what they have learned, machines fall short; the images they produce are often flawed and don’t reach a convincing level of realism. How can a computer be taught to invent a face that doesn’t exist in reality? During the discussion in that bar in Montreal, someone suggested statistically modelling the multitude of essential details that make up the representation of an object. But that method would multiply the data to such an extent that each new concrete application would require a monumental amount of work. Goodfellow had a better idea: why not get two neural networks to compete against each other and learn from their mistakes?

That night, Goodfellow began to write the code that would give rise to GANs: one of the networks, the generator, learns to create variations on images; the other, the discriminator, evaluates them to decide if they are real or not. The generating network continually improves its creations to try to deceive the discriminator, which in turn perfects its capacity to distinguish between the real and the artificial. Unlike generative networks without an opponent, GANs can be trained with only a few hundred images.
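The tug-of-war described above can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not Goodfellow’s code: the “images” are single numbers drawn from a bell curve centred on 4.0, the generator and the discriminator are each reduced to two parameters instead of neural networks, and the generator uses the commonly used “non-saturating” loss. All function names and values here are hypothetical.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def discriminator(x, d):
    """Estimated probability that x is a real sample; d = (w, c)."""
    w, c = d
    return sigmoid(w * x + c)


def generator(z, g):
    """Turn random noise z into a fake sample; g = (a, b)."""
    a, b = g
    return a * z + b


def discriminator_step(real, fake, d, lr):
    """One gradient step on -[log D(real) + log(1 - D(fake))]."""
    w, c = d
    p_real = discriminator(real, d)
    p_fake = discriminator(fake, d)
    grad_w = -(1.0 - p_real) * real + p_fake * fake
    grad_c = -(1.0 - p_real) + p_fake
    return (w - lr * grad_w, c - lr * grad_c)


def generator_step(z, g, d, lr):
    """One gradient step on -log D(G(z)): try to fool the discriminator."""
    a, b = g
    w, _ = d
    p_fake = discriminator(generator(z, g), d)
    grad_a = -(1.0 - p_fake) * w * z
    grad_b = -(1.0 - p_fake) * w
    return (a - lr * grad_a, b - lr * grad_b)


def train(steps=2000, lr=0.05, seed=0):
    """Alternate discriminator and generator updates on toy 1-D data."""
    rng = random.Random(seed)
    g = (1.0, 0.0)  # generator parameters (assumed starting point)
    d = (0.0, 0.0)  # discriminator parameters (assumed starting point)
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)            # assumed "real" data
        fake = generator(rng.gauss(0.0, 1.0), g)
        d = discriminator_step(real, fake, d, lr)
        g = generator_step(rng.gauss(0.0, 1.0), g, d, lr)
    return g, d


if __name__ == "__main__":
    print(train())
```

Each pass through the loop mirrors the description: the discriminator is nudged to score real samples higher than fakes, and the generator is then nudged in whichever direction the discriminator currently finds more convincing. On a toy this small, plain alternating updates may oscillate rather than settle, so no particular final values are promised.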

If the concept of a GAN somewhat resembles a Turing test, in which a machine tries to convince a human evaluator that it is a person, that is because the idea of adversarial training has been under development for decades. In the early 1990s, Jürgen Schmidhuber, now scientific director of the Swiss AI Lab IDSIA, published a system that he describes to OpenMind as “two nets that fight each other, one maximizing the error minimized by the other.”
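The phrase “one maximizing the error minimized by the other” describes what is usually called a minimax game. As a point of reference, the objective in the 2014 GAN paper by Goodfellow and colleagues has that shape (the exact formulation of Schmidhuber’s 1990s system is not given here and may differ):

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_z}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```

Here $D(x)$ is the discriminator’s estimate of the probability that $x$ is real, $G(z)$ is the generator’s output for random noise $z$, $p_{\text{data}}$ is the distribution of real examples and $p_z$ is the noise distribution. The discriminator pushes the value $V$ up and the generator pushes it down.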

Celebrities who have never existed

In 2013, Roderich Gross, professor at the University of Sheffield (United Kingdom) and visiting scientist at the Massachusetts Institute of Technology (USA), led a project that also foresaw the idea of GANs in a system that allows a machine to learn the behaviour of an animal. Gross has coined the term Turing Learning, a generalization of GANs that does not necessarily use neural networks and that serves to “infer the behaviour of humans or other animals,” he explains to OpenMind. This application would allow the system, Gross continues, “to understand behaviour, which could be in the cyber world, like shopping on Amazon, but also the physical one.”

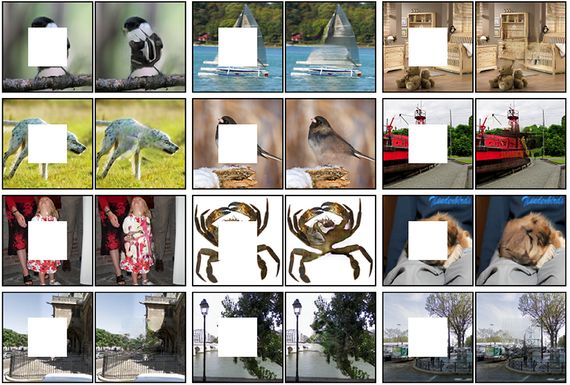

But since 2014, when Goodfellow (now a researcher at Google Brain) popularized them with his work, GANs have been used mainly to create realistic visuals, with striking results. In 2017, the graphics chip maker Nvidia trained a GAN on photographs of celebrities to produce its own ultra-realistic images of famous people who have never existed in the real world. GANs have also been used to create videos that simulate the next few seconds of a scene, change day to night or summer to winter in landscape videos, fill in gaps in images or add years to faces, among many other spectacular demonstrations.

In a further twist, scientists at the University of Helsinki recorded the brainwaves of a group of volunteers as they looked at a series of faces in order to identify those they found attractive, then used a GAN to create new faces tailored to each individual’s tastes. But in addition to these more or less recreational applications, GANs are also providing services to science and medicine; for example, improving astronomical imaging or modelling the distribution of dark matter in the universe, or aiding diagnostic imaging.

All these applications are based on the great strength of GANs, which earned these networks a place among the 10 breakthrough technologies of 2018 listed by the MIT Technology Review. In the words of Schmidhuber, “the duelling networks concept is one way of giving the power of imagination to machines.” It seems clear that the time has come to tear down the stereotype. According to Schmidhuber, we already live in a time when artificial creativity and curiosity “may drive artificial scientists and artists.”

However, even these amazing tools have their limitations. In 2021, a study by the State University of New York revealed that GANs often ignore something even a child knows: that human pupils are round. Those seemingly perfect fictional faces created by GANs often have irregular pupils, because the machine has not yet grasped such a basic concept of human anatomy. A Google team found that other AI systems outperform GANs in tasks such as sharpening or enlarging a pixelated image, in the style of the device used by the fictional character of Deckard in Blade Runner. In this race of AI systems, many technologies are competing to break the boundaries of artificial imagination.
