With the recent popularisation of artificial intelligence (AI) systems such as ChatGPT, the media and pundits are once again returning to one of the great classic debates in the discussion of these new technologies: which jobs are likely to disappear when AI is able to perform them as well or better than humans? These jobs typically include the writing of articles like this one, but also a wide range of positions in finance, law, education and, of course, the technology sector itself. Many of the professionals in the latter field have a background in theoretical or applied mathematics. Indeed, given that the ancestors of AI were actually calculators, one might ask: with all this computing power, will there still be mathematics left to solve? Will mathematician eventually become a profession of the past?

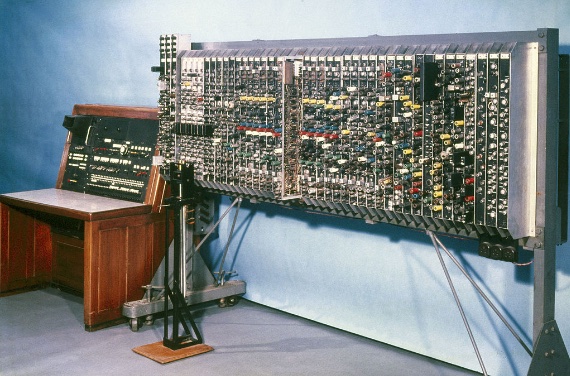

The man who is often regarded as the father of computer science, the Englishman Alan Turing, is popularly known for his role during the Second World War in deciphering Nazi Germany’s Enigma communications encryption machine (a feat previously achieved by the Polish mathematician and cryptologist Marian Rejewski). Turing introduced the idea of a programmable system that could act as a general computing machine, executing any algorithm that could be translated into mathematical expressions. His contribution to the birth of AI would open a path that would later be consolidated by John McCarthy, Allen Newell, Herbert Simon, Marvin Minsky and other pioneers.

The power of automatic proof assistants

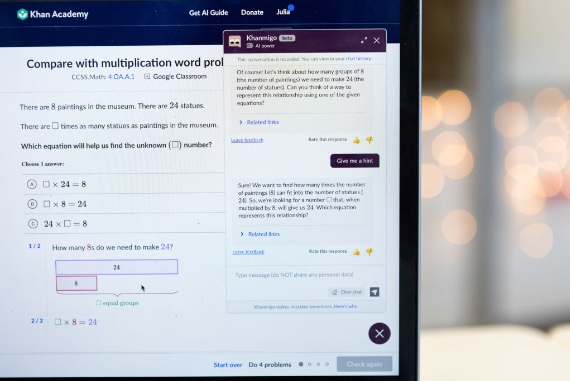

This initial advance of AI in the field of mathematics would give rise to the paradigm that has predominated during the first decades of its development, the so-called symbolic AI, which basically consists of using rules, calculations and logic in the same way that humans codify our reasoning process. In short, it is based on the manipulation of symbols. The fruit of this line is a system called Lean, launched in 2013 by computational scientist Leonardo de Moura of Microsoft Research. Lean is an interactive theorem prover and programming language, allowing mathematicians to check and refine their proofs in a reproducible way for their colleagues.

The power of Lean and other automatic proof assistants has surprised even mathematicians themselves: 2018 Fields Medal winner—often described as the Nobel Prize for mathematics —Peter Scholze wrote: “I find it absolutely insane that interactive proof assistants are now at the level that within a very reasonable time span they can formally verify difficult original research.” According to mathematician and populariser Jordan Ellenberg, of the University of Wisconsin and author of the bestselling book Shape: The Hidden Geometry of Information, Biology, Strategy, Democracy, and Everything Else (Penguin Press, 2021), the Lean community “has the ambitious goal of developing a computer language with the expressive capacity to capture the entirety of contemporary mathematics.”

Thus, the mathematical community can be said to be using AI as a valuable ally. As John Horgan wrote in Scientific American, theorem provers like Lean not only avoid endless discussions among mathematicians about whether a proof of a theorem or conjecture is correct, but also avoid the possibility that a particular proof will be accepted as valid by other mathematicians not because of its development, but because they trust the methods and author of the proof. And yet, although it is mathematicians themselves who have created AI, they do not seem to be taking full advantage of its potential. In Nature, the co-organiser of a seminar on AI and mathematics, Marijn Heule, a computational scientist at Carnegie Mellon University, said that “most mathematicians are completely unaware of these opportunities.”

Non-symbolic AI and neural networks

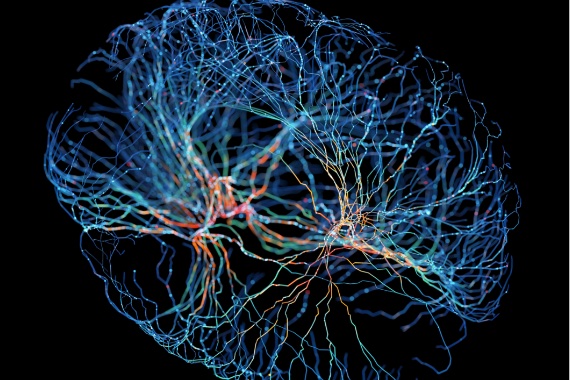

The divide between mathematicians and AI is mainly related to the new paradigm that has been imposed on symbolic AI in the last decade. Non-symbolic AI, also known as connectionist AI, replaces rigorous logic with statistical processing based on deep learning through neural networks. It works more like our own minds do, through principles and patterns born of learning, and therefore its processes are less comprehensible—less mathematical. This is the basis of Big Language Models such as ChatGPT, but also of systems such as AlphaGo from DeepMind (a subsidiary of Google’s parent company), which can defeat the best human players at the game of Go, or AlphaFold, which has solved the three-dimensional folding of proteins, one of the most complex problems in science.

According to Heule, it is in this field of non-symbolic AI that mathematicians have not yet exploited the full potential of AI. But systems are now moving in this direction. DeepMind’s AlphaTensor is inventing new algorithms to solve complex mathematical calculations. Minerva is a chatbot created by Google to solve mathematical problems. It works like ChatGPT, taking questions and answering them by combining natural language with mathematical notation.

But Minerva has its limitations, inherent in the way non-symbolic AI works compared to symbolic AI. According to its creators, Ethan Dyer and Guy Gur-Ari, “the model’s answers cannot be automatically verified. Even when the final answer is known and can be verified, the model can arrive at a correct final answer using incorrect reasoning steps, which cannot be automatically detected.” Minerva makes childish mistakes, such as cancelling a square root on one side of the equation with the roots added in the other term. She has not yet learned that this cannot be done.

The lack of creativity of algorithms

But while AI tools can already prove theorems, and are beginning to tackle the toughest mathematical problems, mathematicians are not yet worried about their jobs. The reason, as Ellenberg recalled, was predicted by the French polymath Henri Poincaré at the beginning of the 20th century, when he said that it was impossible “to attempt to replace the mathematician’s free initiative by a mechanical process of any kind.” Poincaré spoke of the “soul of the fact” as opposed to the “bare fact”; the former, he said, was beyond the reach of a machine. In more contemporary terms, for mathematician Chris Budd of the University of Bath, “mathematics is a creative activity, and perhaps it is a lack of creativity that stops machine learning algorithms from doing deep mathematics.”

In other words, the experts point out that the mathematician brings something different: not just the answers, but the questions; this requires a capacity for abstraction that AI does not yet possess. As Horgan explained, applied mathematics, its useful applications, can be handled by machines, but a different case is that of theoretical mathematics, which discovers the order of the universe. And at least until AI learns to be as creative as humans, mathematicians should be safe.

Javier Yanes

Comments on this publication