In this paper, I have tried to bring together a number of strands of work carried out over the last thirty years, both as an archaeologist and as a generalist social scientist, concerned with the very long-term history of human evolution and some of its implications for the challenges of the 21st century. The result is a very personal perspective that differs notably from the contributions of many colleagues in that I have from the outset posited that what characterizes modern human (Homo sapiens sapiens)¹ behavior and modern human societies is information processing that includes learning and learning how to learn (second-order learning, see Bateson 1972), as well as categorization, abstraction, (hierarchical) organization and related phenomena. Moreover, modern humans communicate with each other by various kinds of symbolic means, and have the capacity to transform their natural and material environment in many different ways, and at many spatial and temporal scales. As a result, this paper diverges from the usual population-based Darwinian thinking about human evolution (e.g. Boyd and Richerson, 1985) in that, for the later periods (cf. Lane et al., 2009), it focuses on ‘organization thinking’: studying the evolution of the ways in which human beings process information, organize themselves, and transform the world around them.

Necessarily, this paper takes the shape of an introductory summary of many of the underlying arguments about the trajectory of human evolution and the aspects of that history that are particularly relevant to the present and the future. Where possible, I have referred to papers and other publications that elaborate my main train of thought. However, I have kept other references to a minimum, not wanting to load the argument with the many doubts and discussions that have occurred in the anthropological and archaeological community over the period of gestation. I have thus been able to reserve space to point out some of the implications of this approach for present-day challenges, in particular the contradiction between two of today’s favorite buzzwords: ‘innovation’ and ‘sustainability’.

The evolutionary history of the human species, and in particular of its cognitive and organizational capacity, is here seen as consisting of two parts, the first of which is essentially biological (the growth of our brain and its cognitive capacity), whilst the second is essentially cultural (learning to exploit the full capacity of the brain). Accordingly, this paper is divided into three major sections, describing respectively 1. the biological evolution, 2. the cultural evolution and 3. the implications of the species’ past history for our present-day challenges.

It should be emphasized that each of these three sections is based on insights and knowledge from different disciplines and sub-disciplines. The first part derives from arguments in evolutionary biology and evolutionary psychology, and is therefore based on an essentially life-science epistemology and mode of argument, and on data deriving from ethology, palaeoanthropology and cognitive science. It attempts to reconstruct the evolution of the human species leading up to its present-day capabilities by comparing living primates, the fossil remains of (and the artifacts made by) humans at various stages of their development, and the physical and behavioral characteristics of modern human beings. This leads to a patchwork of data-points and ideas that, in so far as it holds together coherently, derives its principal interest from the fact that it raises new questions and provides a basis for the arguments in the second part.

That part, on the other hand, derives from arguments in archaeology and history, which are based on humanities and social science epistemologies respectively, and on data and insights from archaeological, written historical and modern observational sources. It attempts to outline the development of societal organization from small roaming gatherer-hunter-fisher bands, via villages, urban systems and empires, to the present-day global society, with a focus on the roles that energy and information play in that development and the forms they take. In doing so, I am using the constraints and opportunities afforded by the bio-social nature of our species to explain observed phenomena in human history, and couching the explanation in systemic terms that many archaeologists and most historians would have difficulty recognizing. And to add insult to injury, I am doing so at a level of generalization beyond any commonly used in these disciplines.

My justification for doing this is the fact that most, if not all, trans-disciplinary research must aim to “constructively upset the practitioners of all the disciplines involved” in order to raise new questions and challenges to be considered by the communities practicing these disciplines as well as by others, and thus to ‘stretch the envelope’ of our knowledge and insights. The direction in which I have attempted to stretch that envelope is given by the fact that this paper intends to make a contribution to the current sustainability debate.

In the third part of the paper, I have tried to outline how the bio-social nature of human beings and the course of the history of the species over the last 12,000-15,000 years have conspired to create the dilemma that we face today: “How do we use the human capacity to innovate, the unbridled use of which during the last three centuries has caused the unsustainability of our current mode of life, to attain a more sustainable society?” The short answer is clearly: “We must use our capacity to innovate in a different way!” This third part of the paper therefore ends with some suggestions derived from observing a fundamental weakness of our current scientific thinking: its limited capacity to derive lessons from the past for the future.

The evolution of the human brain

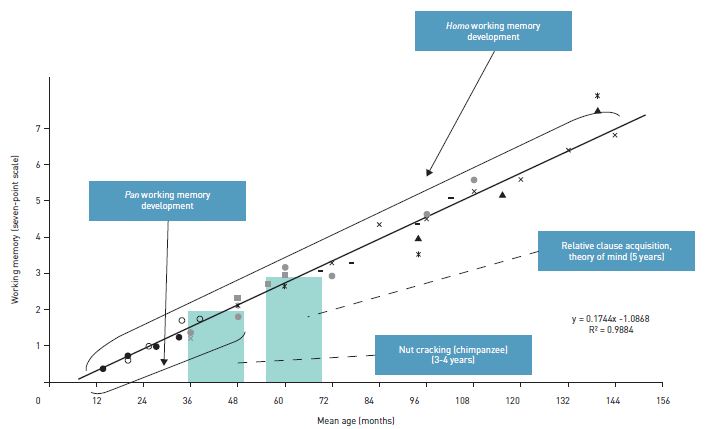

The first part of the story concerns the physical development of the human brain and its capacity to deal with an increasing number of simultaneous information sources. The core concept that is most relevant here is the evolution of short-term working memory (hereafter STWM), which determines how many different sources of information can be processed together in order to follow a particular train of thought or course of action. There are different ways to reconstruct this evolution (Read and van der Leeuw, 2008, 2009). Indirectly, it can be interpolated by comparing the STWM of chimpanzees (our closest living relatives, with whom we share a common ancestor in the evolutionary tree that produced modern humans) to that of modern human beings. Some 75% of chimpanzees are able to combine three elements (an anvil, a nut and a hammerstone) in the act of cracking the nut, which leads us to think that the STWM of chimpanzees is 2 ± 1 (because 25% of them never master this). Experiments with different ways of calculating the human capacity to combine information sources, on the other hand, seem to point to an STWM of 7 ± 2 for modern humans. This difference coincides nicely with the fact that chimpanzees reach adolescence after 3-4 years, and modern humans at age 13-14. It is therefore assumed that the growth of STWM occurs before adolescence in both species, and that the difference in age of adolescence explains the difference in STWM capacity (Figure 1, cf. Read and van der Leeuw, 2008: 1960).

Figure 1. The relationship between cognitive capacity and infant growth in Pan and in Homo sapiens sapiens. The trend line is projected from the regression of time-delay response (Diamond and Doar, 1989) on infant age. Data are rescaled for each dataset to make the trend line pass through the mean of that dataset. Working memory is scaled to STWM = 7 at 144 months. The ‘fuzzy’ vertical bars compare the age of nut cracking among chimpanzees with the age of relative clause acquisition and theory-of-mind conceptualization in humans. [Data on STWM are represented by the following symbols: • = imitation (Alp, 1994); + = time delay (Diamond and Doar, 1989); ✳ = number recall (Siegel and Ryan, 1989); x = total language score (Johnson et al., 1989); x = relative clauses (Corrêa, 1995); ■ = count label, span (Carlson et al., 2002); o = 6-month retest (Alp, 1989); ▲ = word recall (Siegel and Ryan, 1989); ● = spatial recall (Kemps et al., 2000); ♦ = relative clauses (Kidd and Bavin, 2002); – = spatial working memory (Luciana and Nelson, 1998); — = linear time delay (Diamond and Doar, 1989).]

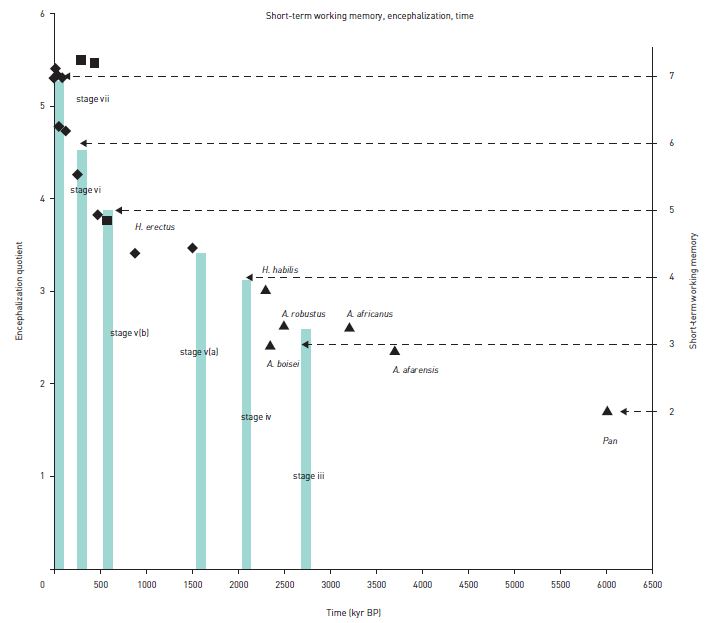

Figure 2. Graph of encephalization quotient (EQ) estimates based on hominid fossils and Pan (chimpanzees). Early hominid fossils have been identified by taxon. Each data point is the mean for hominid fossils at that time period. The height of the ‘fuzzy’ vertical bars is the hominid EQ corresponding to the date for the appearance of the stage represented by the fuzzy bar. The right vertical axis represents STWM. Data are adapted from the following: triangles: Epstein, 2002; squares: Rightmire, 2004; diamonds: Ruff et al., 2004. $\mathrm{EQ} = \text{brain mass} / (11.22 \times \text{body mass}^{0.76})$, cf. Martin, 1981.
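The EQ formula in the Figure 2 caption divides observed brain mass by the brain mass expected for an animal of that body size. A minimal sketch of the computation follows. It assumes brain mass in grams and body mass in kilograms; these units are our assumption, as the caption does not state them, and the sample inputs are hypothetical round numbers, not the fossil data plotted in Figure 2.

```python
def expected_brain_mass_g(body_mass_kg: float) -> float:
    """Brain mass expected for a given body mass on the allometric line
    11.22 * body_mass ** 0.76 (cf. Martin, 1981).
    Units (grams for brain, kilograms for body) are an assumption."""
    return 11.22 * body_mass_kg ** 0.76


def encephalization_quotient(brain_mass_g: float, body_mass_kg: float) -> float:
    """EQ = observed brain mass / expected brain mass.
    EQ > 1 means a larger brain than expected for that body size."""
    if brain_mass_g <= 0 or body_mass_kg <= 0:
        raise ValueError("masses must be positive")
    return brain_mass_g / expected_brain_mass_g(body_mass_kg)


if __name__ == "__main__":
    # Hypothetical round-number inputs for illustration only.
    for label, brain_g, body_kg in [("modern-human-like", 1350.0, 65.0),
                                    ("chimpanzee-like", 400.0, 45.0)]:
        print(f"{label}: EQ = {encephalization_quotient(brain_g, body_kg):.2f}")
```

Because the exponent 0.76 is below 1, expected brain mass grows more slowly than body mass, so EQ rather than the raw brain-to-body ratio is what allows hominids of different sizes to be compared.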

Another approach to corroborating the growth of STWM is to measure encephalization, the evolution of the brain-to-body-weight ratio of modern humans’ ancestors through time. The evolution of this ratio is based on the skeletal remains of each subspecies found and, as shown in Figure 2, corresponds nicely to the evolution of STWM as established from the way in which, and the extent to which, these ancestors were able to shape stone tools (cf. Read and van der Leeuw, 2008: 164).

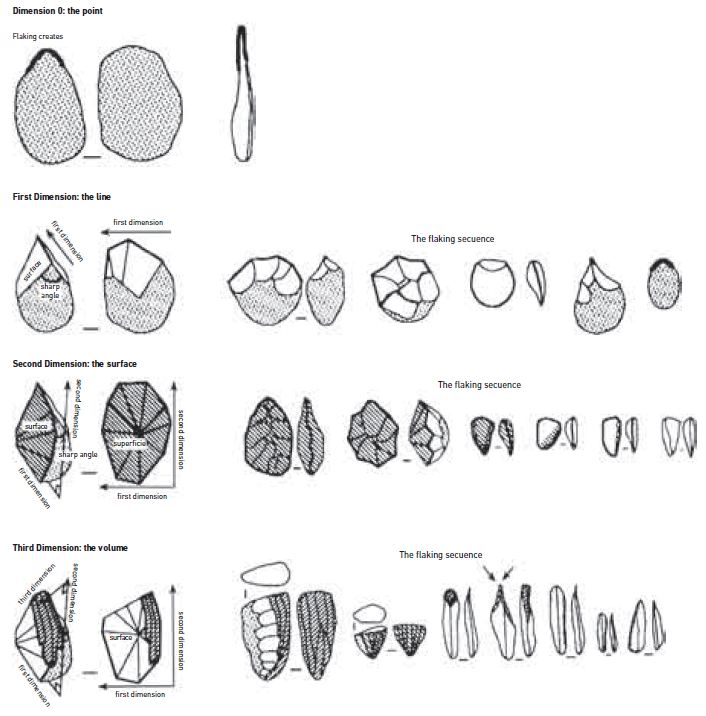

Figure 3. It took humans around 2 million years to attain the capacity to conceive of a three-dimensional object (a pebble or stone tool) in three dimensions. a. Taking a flake off at the tip of the pebble is an action in 0 dimensions, and requires STWM 3; b. successively taking off several adjacent flakes creates a (one-dimensional) line, and requires STWM 4; c. extending the line until it meets itself defines a surface by drawing a line around it, and represents STWM 4.5; distinguishing between that line and the surface it encloses implies fully working in two dimensions, and requires STWM 5; d. preparing two sides in order to remove flakes from the third side testifies to a three-dimensional conceptualization of the pebble, and requires STWM 7. (From: van der Leeuw, 2000)

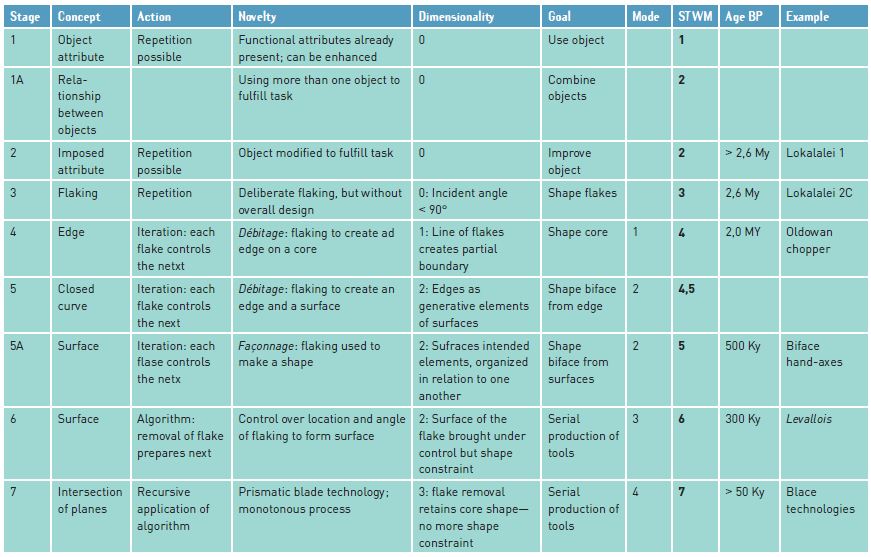

Whereas both these approaches in fact depend on extrapolation and therefore do not provide any direct proof for our thesis, the study of the way in which, and the extent to which, the various subspecies and variants preceding modern humans were able to shape stone tools does provide some direct evidence, which is summarized in Table 1. That table links the evolution of actions in stone tool making with the concepts they define, the number of dimensions and the STWM involved, and the stone tools that exemplify each stage.

Table 1. Evolution of stone tool manufacture from the earliest tools (stage 2, more than 2.6 million years ago; found at Lokalalei 1) to the complex blade technologies (stage 7, found in most parts of the world c. 50,000 BP). Columns 2-5 indicate the observations leading us to assume specific STWM capacities; column 8 (bold) indicates the stage’s STWM capacity and column 9 the approximate age of the beginning of each stage. Column 10 refers to the relevant artifact categories documenting the stages. For a more extensive explanation, see Read and van der Leeuw (2008: 1961-1964).

In order to explain the development involved, I will use an example: the mastering of three-dimensional conceptualization and manufacture of stone tools (cf. Figure 3 a-d) (Pigeot, 1991; van der Leeuw, 2000). The first tools are essentially pebbles from which, at one point of the circumference (generally where the pebble is pointed), a chip has been removed to create a sharper edge (Figure 3a). Removing the flake requires three pieces of information: the future tool from which the flake is removed, the hammerstone with which that is done, and the need to maintain the two at an angle of less than 90º at the time of the blow. Here, we therefore have evidence of STWM 3. In the next stage, this action (flaking) is repeated along the edge of the pebble. That requires control over the above three variables, and a fourth one: the succession of the blows in a line. STWM is therefore 4 (Figure 3b). Next, the edge is closed: the toolmaker goes all around the pebble until the last flake is adjacent to the first. By itself, this is not a completely new stage, and we have called it STWM 4.5. But once the closed loop is conceived as defining a surface, the knapper has two options: either to define a surface by knapping an edge around it and then taking off the centre, or to do the reverse, taking off the centre first and then refining the edge. This conceptual reversibility shows that the knapper has now integrated five dimensions, and that his or her STWM is 5 (Figure 3c). The next stage again develops sequentiality, but in a more complex way. In the so-called ‘Levallois’ technique, making one artifact serves at the same time as preparation for the next, by dividing the pebble conceptually in two parts along its edge. And finally, the knapper works completely in three dimensions, preparing two surfaces and then taking flakes off the third. At this stage, STWM 7 (Figure 3d), for the first time the knappers are able not only to work a three-dimensional piece of stone, but also to conceive of it as three-dimensional and adapt their working techniques accordingly, greatly reducing loss and increasing efficiency.
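The inference running through the knapping stages described above (the most demanding operation attested in an assemblage sets a lower bound on its makers’ STWM) can be sketched as a simple lookup. This is a deliberate simplification under stated assumptions: the operation labels are hypothetical shorthand of our own, only the STWM values stated in the text are used, and the Levallois stage is omitted because the text assigns it no number. The fuller criteria are those of Table 1.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class KnappingStage:
    operation: str   # hypothetical shorthand for the observed knapping operation
    dimensions: str  # conceptual dimensionality involved
    stwm: float      # STWM value the text associates with the operation


# STWM values as stated in the text; Levallois sequencing left out (no value given).
STAGES = [
    KnappingStage("single flake removed at one point", "0-D (point)", 3),
    KnappingStage("adjacent flakes removed in a row", "1-D (line)", 4),
    KnappingStage("row of flakes closed into a loop", "closed line", 4.5),
    KnappingStage("edge and enclosed surface treated as reversible", "2-D (surface)", 5),
    KnappingStage("two surfaces prepared to flake a third", "3-D (volume)", 7),
]


def minimum_stwm(observed_operations: Iterable[str]) -> float:
    """Lower bound on STWM implied by an assemblage: the highest STWM among
    the stages whose operation is attested; 0 if none is attested."""
    observed = set(observed_operations)
    attested = [s.stwm for s in STAGES if s.operation in observed]
    return max(attested, default=0)


if __name__ == "__main__":
    assemblage = ["single flake removed at one point",
                  "adjacent flakes removed in a row"]
    print(minimum_stwm(assemblage))
```

Taking the maximum rather than, say, the average reflects the logic of the argument: a single well-attested three-dimensional operation is enough to show the capacity, whatever simpler tools also occur alongside it.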

Closely observing the tools and other traces of human existence available around 50,000 BP indicates that, after some 2,000,000 years, people at that time could (van der Leeuw, 2000):

- distinguish between reality and conception;

- categorize based on similarities and differences;

- reason with feedback and feed-forward, and reverse sequences in time (e.g. reverse an observed causal sequence in order to infer from the result what kind of action could achieve it);

- remember and represent sequences of actions, including control loops, and conceive of such sequences that could be inserted as alternatives in manufacturing sequences;

- create basic hierarchies, such as point-line-surface-volume, or hierarchies of size or inclusion;

- conceive of relationships between a whole and its constituent parts (including reversing these relationships);

- maintain complex sequences of actions in the mind, such as between different stages of a production process;

- represent an object in a reduced set of dimensions (e.g. life-like cave paintings).

The innovation explosion: mastering matter and learning how to put the brain to best use

After 50,000 BP², and especially after around 15,000 BP, we see a true ‘innovation explosion’ occurring just about everywhere on Earth. The sheer multitude of inventions in every domain is truly astonishing, and the pace accelerates up to the present day. There is no reason to assume any further development of human STWM: the experimental evidence indicates that modern humans can deal simultaneously with at most seven, eight or sometimes nine dimensions or sources of information, and even a superficial scrutiny of modern technologies, languages and other achievements shows the wide variety of things that can be achieved with an STWM of 7±2. We would therefore argue that for this next phase, from about 50,000 BP to the present, the biology of the mind no longer imposes any constraints, and the emphasis is on acquiring the fullest possible range of techniques exploiting the available STWM capacity.

The emergence of improved technologies

We can distinguish several phases in that process. In the first, the toolkit explodes, but the gatherer-hunter-fisher mobile lifestyle remains the same. Some of the many cognitive operators that emerge in that first stage are (van der Leeuw, 2000):

- the use of completely new topologies (e.g. that of a solid around a void, such as in the case of a pot or basket);

- the use of many new materials for tool-making. Although it is difficult to prove that these materials were not used earlier, from this time onwards one observes objects in bone, as well as in wood and other perishable materials;

- the combination of different materials into one and the same tool (e.g. hafting small sharpened stone tools into a wooden or bone handle);

- the inversion of the manufacturing sequence from reductive to additive. A reductive sequence begins with a big object (a block of stone) and gains control over its shape by successively taking smaller and smaller pieces off it; an additive sequence combines tiny particles (clay, fibers) into larger, linear objects (threads, coils), then into a two-dimensional object (such as a woven cloth), which is then given shape (by sewing) to fit a three-dimensional object (a piece of clothing), etc. This implies the cognition of a wide range of scales;

- stretching and chunking the sequence of actions kept in the mind: distinguishing between (complex) preparation stages (e.g. gathering raw materials, preparing them, shaping pottery, drying, decorating, firing) yet being able to link the logic of manufacture across these stages (adapting the clay to the firing technique, etc.).

The resulting explosion of new tools characterizes the period until about 13,000 BP (in East Asia) or 10,000 BP (in the Near East). Yet the subsistence mode was still characterized by a multi-resource strategy of harvesting various foodstuffs in the environment, now including a wider range facilitated by the new toolkit, and of adapting to change (weather, availability of food) by moving around, albeit over increasingly limited distances, so as always to stay below the carrying capacity of the environment. In effect, people lacked the know-how to inter-act with their environment; they could only re-act to it. Uncontrollable change and risk were the order of the day, but people did minimize risk where they could (cf. van der Leeuw, 2000).

The first villages, agriculture and herding

In the next stage, c. 13,000-10,000 BP, the continuing innovation explosion transformed the lifestyle of many humans. The acceleration was so overwhelming that within a few thousand years the way of life of most humans on earth changed: rather than living in small groups that roamed around, people concentrated their activities in smaller territories, invented different subsistence strategies, and in some cases literally settled down in small villages (van der Leeuw, 2000, 2007 and references therein).

Together, these advances greatly increased the number of ways at people’s disposal to tackle the challenges posed by their environment. That rapidly increased our species’ capability to invent and innovate in many different domains, allowed it to meet more and more complex challenges in shorter and shorter timeframes, and thus substantially increased humans’ adaptive capacity. But the other side of the coin was that these solutions, by engaging people in the manipulation of a material world that they now partly controlled, ultimately led to new, often unexpected, challenges that required the mobilization of great effort in order to overcome them in due time.

As part of this process, a number of fundamental changes occurred. First of all, the relationship between societies and their environments became reciprocal: the terrestrial environment from now on did not only impact on society, but society also impacted on the terrestrial environment. As a result, sedentary societies tried to control environmental risk by intervening in the environment, notably by: 1. narrowing and optimizing the range of their dependencies on the environment; 2. simplifying or even homogenizing (parts of) their environments; and 3. spatial and technical diversification and specialization (cf. van der Leeuw, 2000). The new subsistence techniques introduced, including horticulture, agriculture and herding, narrowed the range of things people depended on for their subsistence. In the process, certain areas of the environment were ‘cleared’ and dedicated to the specific purpose of growing certain kinds of plants. This required investment in certain parts of the environment, devoting those areas to specific activities and delaying the rewards of the investment activities. Clearing the forest and sowing resulted only a year later in a harvest, for example.

The resulting increase of investment in the environment in turn anchored different communities more and more closely to the territory in which they chose to live. People now built permanent dwellings using the new topology (upside-down containers), and devised many other new kinds of tools and tool-making technologies facilitating the new subsistence strategies practicable in their environment (e.g. the ard, the domestication of animals, baskets and pottery for storage, pottery for boiling). Although one cannot yet speak of (full-time) ‘specialists’, certain people in a village began to dedicate more time, for example, to weaving or pottery-making, and provided the products of their work to others in exchange for some of the products these others produced. Differences in resource availability and technological know-how thus led to economic diversification and, in order to provide everyone with the things they needed, to the emergence of trade.

The symbiosis that thus emerged between different landscapes and the ways invented and constructed by human groups to deal with them, by narrowing the spectrum of adaptive options open to the individual societies concerned, drove each of them to devise more and more complex solutions, with more and more unexpected consequences that then needed to be dealt with in turn.

In keeping with my fundamental tenet that information processing is crucial to such changes, I attribute the changes outlined in this section to the beginnings of a new dynamics, in which learning moved from the individual to the group because the dimensionality of the challenges to be met increased beyond the capability of individuals to deal with them. This involved the emergence of the following feedback loop (van der Leeuw, 2007):

Problem-solving structures knowledge —> more knowledge increases the information processing capacity —> that in turn allows the cognition of new problems —> creates new knowledge —> knowledge creation involves more and more people in processing information —> increases the size of the group involved and its degree of aggregation —> creates more problems —> increases need for problem-solving —> problem-solving structures more knowledge … etc.

It enabled the continued accumulation of knowledge, and thus of information-processing capacity, which in turn enabled a concomitant increase in matter, energy and information flows through society, and thus the growth of interacting groups. But this growth was at all times constrained by the amount of information that could be communicated among the members of the group, as miscommunication would have led to misunderstandings and conflicts, and would thus have impaired the cohesion of the communities involved. In my opinion, communication stress provided the incentive for 1. improvements in the means of communication (for example by ‘inventing’ new, more precise concepts with which to communicate ideas; cf. van der Leeuw, 1982), and 2. a reduction in the search time needed to find those with whom one needed to communicate (by adopting a sedentary lifestyle).
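One common way to see why communication constrains group growth (offered here as an illustration, not as the author’s own model) is to count the potential person-to-person links in a group: with n members there are n(n−1)/2 of them, so the communication load rises much faster than group size. The sketch below assumes a hypothetical fixed “channel budget”, the number of links a group can keep working without breakdown, and finds the largest group that stays within it.

```python
def pairwise_channels(n: int) -> int:
    """Number of distinct person-to-person links in a group of n members."""
    if n < 0:
        raise ValueError("group size must be non-negative")
    return n * (n - 1) // 2


def largest_group(channel_budget: int) -> int:
    """Largest group whose pairwise links stay within a hypothetical budget
    of links that can be maintained without miscommunication."""
    n = 0
    while pairwise_channels(n + 1) <= channel_budget:
        n += 1
    return n


if __name__ == "__main__":
    for size in (5, 10, 25, 50, 100):
        print(size, pairwise_channels(size))
```

Under this assumption, doubling the budget from 45 to 90 links only raises the largest group from 10 to 13 members, which is one way of reading why more precise concepts (cheaper, more reliable links) and reduced search time (fewer links to look for) would each relax the constraint.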

Finally, as the social system diversified, and people became more dependent on each other, the risk pattern increasingly also included social stresses caused by misunderstandings and miscommunications. Handling risks therefore came to rely increasingly on social skills, and the collective invention and acceptance of organizational and other tools to maintain social cohesion.

The first towns

From this point in time, I will no longer try to point out any novel innovations or cognitive operations emerging as human societies grew in size and towns spread over the surface of the earth. Instead, I will focus on how the feedback system that drove societal growth as well as the conquest of the material world through innovation posed some major challenges. Overcoming these ultimately enabled the emergence of true ‘world systems’ such as the colonial empires of the early modern period (van der Leeuw, 2007) or the current globalized world.

Throughout the third stage, from around 7,000 BP, communication remained a major constraint, because more and more people interacted with each other as settlements grew to what we now call towns. This stage again sees the emergence of a host of innovations, such as writing, periodic markets, administration, laws, bureaucracies, and specialized full-time communities engaged in specific activities (priests, scribes, soldiers, different kinds of craftsmen and women, etc.). Many of these had to do with improving communication (such as writing and scribes), social regulation (administration, bureaucracies, laws), the harnessing of more and more resources (mining) or the exchange of objects and materials, in part over longer and longer distances (markets, long-distance traders, innovations in transportation). But as larger groups aggregated, the territory (‘footprint’, to use a modern term) upon which they depended for their material and energy needs expanded exponentially, and the effort required to transport foodstuffs and other materials did the same. As a result, energy emerged as a major constraint that would handicap the evolution of societies for millennia to come.
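Why hauling effort outruns the growth of a town can be illustrated with elementary geometry. The sketch rests entirely on simplifying assumptions of our own: a circular hinterland, a fixed and hypothetical area of land needed per person, food drawn uniformly from that disc, and effort proportional to distance carried. The mean distance from the centre of a uniform disc of radius r is 2r/3, so total effort grows as N^1.5: a tenfold increase in population implies roughly a thirtyfold increase in haulage.

```python
import math


def catchment_radius_km(population: float, area_per_person_km2: float) -> float:
    """Radius of a circular hinterland large enough to feed the population,
    assuming a fixed (hypothetical) land requirement per person."""
    area = population * area_per_person_km2
    return math.sqrt(area / math.pi)


def haulage_effort(population: float, area_per_person_km2: float) -> float:
    """Relative haulage effort: population times mean haul distance.
    For food drawn uniformly from a disc of radius r, the mean distance
    to the centre is 2r/3."""
    r = catchment_radius_km(population, area_per_person_km2)
    return population * (2.0 * r / 3.0)


if __name__ == "__main__":
    a = 0.01  # hypothetical: 1 hectare (0.01 km^2) of land per person
    for n in (1_000, 10_000, 100_000):
        print(n, round(catchment_radius_km(n, a), 1), round(haulage_effort(n, a)))
```

Real hinterlands were neither circular nor uniform, and transport by water changes the picture considerably; the point of the sketch is only that, even in the simplest case, the cost of feeding a town grows faster than the town itself.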

To deal with this constraint, an interesting core-periphery dynamic emerged to exploit that ever-growing footprint—the exchange of organization against energy. Around towns, dynamic ‘flow structures’ emerged in which organizational capacity was generated in the towns and then spread around them, extending the town’s control over a wider and wider territory; in turn, the increasing quantities of energy collected in that territory (in the form of foodstuffs and other natural resources) flowed back towards the city to feed the ever-increasing population that kept the flow structure going by innovation (creation of new organization and information-processing capacity). These ‘flow structures’ became the ‘bootstrapping’ drivers that created larger and larger agglomerations of people and the territories to go with them.

What enabled the urban populations to keep innovating, and thus to maintain the flow structures, was again the growing capacity of more and more interacting minds to identify new needs, novel functions and new categories, as well as new artifacts and challenges. Underpinning that dynamic is one that we know well in the modern world. Invention is usually (and certainly in prehistoric and early historic times) something that involves either individuals or very small teams. Hence, in its early stages it is related to a relatively small number of cognitive dimensions: it solves challenges that few people are aware of. As such inventions become the focus of attention of much larger numbers of people, they simultaneously become cognized in many more dimensions (people see more uses for them, ways to slightly improve them, etc.), and this in certain cases triggers an ‘innovation cascade’, a string of further innovations, including new artifacts, new uses of existing artifacts, and new forms of behavior and of social and institutional organization. In this process, clearly, towns and cities are more successful than rural areas because of the greater number of interacting individuals in such aggregations. This is corroborated by the finding that, when various urban metrics are scaled against population across urban systems of different sizes, quantities tied to the population itself scale linearly, energy sub-linearly and innovation capacity super-linearly (Bettencourt et al., 2006).
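The scaling result cited from Bettencourt et al. is usually expressed as a power law, Y = Y0 · N^β, where N is urban population and β the scaling exponent: β = 1 is linear, β < 1 sub-linear and β > 1 super-linear. The sketch below shows what each regime means per inhabitant. The exponents used are illustrative placeholders for the three regimes, not values fitted to any dataset.

```python
def scaled_quantity(population: float, y0: float, beta: float) -> float:
    """Urban scaling law Y = Y0 * N ** beta."""
    return y0 * population ** beta


def per_capita_change(beta: float, factor: float = 2.0) -> float:
    """Factor by which per-capita Y changes when population is multiplied
    by `factor`: (factor ** beta) / factor = factor ** (beta - 1)."""
    return factor ** (beta - 1.0)


if __name__ == "__main__":
    # Illustrative placeholder exponents for the three regimes (not fitted values).
    for regime, beta in [("linear", 1.0), ("sub-linear", 0.85), ("super-linear", 1.15)]:
        print(f"{regime:>12}: doubling N multiplies per-capita Y by "
              f"{per_capita_change(beta):.3f}")
```

Read this way, the super-linear case is the quantitative form of the argument above: each doubling of an urban system yields more than twice the innovation, because more interacting individuals bring more cognitive dimensions to bear on each invention, while sub-linear energy scaling means that larger systems need proportionally less energy per inhabitant.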

Empires

The above ‘flow structures’ kept growing (albeit with ups and downs) until, after several millennia (from about 2500 BC in the Old World, and about 500 BC in the New), they were able to cover very large areas. Examples are the prehistoric and early historic empires (the Chinese, Achaemenid, Macedonian and Roman Empires in the Old World, the Maya and Inca Empires in the New World) and later the European colonial Empires, which concentrated large numbers of people at their center and, in order to feed them, gathered treasure, raw materials, crops and many other commodities from their hinterlands. Throughout this period communication and energy remained the main constraints on cities, states and empires. Thus we see advances in the harnessing of animal energy (including slavery), wind power (for transportation in sailing vessels and for driving windmills), falling water (for mills), etc., but also in the facilitation of communication (e.g. long-distance ‘highways’ over land, the sextant and compass to facilitate navigation at sea), and in all kinds of ways to create and concentrate wealth serving to defray the costs of managing societal tensions, maintaining an administration and an army, etc.

Those costs effectively limited the extent of Empires in space and time. Tainter (1988), for example, argues convincingly that only the treasure accumulated outside the Roman Empire in the centuries before the Roman conquest enabled Rome to maintain the large armies and bureaucracies to keep its Empire. As soon as there was no more treasure to be gained by conquering, and the Empire was thrown back upon a dependency on recurrent (in essence solar) energy, he argues that it could no longer maintain the flow structure. This reduced the advantages of being part of the Empire, so that it began to lose control over its wide territory, causing people to fall back on smaller, regional or local networks. Thus disaffection, or even dispersion of the population, followed the cessation of the flows that generated the coherent socio-economic structure of an Empire in the first place.

The last three centuries

The last three centuries have seen the (provisional) culmination of the trajectory I have outlined in Part II. That trajectory shows how the constraints and opportunities afforded by the bio-social nature of our species explain a number of observed phenomena if human history is conceived in systemic terms. In that sense, these last three centuries do not differ from what went before, but they have seen an unbridled acceleration of our species’ innovative activity, initially because the ‘taming’ of fossil energy removed the energetic constraint from much human activity, and subsequently because the introduction of electronics enabled the separation of information from most of the substrates used for its transmission until then. These two developments together have engendered a ‘quantum jump’ or ‘state change’ in societal dynamics, which has been at the root of many of today’s challenges, but which also offers potential ways to deal with them that were not previously available.

The introduction of fossil energy and society’s dependence on innovation

The (for the moment) last phase of this long-term process of social evolution through innovation involves the last two and a half centuries, in which first the energy constraint was removed by the introduction of plentiful fossil energy, and more recently the communication and information-processing constraint has begun to be removed by the development of new technologies. The introduction of fossil energy first brought in its wake new technologies to enable, facilitate or reduce the cost of transportation (railroads, steamers, cars, etc.), manufacturing (steam-driven factories) and energy production itself, as well as (later) technologies to reduce the amount of energy needed to fulfill societal needs.

Although I have no clear explanation for it, I would like to point to another emergent driver that, in this period, transformed innovation from a demand-driven activity into a supply-driven one. For most of human (pre-)history, it seems that inventions either resulted from perceived needs, or were not introduced on a large scale until such a need emerged. It took, for example, roughly 1000 years after the invention of ironworking for that technique to spread throughout Europe at a fairly rapid pace (cf. Sørensen). In that case, the initial brake on the transformation of this invention into an innovation seems to have been related to the social structure of society. In the Bronze Age, hierarchies emerged that controlled wide exchange networks because they controlled the sources of the metals needed to make bronze. This was relatively easy, because accessible sources of these metals were few and far between. That is not the case with iron: it can be found in virtually every water-rich place in Europe. Once the technology to use it had spread, no one could derive riches any longer from controlling the manufacture of iron tools. The introduction of iron technology therefore enabled large numbers of people to manufacture and use much better tools and weapons, and had, in a sense, a democratizing effect.

Between the 18th and 20th centuries, and particularly in the second half of the 20th, the balance between supply and demand in innovation shifted in favor of supply. Rather than societal needs driving innovation, innovation came to drive societal needs. Companies competed to lay their hands on inventions (or developed them internally), and then created markets for them, forcing their use on society in order to enhance their profits. As a result, innovation has become endemic to our societies. Through their dependence on ever-increasing GDP and profit figures, those societies have in turn become dependent on innovation for their continued existence. This novel dynamic has major consequences for the way we might deal with the challenges of the 21st century, sustainability among them. I will come back to this in a later section.

This phenomenon emerged in a period that saw a transformation of our society's perspective on time. Until the 17th century, the prevailing view explained the present by invoking 'History', 'The Past' or 'It has always been like this'. Invoking something 'new' or 'an innovation', by contrast, was heavily frowned upon socially. With the Enlightenment this changed, ultimately leading to our current attitude, in which the 'new' is mostly preferred over the 'old', the 'proven' or the 'heritage' (Girard, 1990). Interestingly enough, this change in perspective was accompanied by the institutionalization of universities and academic disciplines as 'research crucibles'. This happened initially in the expectation that something useful would ultimately be invented, but increasingly in the belief that producing economic advantages is what research exists for.

Separating information from its material and energetic substrates

Although 'information technology' has existed for many thousands of years, it has taken the form of gestures, language, writing, accounting and many other things, including North American smoke signals and African tam-tams. The mid-20th century saw the definition of the concept of 'information' (Shannon and Weaver, 1948). Rapidly thereafter came the mechanization of information processing, initially in the domain of communication, but then also in the domains of calculation, representation and many others. The current tendency in certain quarters to characterize present-day society as the 'information society' is therefore misguided: every society since the beginning of human evolution has been an 'information society'.

We are only at the beginning of a process that will eventually harness electronic and other forms of information processing in all aspects of our thinking and our society. That process will offer many new solutions to existing challenges, and will equally create many new challenges. Clearly, therefore, we cannot yet outline the higher-level 'drivers' that may emerge from it. We can note, however, that these will again increase our society's dependence on innovation. Indeed, massive information collection and processing, together with the application of the concept of information to physical, biological and societal processes, is emerging as a new challenge. This is the NBIC 'revolution', by which we understand the encounter (and potential interaction) of nano-, bio-, information and communications technologies.

However that may be, humanity took about two million years to master 'matter', by devising ways to conceptually separate its manipulation from the time and space in which that process occurred. It took the next 7000 years to master energy, by separating it conceptually from movement and change. It then took only 200 years more to conceptualize information by separating it from its material or energetic substrate. Our collective capability to process information has therefore accelerated more or less exponentially. So has the size of Earth's human population and, more important from our perspective, the size and number of the cities that are the principal source of new inventions and innovations. Having identified the driver behind this process, we have to ask, as with any such exponential growth: “How much longer can this go on?” To answer that question, we must look at the long-term consequences of the 'innovation explosion', from the Neolithic to the present.

The challenge of the future—Innovation, Sustainability and ‘Unanticipated Consequences’

One way to introduce this topic, to which we will devote the last part of this paper, is to point out a contradiction. Innovation is seen as the way out of the present syndrome of overpopulation, looming or current resource shortages, omnipresent pollution, etc. Yet two centuries of unbridled innovation are responsible for bringing about the consumer society as well as the current sustainability challenge. One must conclude that innovation, as it is presently embedded in our societies, is hardly the panacea for the sustainability predicament that many claim it to be. That in turn prompts the question whether there are any alternatives to 'innovating ourselves out of trouble', and if so, what they could be.

It seems to me that the root of this challenge lies in the relationship between the fundamental limitations of the human mind, whether collective or individual, and the complexity of the world outside us. I would argue that, over the millennia, that relationship has changed as a result of the innovation explosion itself. In order to understand the nature of that change, we need to look at the relationship between people and their environment.

Human cognition, powerful as it may have become in dealing with the environment, is only one side of the (asymmetric) interaction between people and their environment. It is the side in which the perception of the multidimensional external world is reduced to a very limited number of dimensions. The other side of that interaction is human action on the environment, and the relationship between cognition and action is exactly what makes the gap between our needs and our capabilities so dramatic. The crucial concept here is that of 'unforeseen' or 'unanticipated' consequences. It refers to the well-known and oft-observed fact that, no matter how carefully one designs human interventions in the environment, the outcome is never exactly what was intended. It seems to me that this phenomenon arises because every human action upon the environment modifies it in many more ways than its human actors perceive. The dimensionality of the environment is simply much higher than can be captured by the human mind. In practice, this may be seen to play out wherever humans have interacted with their environment in a particular way for a long time. In each such instance, the environment ultimately becomes so degraded from the perspective of the people involved that they either move elsewhere or change the way they interact with it.

How does this happen? Imagine a group of people moving into a new environment about which they possess little knowledge, such as the European settlers in the forests of eastern North America (Cronon, 1983). After a relatively short time, they will observe challenges or opportunities in their interaction with this environment, and they will 'do something' about them. Their action is based on an impoverished perception of these challenges, consisting mainly of observations concerning the short-term dynamics involved. Yet these same actions transform the environment in unknown ways, affecting not only the short-term but also the long-term dynamics. Over time, little by little, all the frequent challenges become known and are modified by the society's interaction with the environment. Meanwhile, the unknown longer-term challenges that are thereby introduced accumulate. To put this in more abstract terms, human interaction with the environment transforms the 'risk spectrum' of the socio-environmental system. It becomes one in which unknown, long-term (centennial or millennial) risks accumulate at the expense of shorter-term risks.

Ultimately, this necessarily leads to 'time-bombs' or 'crises' in which so many unknowns emerge that the society risks being overwhelmed by the number of challenges it has to face simultaneously. It will initially deal with this by innovating faster and faster, as our society has done for the last two centuries or so. But because this only accelerates the shift in the risk spectrum, it is ultimately a battle that no society can win. There will inevitably come a time when the society must drastically change the way it interacts with the environment, or else lose its coherence. In the latter case, after a time, the whole cycle begins anew. One observes this when looking at the rise and decline of firms, cities, nations, empires or civilizations.

What is the effect of an exponential increase in information-processing capacity on this asymmetry between human understanding and human action? As information-processing capacity increases, the total number of (collectively) cognized dimensions involved in the process increases more or less commensurately. Human actions on the environment therefore affect more and more dimensions of the processes going on in that environment. The multiplier between cognized human dimensions and the unknown environmental dimensions affected by human actions is large. An exponential increase in the number of cognized dimensions therefore implies that the number of affected environmental dimensions grows even faster. This poses ever more complex environmental challenges, ever more rapidly, for humankind to deal with.

This permanent, and increasing, tension between the total cognitive capacity of a society and the complexity of its environment has itself been a major driver, if not the major driver, behind the increase in the information-processing capacity of human beings and societies. As such, it has had important consequences for the information-processing structure of the societies involved. Several of these have already been mentioned in this paper: population increase, the aggregation of human populations in villages and then cities, the invention of writing, markets, administration and other phenomena accompanying urbanization, etc. Others, however, have received little attention, such as its impact on our language and on the way we have done (and often still do) science.

Let us look at language first. Initially, as small groups lived together most of the time, humans had the opportunity and time for multi-channel communication: spoken language, gestures, body language, eye contact and other kinds of signals. This allowed for the long-term accumulation of trust and understanding, which enables the reduction and correction of a wide range of communication errors. But as the groups involved grew, the time devoted to each interaction shortened and fewer channels of communication were available. Spoken language won out as the main channel between people meeting each other infrequently and for short periods of time, mainly because it is a relatively precise way to communicate concepts.

Ultimately, as networks of communication grew even further, the need to avoid misunderstandings and errors must also have had an impact on language itself. It required the communities concerned to develop ever more precise ways of expressing themselves in ever shorter time. That impact, it seems to me, must have been visible in a proliferation of more and more, but ever narrower, concepts (categories) at any particular level of abstraction. This reduced the number of dimensions in which these concepts could be interpreted. The multiplicity of meanings attached in different contexts to the same words (or the same roots), visible in any etymological dictionary, bears testimony to this process. So does the proliferation of artifact categories through time, each with more and more precise and limited functions. Simultaneously, an increase in the number of levels of abstraction compensated for this fragmentation, so that one could still find ways to 'lump' these increasingly narrow concepts together along crosscutting dimensions. 'Information' is but one of the most recent major abstractions to be introduced.

In Western science, a similar process of fragmentation has been observable at least since the 14th century, and for very similar reasons (cf. Evernden, 1992). Throughout these centuries, science has emphasized the need to solidify as much as possible the relationship between observations and interpretations. That is, the relationship between the realm of the real, with its infinite number of dimensions, and the realm of ideas, in which only a limited number of dimensions is cognized. Much scientific explanation therefore consisted of reducing the large number of dimensions involved in the processes observed to a much more limited number. That smaller number was manageable by the (individual or collective) human brain, and could thus be shaped into a coherent and comprehensible narrative. Hence such science was generally 'reductionist'. A corollary is that, particularly in empirical science, each complex phenomenon was 'broken up' into component parts. The hope was that, once these components had been explained, they could be put together again to explain the whole phenomenon in all its complexity. This led to the same kind of fragmentation that occurred in languages in general. It is observable at the highest level in the current division of human inquiry into disciplines, sub-disciplines, specializations, etc. Each is practiced by its own community with its own epistemology, perspective, language, concepts, methods, techniques and values.

We now see that fragmentation as one of the main handicaps in our attempts to understand the full complexity of the processes going on around us. Moreover, scientific interpretations linked the phenomena investigated to processes that preceded the time at which these phenomena were observed. They did not link them to what was still to come, which could not be observed. Scientific reasoning therefore emphasized the explanation of extant phenomena in terms of chains of cause and effect and, much later, feedback loops. In both cases, it linked the progress of processes through time to their antecedent trajectory. In particular, it has emphasized thinking about “origins” rather than “emergence”, about “feedback” rather than “feed-forward”, and about “learning from the past” rather than “anticipating the future”. It is thus no surprise that 'thinking about the future' is a stepchild in our current academic and research institutions, whether one calls it 'futurology', 'forecasting', 'scenario construction' or 'foresighting'. It is principally developed in industry or government.

As a result of these tendencies, both in our societies’ communication and culture, and in our scientific research, we have now come to a point where the unanticipated consequences of our interventions in the environment threaten to overwhelm us because of their complexity. So many unknown dimensions are involved in the dynamics of our socio-natural environment that we increasingly feel we no longer have any means to understand, limit or control their effects. That feeling is experienced as a ‘crisis’, and we encounter it more and more frequently—whether in the financial domain, or in those of food security, natural hazards, the security of our societies from terrorism or other undermining activities, etc.

One could effectively define such 'crises' as temporary incapacities of our society to process the information necessary to deal adequately with the external and internal dynamics it is engaged in. From our perspective, these incapacities arise from a growing gap. On one side is the number of dimensions cognized in the society; on the other, the number of dimensions playing a role in the socio-natural dynamics it is involved in. That gap is crossing a threshold beyond which the former can no longer deal adequately with the latter. In the run-up to that threshold, a clear 'early warning' signal is that society increasingly suffers from 'short-termism'. This is a focus on the immediate challenges it encounters without taking the longer term into account: in other words, tactics come to prevail over strategy in much decision-making.

The core of the challenge seems to be that we must find ways to turn lessons from the past into lessons for the future! To do so, we must devise ways to argue coherently, and as far as possible falsifiably in Popper's (1959) sense, from the simple to the complex. This would help us better anticipate the complex consequences of our actions. It would also enable us to re-emphasize long-term, strategic thinking and a holistic vision that favors intellectual fusion between different scientific communities and perspectives. Crucially, we must acquire the capacity to increase, rather than reduce, the number of dimensions that we can harness in order to understand complex phenomena. We could then take more dimensions into account in our decisions about interventions in the environment, and thus better understand their consequences.

Conclusion: Is there a way out?

At first sight, several factors seem to challenge our capacity to fundamentally change the nature of our thinking. More specifically, they challenge our capacity to focus explicitly on the future and to extrapolate new dimensions from the ones we know at any particular point in time. These factors are our intellectual and scientific tradition, the size of our interactive population, the nature of many of our languages, the under-determination of our theories by our observations (cf. Atlan, 1992; van der Leeuw) and the limitations of our human short-term working memory. There are many examples of individuals or (small) groups of people who have nevertheless done so with some degree of success. They range from classical Greek philosophers via Leonardo da Vinci to 18th- and 19th-century science-fiction authors (such as Jules Verne or Paul Deleutre3). They have been able to design utopias or to extrapolate positively from their lifetime observations into the future, even though some of these ideas were never implemented or were only realized years or centuries later. Inventors, too, have been able to anticipate, and most of us call on our “intuition” when we need to do so.

Moreover, there are some (shy) beginnings of a wider trend in this direction. The kind of reductionist, fragmented and 'explanatory' science that resulted from these tendencies has, in the past twenty-five years, come under increasing attack from the 'Complex Systems' perspective that emerged in the 1980s (e.g. Mitchell, 2009). This perspective assumes that, in order to obtain a realistic representation of the world, we need to study emergence and feed-forward. We also need to develop a generative perspective for which amplifying the number of cognized dimensions is essential. In other quarters, 'foresighting' is spreading from the relatively limited field of industrial and economic decision-support tools to academic practitioners. These practitioners actually delve into the epistemological and other challenges that need to be met for this kind of science to flourish (Wilkinson and Eidinow, 2008; Selin, 2006). Elsewhere still, under pressure from the looming environmental challenges of the 21st century, the scientific community is beginning to look ahead at 'unanticipated consequences' and what these may imply for the challenges of the future (e.g. Ostrom, 2009). This seems to indicate that the current predicament is due more to over-investment in the long-standing reductionist approach than to anything more fundamental. At least in theory, then, it should be possible to transcend our relative incapacity to deal with the complexities of the dynamics we are involved in.

Overcoming the limitations of human STWM

Although I am by no means an expert in this field, it seems to me that the ICT revolution has indeed created the conditions for us to overcome the limitations on our cognitive capacities that are inherent in our short-term working memory. Present-day computers can deal with a very large number of dimensions and information sources in real time. They can thus overcome what at first sight appeared to be the most fundamental of the barriers mentioned above. But that capacity has not been fully exploited because of our long-standing and ubiquitous scientific and intellectual tradition. That tradition has emphasized the use of such equipment in the process of dimension reduction that provides acceptable explanations. It has not used computers as tools to increase the number of dimensions taken into account in our understanding of complex phenomena. Under the impact of complex systems science this is clearly changing (as seen, for example, in the increased use of high-dimensional agent-based models). Much more needs to be done, however, mainly in developing conceptual and mathematical tools as well as appropriate software.

Overcoming the under-determination of our theories by observations

Similarly, and with the same caveat that I am not a professional in this field, I have the impression that IT has recently undergone a revolution in two capacities. The first is the capacity to monitor processes continuously online. The second is the capacity to process and store the exponentially growing data streams generated by such monitoring. This suggests that we may indeed be on the brink of (at least partly) overcoming the under-determination of our theories by our observations, which is the corollary of the dimension reduction practiced by traditional science (Atlan, 1992). The reduction in the size and cost of monitoring equipment is quickly bringing such massive data collection within reach. Simultaneously, the development of novel data-mining techniques is helping us to make sense of the data thus collected. At the least, these techniques help us select the appropriate data to be scrutinized in order to better inform our theories.

Transforming our scientific and intellectual tradition

Although I am not among those who fall easily for panaceas, I do believe that the complex (adaptive) systems approach is a useful first step towards fundamentally transforming our scientific and intellectual tradition. That transformation would move us from studying stasis and preferring simple to complex explanations, to studying dynamics. It would emphasize emergence and an inversion of Occam's razor (increasing the number of dimensions taken into account). Clearly, we have a long way to go in this domain. Still, several developments make me moderately optimistic about our chances of transforming that tradition. These are the rapid and substantive advances in certain fields, including physics, biology and economics, and the rapid recent spread of this approach in universities in many parts of the world. They also include the growing awareness of the need for more holistic approaches in such domains as sustainability and health.

The communication challenge

The underlying communication challenge is how to communicate with an increasingly large number of partners at very variable distances other than linearly through writing or speech. In my opinion, this growth in the number and distance of communication partners was responsible for the development referred to above: narrower and narrower concepts, and the consequent fragmentation of our perspective on the world. Contrary to some, I do not think language is subject to deliberate change; it adapts itself to human needs and ideas in a 'bottom-up' process. But even if it were possible to transform the ways in which we speak and write, we would still have an essentially linear communication tool. Modern communications technologies make radically different ways of interactive communication possible, in particular the collective building of knowledge using multimedia, as in Web 2.0. The question is therefore whether these will allow us to communicate non-linearly and in more dimensions. This would entail the directed use of visuals, which can generally communicate more dimensions simultaneously than spoken or written language.

Transforming our thinking

The kind of reductionist thinking that I am referring to is so heavily ingrained and so widely spread in our culture and our kinds of science that changing it will require a major effort. Our world view, our language and our institutions all militate against such a change. Most importantly, we currently lack a coherent alternative way of thinking against which we can leverage our present-day science. By far the greatest challenge, from the perspective of human and financial capital and effort, therefore appears to me to lie in the domain of education, from early childhood through university and into adult life. The current education system in the developed world is, overall, no longer adapted to the challenges of the 21st century, among which sustainability looms so large. We have to make three shifts:

- away from knowledge acquisition aimed at question-driven research, towards challenge-focused education that aims to help deal with substantive challenges;
- from 'linear explanation' in terms of cause and effect to 'multi-dimensional projection' in terms of alternatives;
- from one-to-many teaching (in which an instructor tells students what to do, what is right and what is wrong) to many-to-many teaching in which instructors and students all interact, learn and teach.

At the same time, we must develop education systems that stimulate creativity, risk-taking and diversity rather than conformity and risk-aversion. In doing so we must harness the tools referred to above, but more than anything we must 'bend' minds towards thinking in new, uncharted ways. In this effort we are handicapped by the fact that economics, career structures, evaluations, disciplinary momentum and many other factors and dynamics are stacked against success. There is a lot of work to be done!

References

Alp, I. E. (1994), “Measuring the size of working memory in very young children: the imitation sorting task”, International Journal of Behavioral Development 17, pp. 125–141.

Atlan, H. (1992), “Self-organizing networks: weak, strong and intentional. The role of their under-determination”, La Nuova Critica, N. S., 19-20(1/2), pp. 51-70.

Bateson, G. (1972), Steps to an Ecology of Mind, New York: Ballantine.

Bettencourt, L. M. A., J. Lobo, D. Helbing, C. Kühnert and G.B. West (2007), “Growth, Innovation, Scaling and the Pace of Life in Cities”, Proceedings of the National Academy of Sciences (US) 104(17), pp. 7301-7306.

Boyd, R., and P. J. Richerson (1985), Culture and the Evolutionary Process, Chicago: University of Chicago Press.

Carlson, S. M., L. J. Moses and C. Breton (2002), “How Specific is the Relation between Executive Function and Theory of Mind? Contributions of Inhibitory Control and Working Memory”, Infant and Child Development 11, pp. 73–92.

Corrêa, L. M. S. (1995), “An alternative assessment of children’s comprehension of relative clauses”, Journal of Psycholinguistic Research 24, pp. 183–203.

Cronon, W. (1983), Changes in the Land: Indians, Colonists, and the Ecology of New England, New York: Hill and Wang.

Diamond, A. and B. Doar (1989), “The Performance of Human Infants on a Measure of Frontal Cortex Function, the Delayed-Response Task”, Developmental Psychobiology 22, pp. 271–294.

Epstein, H. T. (2002), “Evolution of the Reasoning Brain”, Behavioral and Brain Sciences 25, pp. 408–409.

Evernden, N. (1992), The Social Creation of Nature, Baltimore: Johns Hopkins Press.

Johnson, J., V. Fabian and J. Pascual-Leone (1989), “Quantitative Hardware Stages that Constrain Language Development”, Human Development, 32, pp. 245–271.

Kemps, E., S. De Rammelaere and T. Desmet (2000), “The Development of Working Memory: Exploring the Complementarity of Two Models”, Journal of Experimental Child Psychology 77, pp. 89–109.

Kidd, E. and E. L. Bavin (2002), “English-speaking Children’s Comprehension of Relative Clauses: Evidence for General-Cognitive and Language-Specific Constraints on Development”, Journal of Psycholinguistic Research, 31, pp. 599–617.

Lane, D., R. Maxfield, D. W. Read and S. E. van der Leeuw (2009), “From Population Thinking to Organization Thinking”, in D. Lane, D. Pumain, S. E. van der Leeuw and G. West (eds.), Complexity Perspectives on Innovation and Social Change, pp. 11-42, Berlin: Springer (Methodos series).

Luciana, M. and C. A. Nelson (1998), “The Functional Emergence of Prefrontally-Guided Working Memory Systems in Four-to-Eight-Year-Old Children”, Neuropsychologia 36, pp. 273–293.

Martin, R. D. (1981), “Relative Brain Size and Basal Metabolic Rate in Terrestrial Vertebrates”, Nature 293, pp. 57–60.

Mitchell, M. (2009), Complexity: a Guided Tour, New York: Oxford University Press.

Ostrom, E. (2009), “A General Framework for Analyzing Sustainability of Social-Ecological Systems”, Science 325(5939), pp. 419-422.

Pigeot, N. (1991), “Reflexions sur l’histoire technique de l’homme: De l’évolution cognitive à l’évolution culturelle”, Paléo 3, pp. 167-200.

Popper, K. (1959), The Logic of Scientific Discovery, London: Hutchinson.

Read, D. W., D. A. Lane and S. E. van der Leeuw (2009), “The Innovation Innovation”, in D. A. Lane, D. Pumain, S. E. van der Leeuw and G. West (eds.), Complexity Perspectives on Innovation and Social Change, Berlin: Springer Verlag.

Read, D. W. and S. E. van der Leeuw (2008), “Biology Is Only Part Of The Story…”, Philosophical Transactions of the Royal Society, Series B 363, pp. 1959-1968.

Read, D. W. and S. E. van der Leeuw (2009), “Biology Is Only Part Of The Story…”, in A. C. Renfrew and L. Malafouris (eds.), The Sapient Mind, Oxford: Oxford University Press, pp. 33-49.

Rightmire, G. P. (2004), “Brain Size and Encephalization in Early to Mid-Pleistocene Homo”, American Journal of Physical Anthropology 124, pp. 109–123.

Ruff, C. B., E. Trinkaus and T. W. Holliday (1997), “Body Mass and Encephalization in Pleistocene Homo”, Nature 387, pp. 173–176.

Selin, C. (2006), “Trust and the Illusive Force of Scenarios”, Futures 38(1), pp. 1-14.

Shannon, C. E. and W. Weaver (1948), “A Mathematical Theory of Communication”, Bell System Technical Journal 27, pp. 379-423 and 623-656.

Siegel, L. S. and E. B. Ryan (1989), “The Development of Working Memory in Normally Achieving and Subtypes of Learning-Disabled Children”, Child Development 60, pp. 973–980.

Tainter, J. A. (1988), The Collapse of Ancient Societies, Cambridge (UK): Cambridge University Press.

van der Leeuw, S. E. (1986), “On Settling Down and Becoming a ‘Big-Man’”, in M. A. van Bakel, R. R. Hagesteijn and P. van de Velde (eds.), Private Politics: a Multi-Disciplinary Approach to ‘Big-Man’ Systems, Leiden: Brill Academic Publishers, pp. 33-47.

van der Leeuw, S. E. (1990), “Archaeology, Material Culture and Innovation”, SubStance 62-63, pp. 92-109.

van der Leeuw, S. E. (2000), “Making Tools from Stone and Clay”, in A. Anderson and T. Murray (eds.), Australian Archaeologist: Collected Papers in Honour of Jim Allen, Coombs: Academic Publishing.

van der Leeuw, S. E. (2007), “Information Processing and its Role in the Rise of the European World System”, in R. Costanza, L. J. Graumlich and W. Steffen (eds.), Sustainability or Collapse?, Cambridge, MA: The MIT Press, Dahlem Workshop Reports, pp. 213-241.

Wilkinson, A. and E. Eidinow (2008), “Evolving Practices in Environmental Scenarios: a New Scenario Typology”, Environmental Research Letters, 3(4) 045017.

Notes

1 The distinction between humans (Homo sapiens) and modern humans (Homo sapiens sapiens) referred to here follows current custom among paleo-anthropologists. The transition is estimated to have occurred somewhere around 200,000 years BP.

2 All dates mentioned in this paper are approximate and differ between parts of the world; they are also continually subject to revision as archaeological research progresses.

3 Writing under the pseudonym Paul d’Ivoi, this French author anticipated the idea of modern telecommunications (wireless and television).
