Humans like to brag about the amount of work our species has managed to offload onto machines. Our tools, however, are only suited to the most specific tasks. None can rival the versatility of the brain, a lump of twisted flesh capable of producing movement, love, speech, pain and even angst about our place in the universe. While some technologists try to recreate specific brain skills using artificial intelligence, others have taken on the task of enhancing this organ, molding its shape and functions or extending its reach beyond the confines of the human body.

These are the challenges of neurotechnology. In order to harness the power of the brain, one must first know where to place the bridle: “We need detailed knowledge of the neurophysiology that goes on beneath the skull,” says Javier Mínguez, an engineering professor at the University of Zaragoza, Spain, and the inventor of one of the first mind-controlled wheelchairs. Neurons send near-instantaneous messages around the body through action potentials, electrical impulses that race across the cells’ surface. These telegrams are coordinated in the central nervous system, and with medical imaging techniques scientists can see which areas are activated during specific bodily tasks, such as walking. This sort of insight enabled surgeons to implant electrodes in the spine of the Swiss tetraplegic man David Mzee. At the end of 2018, Mzee managed to walk for the first time in eight years, thanks to artificial electrical stimulation of the spine.

Through ‘miracles’ such as this, neurotechnology has become a respected field in modern science. British cybernetics researcher Kevin Warwick, currently at Coventry University, has used implants in the nervous system to counter the effects of Parkinson’s disease and even for a proof-of-concept demonstration of electronic telepathy between two humans. But no matter how useful brain devices become, they will never be popular if cranial surgery is required. Present-day cerebral enhancement relies on the cheaper and more appealing non-invasive brain-machine interfaces (BMIs). These devices register brainwaves on the scalp’s surface, which are translated into mental orders directed outside the body. That is how Javier Mínguez created, in 2009, the mind-controlled wheelchair.

Non-invasive mind control

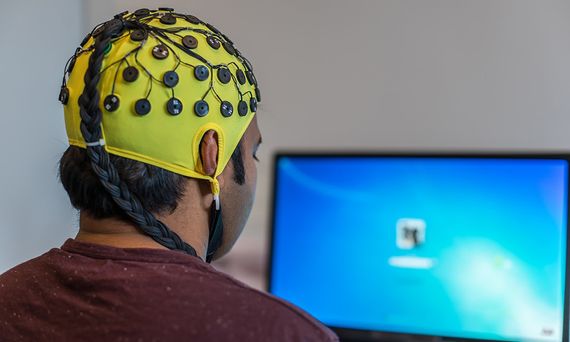

The chair’s user wears a hat-shaped electroencephalography sensor, much like the ones used by doctors to diagnose epilepsy, sleep disorders and coma. The device measures voltage fluctuations produced by synchronised electrical impulses beneath the skull. Depending on a person’s activity, these brainwaves—or neural oscillations, as experts call them—have a different origin and frequency. The machine learns to recognise such patterns, so when the user wills the wheelchair to move forward, it complies.
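To make the idea of brainwave frequencies concrete, here is a minimal sketch that finds which conventional frequency band dominates a signal. It is an illustration only, not the method used in Mínguez's wheelchair: the signal is synthetic, the band boundaries are approximate textbook values, and the names `band_power` and `dominant_band` are invented for this example.

```python
import math

# Conventional EEG frequency bands in Hz (approximate; boundaries vary by source).
BANDS = {"delta": (0.5, 4), "theta": (4, 8), "alpha": (8, 13), "beta": (13, 30)}

def band_power(samples, sample_rate, low, high):
    """Sum the discrete Fourier transform power over frequencies in [low, high) Hz."""
    n = len(samples)
    power = 0.0
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        if low <= freq < high:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            power += re * re + im * im
    return power

def dominant_band(samples, sample_rate):
    """Return the name of the band that carries the most power."""
    return max(BANDS, key=lambda name: band_power(samples, sample_rate, *BANDS[name]))

# Synthetic stand-in for one second of scalp voltage (an assumption, not real data):
# a strong 10 Hz oscillation plus a weaker 20 Hz one, sampled at 128 Hz.
RATE = 128
signal = [
    math.sin(2 * math.pi * 10 * t / RATE) + 0.3 * math.sin(2 * math.pi * 20 * t / RATE)
    for t in range(RATE)
]
print(dominant_band(signal, RATE))
```

A real interface would work with many electrodes and far noisier recordings, but the principle of summarising a voltage trace by its frequency content is the same.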

For the person using this device, the action is intuitive. Mínguez, who founded the neurotechnology company BitBrain in 2010, says “it’s the machine that must train and learn to grasp the brain signals’ meaning”. Mark Weiser, Chief Technologist at Xerox PARC in California, made the same point during the 1990s: “The more you can do by intuition, the smarter you are. The computer should extend the unconscious. Technology should create calm.” That can only be achieved by calibrating the brain-machine interface before each use. No two brains are alike, so the computer must familiarise itself with the mental patterns and emotional state of the wearer.
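One simple way to picture this calibration step is a nearest-centroid classifier: during a short session the user is asked to think each command a few times, the machine averages the resulting signal features, and later readings are matched to the closest average. This is a sketch under stated assumptions, not BitBrain's actual approach; the two-number feature vectors and the function names `calibrate` and `decode` are hypothetical.

```python
import math

def centroid(vectors):
    """Average a list of equal-length feature vectors component by component."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def calibrate(trials):
    """Build a per-user model: one average feature vector per mental command."""
    return {command: centroid(vectors) for command, vectors in trials.items()}

def decode(model, features):
    """Return the command whose calibrated average is closest to the new reading."""
    return min(model, key=lambda command: math.dist(model[command], features))

# Hypothetical calibration session: each vector is a made-up pair of signal features.
session = {
    "forward": [[0.2, 0.9], [0.3, 0.8]],
    "rest": [[0.9, 0.2], [0.8, 0.3]],
}
model = calibrate(session)
print(decode(model, [0.25, 0.7]))
```

Because the averages are computed from the wearer's own recordings, repeating `calibrate` at the start of each session adapts the model to that person and that day.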

This level of personalisation raises a question: does the brain monitor invade the user’s privacy? Not yet, says Pablo Ortega, an expert in neurotechnology at Imperial College London, England. He is quick to point out that “electroencephalograms currently tell us far less about a user than what is revealed by the same person’s clicks on Facebook, or their conversations with their phone’s voice assistant”. Given the present pace of development, however, those same brainwaves may soon carry deeper secrets.

Artificial intelligence to decipher the mind

At Imperial’s Centre for Excellence in Neurotechnology, where Ortega works, scientists are developing mind-controlled robotic arms. “We use an artificial intelligence that learns which areas of the brain are activated when someone wants to produce a force with each hand,” he explains. If one or both arms are connected to a brain-machine interface, the algorithm knows when to activate each prosthetic limb in response to the user’s thoughts. Crucially, this process does not require the researchers to label every type of brainwave in advance.
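Learning without labels can be illustrated with k-means clustering, a standard unsupervised technique that groups similar readings on its own. The article does not say which algorithm Ortega's team uses, so this is a stand-in, and the data points (pairs of activity levels at two hypothetical sensors) are invented for the example.

```python
import math

def kmeans(points, k, iterations=10):
    """Group points into k clusters without being told what any point means."""
    # Deterministic start: use the first k points as initial centres.
    centres = [list(p) for p in points[:k]]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda j: math.dist(p, centres[j]))
            clusters[nearest].append(p)
        for j, members in enumerate(clusters):
            if members:  # keep the old centre if a cluster ends up empty
                centres[j] = [sum(c) / len(members) for c in zip(*members)]
    labels = [min(range(k), key=lambda j: math.dist(p, centres[j])) for p in points]
    return centres, labels

# Made-up readings: activity at hypothetical sensor A and sensor B for six attempts.
readings = [[0.9, 0.1], [0.1, 0.9], [0.8, 0.2], [0.2, 0.8], [0.85, 0.15], [0.15, 0.85]]
centres, labels = kmeans(readings, k=2)
print(labels)
```

The algorithm only discovers that the readings fall into two groups; deciding that one group means "left arm" and the other "right arm" is a separate step.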

The applications of this mature technology range far beyond medicine and healthcare. “Once the machine can decode different mental commands, those can be put to any task,” Ortega says. In fact, mere months after Javier Mínguez presented his pioneering mind-controlled wheelchair to the world, he reached another milestone in neurotechnology: controlling a telepresence robot with the brain. He only had to reroute the brainwaves from the wheelchair’s sensor to a robot in a different city, which received the signals in real time over the Internet.

Nowadays, brain-machine interfaces can be used to control operating systems and even to type on a computer with predictive text. “The bottleneck is squeezing all the letters of the alphabet out of the electroencephalogram’s signal,” Ortega explains. For all their virtues, non-invasive techniques still lack sensitivity. The company BitBrain produces sleek diadem-shaped brain sensors that rest elegantly on the user’s head (long gone are the days of shower-hats and electrodes stuck to the scalp with gels), but these devices still can’t compete with the resolution of a brain implant. Mínguez says they “detect the sum of thousands of cells beneath the surface”.

For Ortega, surface brainwaves and inner-brain readings are “worlds apart”. He explains it like this: “Imagine you are on the top of the Eiffel Tower in Paris, trying to listen to 30,000 people shouting at you from the base”. That is the equivalent of reading an electroencephalogram. “It’s not the same as going down to interview those people one by one,” which would equate to the resolution of a surgical implant. Despite this limitation, the technology is advancing in leaps and bounds, with recent applications in cognitive therapy and enhancement, remote control for drones, neuromarketing and even assisted driving. This last application, developed by Japanese firm Nissan, involves cars that will soon learn to anticipate their drivers’ decisions, since brainwaves can give away imminent actions, like a slam on the brakes, fractions of a second before they are carried out. The technology already exists; it just needs a streamlined production model to make it out of the lab and into our homes.
