Introduction

In my last submission, “The Emergence of the Age of Artificial Intelligence (AI): Part 1”, I looked at examples of symbol-manipulating programs in AI. Such programs operate by using explicitly stored symbols that are logically manipulated during program execution.

Another class of AI software, called Artificial Neural Networks (ANNs), does not use explicit knowledge stored as rules of operation. Instead, ANNs use implicit knowledge that is encoded in numeric parameters, called weights, and distributed over many connections. Unlike symbolic AI, ANNs have “black box” characteristics because they cannot explain their reasoning in the way that symbolic AI programs can. Nevertheless, they have become the dominant AI paradigm because of their “machine learning” capabilities. The learning paradigm underlying ANNs resembles human learning. For example, ANNs exhibit plasticity in that they can adapt to new tasks because the strength of their connections can change. ANNs also draw on something known as Hebb’s Rule, which says that the connection between two neurons is reinforced every time they are active together. This is often stated as “neurons that fire together wire together”, which is one reason why practice makes perfect.

- Artificial Neural Networks (ANNs)

ANNs learn from large amounts of training data. Their implicit knowledge is encoded in numeric parameters, called weights, that are distributed throughout the network. There are often tens of thousands of these weights, and they are calibrated as each example is read by the software. The concepts behind ANNs are modelled on the workings of the biological human brain.

- The Biological Neuron

The human brain consists of special cells called neurons. Such cells are special because they have parts called dendrites and axons which enable them to communicate with other cells. Signals are propagated from one neuron to another by complex electrochemical reactions. There are estimated to be about 100 billion neurons in a typical human brain. The neurons themselves are organised into highly interconnected groups called networks, so the human brain can be viewed as a collection of neural networks. In an artificial network, by analogy, learning is accomplished by using a “training set” of example cases, which is repeatedly analysed in order to extract patterns from the data. The network can then be applied to other cases of the same type, in which it should be able to identify the same patterns and thus discriminate between cases.

- The Perceptron

A single artificial neuron (called a perceptron) works as follows. Each cell receives a number of inputs from neighbouring cells, and each input has a weight (a numerical value) that represents the strength of that connection. The weights are assigned initial values by the developer before the examples are analysed, and are then adjusted as learning proceeds. The cell multiplies each input by its weight, sums the results, and fires (is activated) if this sum exceeds a threshold value, which is again assigned by the developer.

There are four inputs in this example. This can be expressed formally as follows, given that the inputs are x1, …, x4 with weights w1, …, w4:

- If x1 w1 + x2 w2 + x3 w3 + x4 w4 > y (where y is some threshold value, usually greater than or equal to 0.5)

then the neuron is activated; otherwise the neuron is not activated.
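The activation rule above can be sketched in a few lines of Python. This is only an illustration: the function name `perceptron_fires` and the input and weight values are made up for the example, and the default threshold of 0.5 is simply the value mentioned in the text.

```python
def perceptron_fires(inputs, weights, threshold=0.5):
    """Return True if the weighted sum of the inputs exceeds the threshold."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    # Multiply each input by its weight and add up the results.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return weighted_sum > threshold


# Four inputs, as in the example; these numbers are hypothetical.
inputs = [1, 0, 1, 1]
weights = [0.2, 0.4, 0.1, 0.3]

if perceptron_fires(inputs, weights):
    print("neuron is activated")
else:
    print("neuron is not activated")
```

Here the weighted sum is roughly 0.6, which exceeds the threshold of 0.5, so the neuron is activated.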

Layers

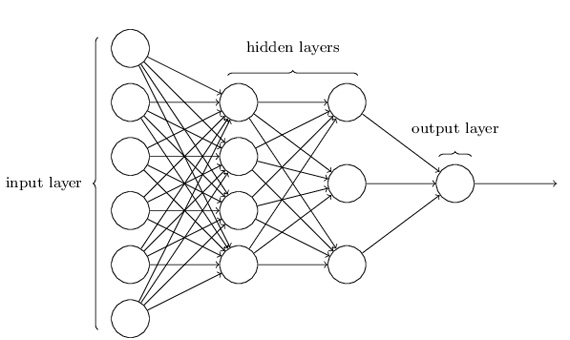

In a perceptron, there is only one weight for each input and only one output. This means that a single-layer perceptron can classify only linearly separable patterns, that is, cases that can be divided by a single straight line (or its higher-dimensional equivalent). Without getting too technical, a perceptron, or a combination of them connected in one layer, does not have the expressive power to model many real problems. In a multi-layer network, however, there are many weights, each of which contributes to more than one output. That is why multi-layer networks are used in practical problem solving: they can model the system at the required level of complexity. Fig. 2 displays a segment of a multi-layer ANN.
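A standard illustration of this limit, not taken from the article itself, is the exclusive-or (XOR) pattern: the output should be 1 when exactly one of two inputs is 1, and no single perceptron can produce it. The sketch below shows how adding one hidden layer solves the problem. The weights and thresholds are chosen by hand for the example rather than learned, and the names `step` and `xor_network` are hypothetical.

```python
def step(weighted_sum, threshold):
    """Threshold activation: 1 if the sum exceeds the threshold, else 0."""
    return 1 if weighted_sum > threshold else 0


def xor_network(x1, x2):
    # Hidden layer: one node behaves like OR, the other like AND.
    h_or = step(x1 * 1.0 + x2 * 1.0, 0.5)
    h_and = step(x1 * 1.0 + x2 * 1.0, 1.5)
    # Output node: fires when the OR node is on but the AND node is off.
    return step(h_or * 1.0 + h_and * -1.0, 0.5)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_network(a, b))
```

Each node here is still an ordinary threshold unit; it is the extra layer that makes the pattern expressible.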

Supervised Learning

In general, supervised learning algorithms work with the system programmer (or developer) telling the ANN what the correct answer is as each example is fed into the system. The ANN determines its weights in such a way that, given the input data, it produces the desired output. It is then repeatedly given more examples, one by one, with the weights adjusted in order to produce outputs closer to what is expected. Given a large enough training set, the weights will become “fine tuned” so as to predict the likely output reasonably well. This means that even when the system is fed a small number of noisy or incomplete examples, their impact will be small and the robustness of the ANN will be preserved. Supervised learning in this example works by providing each row of data for the training set, including the output column, and comparing the expected output with the actual output. The weights are then adjusted so that the outputs become closer to those expected. Three things must be chosen in advance: initial values for the weights, a threshold value for the comparison with the output, and the amount by which the weights are adjusted when required (often called the learning rate).
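The training loop described above can be sketched with the classic perceptron learning rule. This is a minimal illustration under stated assumptions, not the method of any particular system: the training rows (the logical AND of two inputs), the initial weights of zero, the threshold of 0.5, and the adjustment amount of 0.1 are all made up for the example, as are the names `predict` and `train_perceptron`.

```python
def predict(inputs, weights, threshold):
    """Actual output: 1 if the weighted sum exceeds the threshold, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total > threshold else 0


def train_perceptron(rows, initial_weights, threshold=0.5,
                     learning_rate=0.1, max_epochs=100):
    """Adjust the weights until every row gives the expected output."""
    weights = list(initial_weights)
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, expected in rows:
            # Compare the expected output with the actual output.
            error = expected - predict(inputs, weights, threshold)
            if error != 0:
                mistakes += 1
                # Nudge each weight in the direction that reduces the error.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
        if mistakes == 0:
            break  # every example is now classified correctly
    return weights


# Each row holds the inputs followed by the expected output (logical AND).
rows = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights = train_perceptron(rows, initial_weights=[0.0, 0.0])

for inputs, expected in rows:
    print(inputs, "expected", expected, "actual", predict(inputs, weights, 0.5))
```

With these settings the weights stop changing after a few passes through the four rows, at which point the actual output matches the expected output for each one.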

Unsupervised Learning

With unsupervised learning, the ANN receives only the input data and no information about the expected output. In this type of learning, the ANN must discover patterns in the data for itself. Deep learning algorithms are a recent development that can do this through a combination of massive numbers of examples and several network layers, which requires a great deal of computational power. The rise of “big data” techniques makes the former possible because data in forms such as sound, text, and images can be mined daily from so many sources, such as social networking, retailing, and business. This means that, for example, networks can be fed images without being told much about them and discover features for themselves, such as perhaps the edges of a physical object. Rather than the developer identifying features, the network is trained by feeding it data and scoring how well it performs. The network uses layers of nodes that look for features at different levels of abstraction.

Deep learning systems are so called because the learning algorithms operate in several (deep) layers. Each layer processes data from its predecessor and passes its output to the next layer. The number of layers can vary. For example, GoogLeNet uses 22 layers in its computer vision system for object recognition. Deep learning systems have had a huge impact on the AI field in recent years.
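The way data flows from one layer to the next can be sketched as follows. This shows only the layer-by-layer pass, not how a deep network is trained. The three small layers, their weights, and the sigmoid activation function are assumptions made for the illustration, and the names `layer_output` and `forward` are hypothetical.

```python
import math


def sigmoid(value):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-value))


def layer_output(inputs, layer_weights):
    """layer_weights holds one list of input weights per node in the layer."""
    return [sigmoid(sum(x * w for x, w in zip(inputs, node_weights)))
            for node_weights in layer_weights]


def forward(inputs, layers):
    """Pass the data through each layer in turn."""
    activations = list(inputs)
    for layer_weights in layers:
        # The output of one layer becomes the input of the next.
        activations = layer_output(activations, layer_weights)
    return activations


# Hypothetical weights for a tiny three-layer network.
layers = [
    [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]],      # layer 1: 3 inputs, 2 nodes
    [[1.0, -1.0], [0.4, 0.6], [-0.7, 0.2]],    # layer 2: 2 inputs, 3 nodes
    [[0.3, 0.3, 0.3]],                         # layer 3: 3 inputs, 1 node
]
output = forward([1.0, 0.5, -1.0], layers)
print(output)
```

A real deep network follows the same pattern with far more layers and nodes, and with weights that are learned from data rather than written by hand.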

Applications of ANNs

ANN technology has been used successfully for many years. Common applications during the last twenty years include programs that detect email spam, approve mortgage and loan applications, and support medical diagnosis. These applications used supervised learning algorithms. The success of ANNs stems from the way that they often provide insights beyond human capabilities, such as recognising patterns that only machine analysis could reveal. For example, Chase Manhattan Bank used an ANN to examine data about the use of stolen credit cards. It discovered that the most suspicious sales were for women’s shoes costing between $40 and $80. This is one of a number of ANN applications that attempt to extract value from databases, an activity known as data mining, by discovering patterns in the data that lead to improved decision making. Data mining techniques are frequently used in other areas of retailing. For example, Market Basket Analysis (MBA) is a technique predicated on the theory that customers who buy certain groups of products are likely to buy other types of products. When such a pattern is detected, it may be in the retailer’s interest to change the layout of the store so that these products are adjacent, in order to increase sales.
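The idea behind Market Basket Analysis can be illustrated with simple counting. The sketch below does not use an ANN. It computes two measures commonly used in this kind of analysis: support (how often a group of products appears) and confidence (how often the second product is bought when the first is). The baskets are invented for the example and the function names are hypothetical.

```python
def support(baskets, items):
    """Fraction of baskets that contain every item in `items`."""
    items = set(items)
    return sum(1 for basket in baskets if items <= set(basket)) / len(baskets)


def confidence(baskets, if_bought, then_bought):
    """Of the baskets containing `if_bought`, the fraction that also
    contain `then_bought`."""
    base = support(baskets, if_bought)
    both = support(baskets, set(if_bought) | set(then_bought))
    return both / base if base else 0.0


# Made-up shopping baskets for illustration.
baskets = [
    {"bread", "butter", "jam"},
    {"bread", "butter"},
    {"bread", "milk"},
    {"milk", "eggs"},
    {"bread", "butter", "milk"},
]

print("support(bread, butter):", support(baskets, {"bread", "butter"}))
print("confidence(bread -> butter):", confidence(baskets, {"bread"}, {"butter"}))
```

In this invented data, three of the four baskets containing bread also contain butter, which is the kind of pattern that might prompt a retailer to place the two products together.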

However, in recent years, ANNs using unsupervised algorithms have made a massive impact on the field of AI. Deep learning ANNs are being used in many domains, including image recognition, medical applications, driverless cars, and robotics. Among the most prominent current uses are intelligent personal assistant programs for smartphones and similar devices, such as Amazon Alexa, Microsoft Cortana, and Apple Siri. Their features vary, but they will typically recognise voice input and enable interaction, music playback, setting reminders, spoken links to customer database contacts, and more. Furthermore, Apple is said to be incorporating a dedicated AI chip in its future generation of smartphones. This means that individual user behaviour can be learned, leading to improvements in battery life and device performance. Deep learning ANNs are a rapidly growing field, with applications likely to become ubiquitous in the years to come.
