A historical perspective

Classically, information has been considered a matter of human-to-human transactions. Throughout history, however, this concept has been broadened, not so much by the development of mathematical logic as by technological development. A substantial change came with the arrival of the electric telegraph in the 19th century. "Sending" went from being a strictly material act to a broader concept, as many anecdotes make clear. Among the most frequent are the attempts of many people to send material objects by telegram, and the anger of customers who claimed that the telegraph operator had not sent their message when he handed the message form back to them.

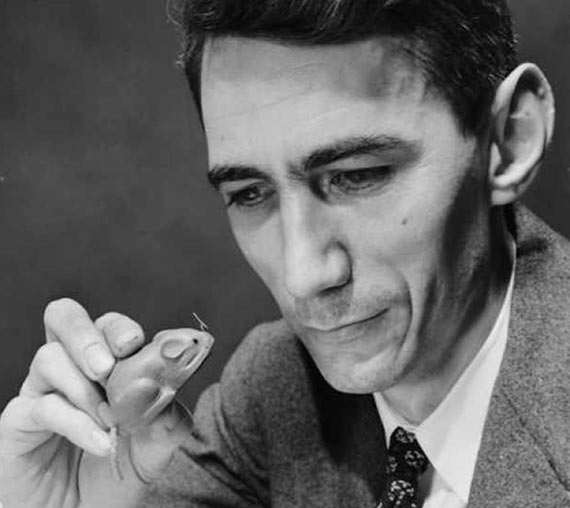

Today, “information” is an abstract concept grounded in information theory, created by Claude Shannon in the mid-twentieth century. However, it is computer technology that has contributed most to making the concept of “bit” something totally familiar. Moreover, concepts such as virtual reality, based on information processing, have become everyday terms.

The point is that information is ubiquitous in natural processes and in fields such as physics, biology and economics, to the extent that these processes can be described by mathematical models and, ultimately, by information processing. This makes us wonder: What is the relationship between information and reality?

Information as a physical entity

It is evident that information emerges from physical reality, as computer technology demonstrates. The question is whether information is fundamental to physical reality or simply a product of it. In this respect, there is evidence of a close relationship between information and energy.

Thus, the Shannon-Hartley theorem of information theory, which gives the maximum capacity of a noisy communication channel, can be used to derive the minimum amount of energy required to transmit a bit when the noise is thermal: kT ln 2, where k is the Boltzmann constant and T the absolute temperature. This value is known as the Shannon limit. In a different way, and in order to determine the energy consumption of computation, Rolf Landauer established the minimum amount of energy needed to erase a bit, a result known as Landauer's principle. Its value, also kT ln 2, coincides exactly with the Shannon limit, and both depend only on the absolute temperature of the medium.

These results make it possible to determine the maximum capacity of a communication channel and the minimum energy a conventional (irreversible) computer needs to perform a given task. In both cases they reveal how inefficient current systems are, since their performance is extremely far from the theoretical limits. But in this context, the really important point is that the Shannon-Hartley theorem is a strictly mathematical development in which information is ultimately encoded in physical variables, which leads us to think that information is something fundamental in what we define as reality.

Both cases show the relationship between energy and information, but they are not conclusive in determining the nature of information. What is clear is that for a bit to emerge and be observed at the scale of classical physics, a minimum amount of energy, kT ln 2, is required. So the observation of information is tied to the absolute temperature of the environment.
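The coincidence between the two limits can be made explicit with a short derivation. It assumes an additive white Gaussian noise channel of bandwidth B carrying data at rate R with energy E_b per bit, whose one-sided noise spectral density takes the classical thermal value N_0 = kT; the symbols R, E_b, N_0 and η are introduced here only for illustration.

```latex
\begin{aligned}
R &\le C = B \log_2\!\left(1 + \frac{S}{N}\right), \qquad S = E_b R, \quad N = N_0 B \\
\eta = \frac{R}{B} &\le \log_2\!\left(1 + \eta \frac{E_b}{N_0}\right)
\;\Longrightarrow\; \frac{E_b}{N_0} \ge \frac{2^{\eta} - 1}{\eta} \\
\frac{2^{\eta} - 1}{\eta} &= \frac{e^{\eta \ln 2} - 1}{\eta} \ge \ln 2
\;\Longrightarrow\; E_b \ge N_0 \ln 2 = kT \ln 2 \\
E_{\text{erase}} &\ge kT \ln 2 \qquad \text{(Landauer's principle)}
\end{aligned}
```

The inequality uses e^x − 1 ≥ x, and the bound is approached only as the spectral efficiency η tends to zero, that is, at very low data rates per unit of bandwidth.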

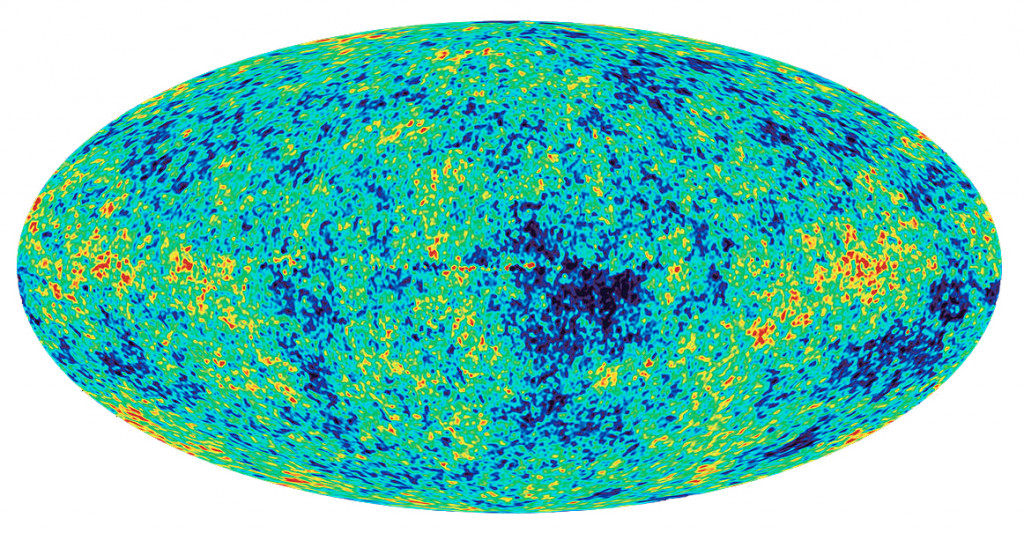

This behavior is fundamental to the process of observation, as becomes evident when experimenting with physical phenomena. A representative example is the measurement of the cosmic microwave background radiation left over from the Big Bang, which requires the satellite's detectors to be cooled with liquid helium. The same is true of many infrared sensors used for night vision, which are cooled, for example, by Peltier cells. By contrast, this is not necessary in a conventional camera, since the radiation reaching the sensor from the scene is much higher than the thermal noise level of the image sensor.
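To give a sense of scale for the cooling argument, the following Python sketch evaluates Landauer's limit kT ln 2 and the classical thermal noise power kTB at room temperature (300 K) and near the boiling point of liquid helium (about 4.2 K), together with the Shannon-Hartley capacity and the minimum E_b/N_0 it implies for a given spectral efficiency. The function names are hypothetical, and the classical kTB noise model is an assumption: it neglects quantum corrections that become significant when hf approaches kT, as happens for millimetre-wave detectors at a few kelvin.

```python
import math

# Boltzmann constant in J/K (exact by definition in the 2019 SI)
K_B = 1.380649e-23


def landauer_limit(temperature_k: float) -> float:
    """Minimum energy in joules to erase one bit at temperature T: k*T*ln 2."""
    return K_B * temperature_k * math.log(2)


def thermal_noise_power(temperature_k: float, bandwidth_hz: float) -> float:
    """Classical available thermal (Johnson-Nyquist) noise power k*T*B in watts."""
    return K_B * temperature_k * bandwidth_hz


def shannon_capacity(bandwidth_hz: float, snr: float) -> float:
    """Shannon-Hartley capacity in bit/s for a linear (not dB) signal-to-noise ratio."""
    return bandwidth_hz * math.log2(1 + snr)


def min_eb_over_n0(spectral_efficiency: float) -> float:
    """Minimum Eb/N0 (linear) at spectral efficiency eta = R/B: (2**eta - 1) / eta."""
    return (2 ** spectral_efficiency - 1) / spectral_efficiency


if __name__ == "__main__":
    # Compare room temperature with a detector cooled by liquid helium (approximate value)
    for label, t in [("room, 300 K", 300.0), ("liquid He, ~4.2 K", 4.2)]:
        print(f"{label}: kT ln2 = {landauer_limit(t):.3e} J, "
              f"noise in 1 MHz = {thermal_noise_power(t, 1e6):.3e} W")

    # As eta shrinks, the minimum Eb/N0 approaches ln 2 from above
    for eta in (1.0, 0.1, 0.001):
        print(f"eta = {eta}: min Eb/N0 = {min_eb_over_n0(eta):.6f} (ln 2 = {math.log(2):.6f})")
```

At 300 K, kT ln 2 is about 2.87 × 10⁻²¹ J; cooling to about 4.2 K lowers both this per-bit floor and the noise power by a factor of roughly 70, which illustrates why very weak signals such as the microwave background call for cryogenic detectors.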

This shows that information emerges from physical reality. But we can go further: since information is the basis for describing natural processes, something that cannot be observed cannot be described. In short, every observable is based on information, as is clearly evident in the mechanisms of perception.

From the emerging information it is possible to build mathematical models that hide the underlying reality, suggesting a functional structure of irreducible layers. A paradigmatic example is the classical theory of electromagnetism, which accurately describes classical electromagnetic phenomena without relying on the existence of the photon, and from which the existence of photons cannot be inferred. This can generally be extended to all physical models.

Another indication that information is a fundamental entity of what we call reality is the impossibility of transmitting information faster than light, which would make reality a non-causal and inconsistent system. From this point of view, therefore, information is subject to the same physical laws as energy. And considering phenomena such as particle entanglement, we can ask: How does information flow at the quantum level?

Is information the essence of reality?

Based on these clues, we could hypothesize that information is the essence of reality in each of the functional layers in which it manifests itself. For example, space-time is always observed indirectly, through the properties of matter-energy, so we could consider it nothing more than the emergent information of a more complex underlying reality. This gives an idea of why the vacuum remains one of the great enigmas of physics. This kind of argument leads us to ask: What is reality, and what do we mean by it?

From this perspective, we can ask what conclusions we could reach by analyzing what we define as reality from the point of view of information theory and, in particular, of algorithmic information theory and computability theory, without losing sight of the knowledge provided by the different disciplines that study reality, especially physics.
