In recent years, although physics has not experienced the sort of revolutions that took place during the first quarter of the twentieth century, the seeds planted at that time are still bearing fruit and continue to engender new developments. This article looks at some of them, beginning with the discovery of the Higgs boson and gravitational radiation. A deeper look reveals the additional need to address other discoveries where physics reveals its unity with astrophysics and cosmology. These include dark matter, black holes, and multiple universes. String theory and supersymmetry are also considered, as is quantum entanglement and its uses in the area of secure communications (quantum cryptography). The article concludes with a look at the presence and importance of physics in a scientifically interdisciplinary world.

Physics is considered the queen of twentieth-century science, and rightly so, as that century was marked by two revolutions that drastically modified its foundations and ushered in profound socioeconomic changes: the special and general theories of relativity (Albert Einstein, 1905, 1915) and quantum physics, which, unlike relativity, cannot be attributed to a single figure as it emerged from the combined efforts of a large group of scientists. Now, we know that revolutions, whether in science, politics, or customs, have long-range effects that may not be as radical as those that led to the initial break, but can nonetheless lead to later developments, discoveries, or ways of understanding reality that were previously inconceivable. That is what happened with physics once the new basic theories were completed. In the case of quantum physics, we are referring to quantum mechanics (Werner Heisenberg, 1925; Paul Dirac, 1925; Erwin Schrödinger, 1926). In Einstein’s world, relativistic cosmology rapidly emerged and welcomed as one possible model of the Universe the experimental discovery of the Universe’s expansion (Edwin Hubble, 1929). Still, the most prolific “consequences-applications” emerged in the context of quantum physics. In fact, there were so many that it would be no exaggeration to say that they changed the world. There are too many to enumerate here, but it will suffice to mention just a few: the construction of quantum electrodynamics (c. 1949), the invention of the transistor (1947), which could well be called “the atom of globalization and digital society”, and the development of particle physics (later called “high-energy physics”), astrophysics, nuclear physics, and solid-state or “condensed matter” physics.

The second half of the twentieth century saw the consolidation of these branches of physics, but we might wonder whether important novelties eventually stopped emerging and everything boiled down to mere developments, what Thomas Kuhn called “normal science” in his 1962 book, The Structure of Scientific Revolutions. I hasten to add that the concept of “normal science” is complex and may lead to error: the development of the fundamentals (the “hard core,” to use the term introduced by Imre Lakatos) of a scientific paradigm, that is, of “normal science,” can open new doors to knowledge of nature and is, therefore, of the greatest importance. In this article, I will discuss the decade between 2008 and 2018, and we will see that this is what has happened in some cases during the second decade of the twenty-first century, long after the “revolutionary years” of the early twentieth century.

The Discovery of the Higgs Boson

One of the most celebrated events in physics during the last decade was the confirmation of a theoretical prediction made almost half a century ago: the existence of the Higgs boson. Let us consider the context that led to this prediction.

High-energy physics underwent an extraordinary advance with the introduction of particles whose name was proposed by one of the scientists responsible for their introduction: Murray Gell-Mann. The existence of these quarks was theorized in 1964 by Gell-Mann and George Zweig. Until they appeared, protons and neutrons had been considered truly basic and unbreakable atomic structures whose electric charge was an indivisible unit. Quarks did not obey this rule, as they were assigned fractional charges. According to Gell-Mann and Zweig, hadrons (the particles subject to strong interaction) are made up of two or three quarks and antiquarks of types called u (up), d (down), and s (strange), whose electric charges are, respectively, +2/3, −1/3, and −1/3 of the magnitude of an electron’s charge (in fact, there can be two types of hadrons: baryons, such as protons, neutrons, and hyperons, and mesons, which are particles whose masses have values between those of an electron and a proton). Thus, a proton is made up of two u quarks and one d, while a neutron consists of two d quarks and one u. They are, therefore, composite structures. Since then, other physicists have proposed the existence of three more quarks: charm (c; 1974), bottom (b; 1977), and top (t; 1995). To characterize this variety, quarks are said to have six flavors. Moreover, each of these six can be of three types, or colors: red, yellow (or green), and blue. In addition, for each quark there is an antiquark. (Of course, names such as color, flavor, up, and down do not represent the reality we normally associate with such concepts, although in some cases they have a certain logic, as is the case with color.)
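
The charge bookkeeping described above can be checked with a few lines of Python. This is only an illustrative sketch: the table `QUARK_CHARGE` and the function `hadron_charge` are hypothetical names introduced here, and charges are expressed in units of the elementary charge, with the signs +2/3 for u and −1/3 for d and s.

```python
from fractions import Fraction

# Electric charge of each quark type, in units of the elementary charge.
# Exact fractions avoid floating-point rounding in the sums below.
QUARK_CHARGE = {
    "u": Fraction(2, 3),
    "d": Fraction(-1, 3),
    "s": Fraction(-1, 3),
}


def hadron_charge(quarks: str) -> Fraction:
    """Sum the charges of the quarks listed in a string such as 'uud'."""
    total = Fraction(0)
    for quark in quarks:
        total += QUARK_CHARGE[quark]
    return total


proton_charge = hadron_charge("uud")   # two up quarks and one down quark
neutron_charge = hadron_charge("udd")  # one up quark and two down quarks

print("proton:", proton_charge)
print("neutron:", neutron_charge)
```

With these assignments the proton comes out with one unit of charge and the neutron with none, which is why fractional quark charges are compatible with the whole-number charges observed for hadrons.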

Ultimately, quarks have color but hadrons do not: they are white. The idea is that only the “white” particles are observable directly in nature. Quarks are not, as they are “confined,” that is, associated to form hadrons. We will never be able to observe a free quark. Now, in order for quarks to remain confined, there must be forces among them that differ considerably from electromagnetic or other forces. As Gell-Mann put it (1995: 200): “Just as the electromagnetic force among electrons is mediated by the virtual exchange of photons, quarks are linked to each other by a force that arises from the exchange of other types: gluons (from the word, glue) bear that name because they stick quarks together to form observable white objects such as protons and neutrons.”

About a decade after the introduction of quarks, a new theory—quantum chromodynamics—emerged to explain why quarks are so strongly confined that they can never escape from the hadronic structures they form. Coined from chromos, the Greek word for color, the term chromodynamics alludes to the color of quarks, while the adjective quantum indicates that it meets quantum requirements. Quantum chromodynamics is a theory of elementary particles with color, which is associated with quarks. And, as these are involved with hadrons, which are the particles subject to strong interaction, we can affirm that quantum chromodynamics describes that interaction.

So quantum electrodynamics and quantum chromodynamics function, respectively, as quantum theories of electromagnetic and strong interactions. There was also a theory of weak interactions (those responsible for radioactive processes such as beta radiation, the emission of electrons in nuclear processes), but it had some problems. A more satisfactory quantum theory of weak interaction arrived in 1967 and 1968, when US scientist Steven Weinberg and British-based Pakistani scientist Abdus Salam independently proposed a theory that unifies electromagnetic and weak interactions. Their model included ideas proposed by Sheldon Glashow in 1960. The Nobel Prize for Physics that Weinberg, Salam, and Glashow shared in 1979 reflects this work, especially after one of the predictions of their theory—the existence of “weak neutral currents”—was corroborated experimentally in 1973 at CERN, the major European high-energy laboratory.

Electroweak theory unified the description of electromagnetic and weak interactions, but would it be possible to move further along this path of unification and discover a formulation that would also include the strong interaction described by quantum chromodynamics? The answer arrived in 1974, and it was yes. That year, Howard Georgi and Sheldon Glashow introduced the initial ideas that came to be known as Grand Unification Theories (GUT).

The combination of these earlier theories constituted a theoretical framework for understanding what nature is made of, and it turned out to have extraordinary predictive capacities. Accordingly, two ideas were accepted: first, that elementary particles belong to one of two groups, bosons or fermions, depending on whether their spin is an integer or a half-integer (photons are bosons, while electrons are fermions), and that these groups obey two different statistics (ways of “counting” groupings of the same sort of particle): Bose-Einstein statistics and Fermi-Dirac statistics. Second, that all of the Universe’s matter is made up of aggregates of three types of elementary particles: electrons and their relatives (particles called muons and taus), neutrinos (electronic, muonic, and tauonic neutrinos), and quarks, as well as the quanta associated with the fields of the four forces that we recognize in nature (remember that in quantum physics the wave-particle duality signifies that a particle can behave like a field and vice versa): the photon for electromagnetic interaction, Z and W particles (gauge bosons) for weak interaction, gluons for strong interaction, and, while gravity has not yet been included in this framework, supposed gravitons for gravitational interaction. The subgroup formed by quantum chromodynamics and electroweak theory (that is, the theoretical system that includes relativist theories and quantum theories of strong, electromagnetic, and weak interactions) is especially powerful, given the balance between predictions and experimental proof.
This came to be known as the Standard Model, but it had a problem: explaining the origin of the mass of the elementary particles appearing therein called for the existence of a new particle, a boson whose associated field would permeate all space, “braking,” so to speak, particles with mass so that, through their interaction with this field (the Higgs field), they showed their mass (it particularly explains the great mass possessed by W and Z gauge bosons, as well as the idea that photons have no mass because they do not interact with the Higgs field). The existence of such a boson was predicted, theoretically, in three articles published in 1964, all three in the same volume of Physical Review Letters. The first was signed by Peter Higgs (1964a, b), the second by François Englert and Robert Brout (1964), and the third by Gerald Guralnik, Carl Hagen, and Thomas Kibble (1964a). The particle they predicted was called the “Higgs boson.”

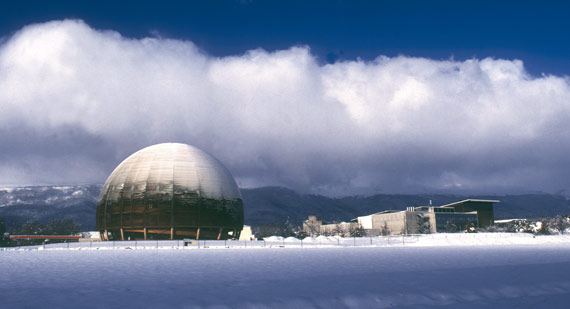

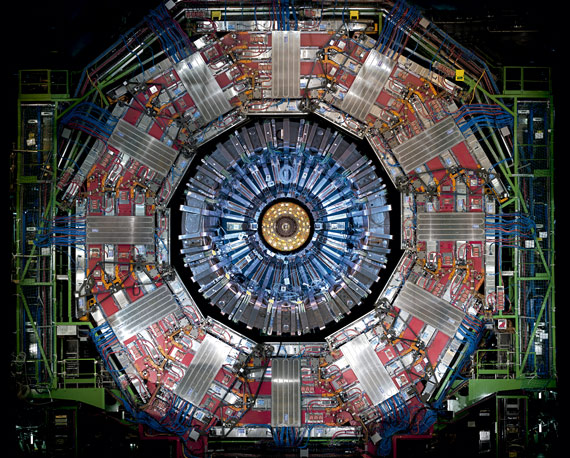

Detecting this supposed particle called for a particle accelerator capable of reaching sufficiently high energies to produce it, and it was not until many years later that such a machine came into existence. Finally, in 1994, CERN approved the construction of the Large Hadron Collider (LHC), which was to be the world’s largest particle accelerator, with a twenty-seven-kilometer ring surrounded by 9,600 magnets of different types. Of these, 1,200 were two-pole superconductors that function at minus 271.3ºC, which is even colder than outer space, and is attained with the help of liquid helium. Inside that ring, guided by the magnetic field generated by “an escort” of electromagnets, two beams of protons would be accelerated until they were moving in opposite directions very close to the speed of light. Each of these beams would circulate in its own tube, inside of which an extreme vacuum would be maintained, until it reached the required level of energy, at which point the two beams would be made to collide. The theory was that some of these collisions would produce Higgs bosons. The most serious problem, however, was that this boson almost immediately breaks down into other particles, so detecting it called for especially sensitive instruments. The detectors designed and constructed for the LHC are called ATLAS, CMS, ALICE, and LHCb, and are towering monuments to the most advanced technology.

Following construction, the LHC was first tested by circulating a proton beam on September 10, 2008. The first proton collisions were produced on March 30, 2010, producing a total energy of 7·10¹² eV (that is, 7 tera-electron volts; TeV), an energy never before reached by any particle accelerator. Finally, on July 4, 2012, CERN publicly announced that it had detected a particle with an approximate mass of 125·10⁹ eV (or 125 giga-electron volts; GeV) whose properties strongly suggested that it was a Higgs boson (the Standard Model does not predict its mass). This was front-page news in almost all newspapers and news broadcasts around the world. Almost half a century after its theoretical prediction, the Higgs boson’s existence had been confirmed. It is therefore no surprise that the 2013 Nobel Prize for Physics was awarded to Peter Higgs and François Englert “for the theoretical discovery of a mechanism that contributes to our understanding of the origin of mass of subatomic particles, and which recently was confirmed through the discovery of the predicted fundamental particle, by the ATLAS and CMS experiments at CERN’s Large Hadron Collider,” as the Nobel Foundation’s official announcement put it.

Clearly, this confirmation was cause for satisfaction, but there were some who would have preferred a negative outcome: that the Higgs boson had not been found where the theory expected it to be (that is, with the predicted mass). Their argument, and it was a good one, was expressed by US theoretical physicist and science writer Jeremy Bernstein (2012 a, b: 33) shortly before the discovery was announced: “If the LHC confirms the existence of the Higgs boson, it will mark the end of a long chapter of theoretical physics. The story reminds me of that of a French colleague. A certain parameter had been named after him, so it appeared quite frequently in discussions about weak interactions. Finally, that parameter was measured and the model was confirmed by experiments. But when I went to congratulate him, I found him saddened that his parameter would no longer be talked about. If the Higgs boson failed to appear, the situation would become very interesting because we would find ourselves in serious need of inventing a new physics.”

Nonetheless, the fact, and triumph, is that the Higgs boson does exist, and has been identified. But science is always in motion, and, in February 2013, the LHC stopped operations in order to make adjustments that would allow it to reach 13 TeV. On April 12, 2018, it began its new stage with the corresponding proton-collision tests. This involved seeking unexpected data that reveal the existence of new laws of physics. For the time being, however, we can say that the Standard Model works very well, and that it is one of the greatest achievements in the history of physics, an accomplishment born of collective effort to a far greater degree than quantum mechanics and electrodynamics, let alone special and general relativity.

Despite its success, however, the Standard Model is not, and cannot be “the final theory.” First of all, it leaves out gravitational interaction, and second, it includes too many parameters that have to be determined experimentally. These are the fundamental, yet always uncomfortable whys. “Why do the fundamental particles we detect even exist? Why are there four fundamental interactions, rather than three, five or just one? And why do these interactions exhibit the properties (such as intensity and range of action) they do?” In the August 2011 issue of the American Physical Society’s review, Physics Today, Steven Weinberg (2011: 33) reflected upon some of these points, and others:

Of course, long before the discovery of neutrino masses, we knew of something else beyond the standard model that suggests new physics at masses a little above 10¹⁶ GeV: the existence of gravitation. And there is also the fact that one strong and two electroweak coupling parameters of the standard model, which depend only logarithmically on energy, seem to converge to a common value at an energy of the order of 10¹⁵ GeV to 10¹⁶ GeV.

There are lots of good ideas on how to go beyond the standard model, including supersymmetry and what used to be called string theory, but no experimental data yet to confirm any of them. Even if governments are generous to particle physics to a degree beyond our wildest dreams, we may never be able to build accelerators that can reach energies such as 10¹⁵ to 10¹⁶ GeV. Some day we may be able to detect high-frequency gravitational waves emitted during the era of inflation in the very early universe, that can tell us about physical processes at very high energy. In the meanwhile, we can hope that the LHC and its successors will provide the clues we so desperately need in order to go beyond the successes of the past 100 years.

Weinberg then asks: “What is all this worth? Do we really need to know why there are three generations of quarks and leptons, or whether nature respects supersymmetry, or what dark matter is? Yes, I think so, because answering this sort of question is the next step in a program of learning how all regularities in nature (everything that is not a historical accident) follow from a few simple laws.”

In this quote by Weinberg we see that the energy level at which this “new physics” should clearly manifest, 10¹⁵ to 10¹⁶ GeV, is very far from the 13 TeV, that is, the 13·10³ GeV that the revamped LHC should reach. So far, in fact, that we can perfectly understand Weinberg’s observation that “we may never be able to build accelerators that can reach energies such as 10¹⁵ to 10¹⁶ GeV.” But Weinberg also pointed out that by investigating the Universe it might be possible to find ways of attaining those levels of energy. He knew this very well, as in the 1970s he was one of the strongest advocates of joining elementary particle physics with cosmology. In that sense, we should remember his book, The First Three Minutes: A Modern View of the Origin of the Universe (1977), in which he strove to promote the mutual aid that cosmology and high-energy physics could and in fact did obtain by studying the first instants after the Big Bang. For high-energy physics that “marriage of convenience” was a breath of fresh air.
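
To get a feeling for how wide that gap is, the two energy scales can be compared directly. The following Python sketch uses only the figures quoted in the text; the names `TEV_IN_GEV`, `lhc_energy_gev`, and `orders_of_magnitude_gap` are hypothetical and introduced purely for illustration.

```python
import math

TEV_IN_GEV = 1_000.0  # 1 TeV = 10^3 GeV

# Energy of the revamped LHC as quoted in the text: 13 TeV, expressed in GeV.
lhc_energy_gev = 13 * TEV_IN_GEV

# Range of energies at which the text places the "new physics", in GeV.
new_physics_range_gev = (1e15, 1e16)


def orders_of_magnitude_gap(target_gev: float, reference_gev: float) -> float:
    """Return log10 of the ratio between a target and a reference energy."""
    return math.log10(target_gev / reference_gev)


for scale_gev in new_physics_range_gev:
    ratio = scale_gev / lhc_energy_gev
    gap = orders_of_magnitude_gap(scale_gev, lhc_energy_gev)
    print(f"{scale_gev:.0e} GeV is {ratio:.1e} times the LHC energy "
          f"(about {gap:.1f} orders of magnitude)")
```

Under these figures the gap is roughly eleven to twelve orders of magnitude, which makes it easier to see why the article turns next to cosmological observations rather than to larger accelerators.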

Rather than the style and techniques that characterized elementary particle physics in the 1970s, 1980s, and 1990s, Weinberg was referring to something quite different: the physics of gravitational waves or radiation. Besides its other differences, gravitational radiation’s theoretical niche does not lie in quantum physics, but rather in the theory that describes the only interaction that has yet to fit quantum requisites: the general theory of relativity, where the world of basic physics mixes with those of cosmology and astrophysics. And in that plural world, there has also been a fundamental advance over the last decade.

Gravitational Radiation Exists

Years of intense mental effort began in 1907, with the identification of the so-called “equivalence principle” as a key element for constructing a relativist theory of gravitation. After those years, which included many dead ends, in November 1915 Albert Einstein completed the structure of what many consider physics’ most elegant theoretical construction: the general theory of relativity. This is a “classical” theory, in the sense that, as I pointed out above, it does not include the principles of quantum theory, even though there is consensus about the need for all theories of physics to share those principles. Still, Einstein’s relativist formulation of gravitation has successfully passed every single experimental test yet conceived. One of its predictions is the existence of gravitational waves, which is usually said to date from 1916, as that is when Einstein published an article concluding that they do, indeed, exist. That work was so limited, however, that Einstein returned to it some years later. In 1936, he and collaborator Nathan Rosen prepared a manuscript titled “Do Gravitational Waves Exist?” in which they concluded that, in fact, they do not. There were, however, errors in that work, and the final published version (Einstein and Rosen, 1937) no longer rejected the possibility of gravitational waves.

The problem of whether they really exist (essentially, the problem of how to detect them) lasted for decades. No one put more time and effort into detecting them than Joseph Weber, from the University of Maryland, who began in 1960. He eventually came to believe that he had managed to detect them, but such was not the case. His experiment used an aluminum cylinder with a diameter of one meter and a weight of 3.5 tons, fitted with piezoelectric quartz devices to detect the possible distortion of the cylinder when gravitational waves passed through it. When we compare this instrument with the one finally used for their detection, we cannot help but admire the enthusiasm and ingenuousness that characterized this scientist, who died in 2000 without knowing whether his lifelong work was correct or not. Such is the world of science, an undertaking in which, barring exceptions, problems are rarely solved by a single scientist, are often fraught with errors, and take a very long time indeed.

The detection of gravitational waves, which required measuring a distortion so small that it amounts to a small fraction of the size of an atom, finally occurred in the last decade, when B. P. Abbott and colleagues (Abbott et al., 2016) employed a US system called LIGO (Laser Interferometer Gravitational-Wave Observatory) that consisted of two observatories 3,000 kilometers apart (the use of two made it possible to identify false signals produced by local effects), one in Livingston (Louisiana) and the other in Hanford (Washington). The idea was to use interferometric systems with two perpendicular arms in vacuum conditions with an optical path of two or four kilometers for detecting gravitational waves through the minute movements they produce in mirrors as they pass through them. On February 11, 2016, a LIGO representative announced that they had detected gravitational waves and that they corresponded to the collision of two black holes, which thus also constituted a new confirmation of the existence of those singular cosmic entities. While it did not participate in the initial detection (it did not then have the necessary sensitivity, but it was being improved at that time), there is another major interferometric laboratory dedicated to the detection of gravitational radiation: Virgo. Born of a collaboration among six European countries (Italy and France at the helm, followed by Holland, Hungary, Poland, and Spain), it is located near Pisa and has agreements with LIGO. In fact, in the “founding” article, the listing of authors, B. P. Abbott et al., is followed by the statement: “LIGO Scientific Collaboration and Virgo Collaboration.” Virgo soon joined this research with a second round of observations on August 1, 2017.
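
The smallness of the distortion involved can be illustrated with a rough estimate. The sketch below is not taken from the article: it assumes a dimensionless strain of about 10⁻²¹ (a commonly quoted order of magnitude for the 2016 signal) and an atomic size of about 10⁻¹⁰ meters, both of which are assumptions introduced here, together with the four-kilometer arm length mentioned above. The names `ASSUMED_STRAIN`, `ASSUMED_ATOM_SIZE_M`, and `arm_length_change` are hypothetical.

```python
import math

ARM_LENGTH_M = 4_000.0       # four-kilometer interferometer arm, as in the text
ASSUMED_STRAIN = 1e-21       # assumption: order of magnitude of the strain
ASSUMED_ATOM_SIZE_M = 1e-10  # assumption: rough diameter of an atom, in meters


def arm_length_change(strain: float, arm_length_m: float) -> float:
    """Approximate change in arm length, taking strain as (change / length)."""
    return strain * arm_length_m


delta_m = arm_length_change(ASSUMED_STRAIN, ARM_LENGTH_M)
fraction_of_atom = delta_m / ASSUMED_ATOM_SIZE_M

print(f"change in arm length: {delta_m:.1e} m")
print(f"fraction of an atomic diameter: {fraction_of_atom:.1e}")
```

Under these assumed values the mirrors move by a few times 10⁻¹⁸ meters, tens of millions of times less than the size of an atom, which gives a sense of why detection took decades of instrumental development.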

The detection of gravitational radiation has opened a new window onto the study of the Universe, and it will certainly grow wider as technology improves and more observatories like LIGO and Virgo are established. This is a situation comparable to what occurred in 1930 when Karl Jansky’s pioneering radio astronomy (astronomy based on radio waves with wavelengths between a few centimeters and a few meters) experiments radically broadened our knowledge of the cosmos. Before that, our research depended exclusively on the narrow band of wavelengths of the electromagnetic spectrum visible to the human eye. In fact, things have moved quite quickly in this sense. On October 16, 2017, LIGO and Virgo (Abbott et al., 2017) announced that on August 17 of that same year they had detected gravitational radiation from the collision of two neutron stars with masses between 1.17 and 1.60 times the mass of our Sun (remember that neutron stars are extremely dense and small, with a radius of around ten kilometers, comparable to a sort of giant nucleus formed exclusively by neutrons united by the force of gravity). It is particularly interesting that 1.7 seconds after the signal was received, NASA’s Fermi space telescope detected gamma rays from the same part of space in which that cosmic collision had occurred. Later, other observatories also detected them. Analysis of this radiation has revealed that the collision of those two stars produced chemical elements such as gold, silver, platinum, and uranium, whose “birthplaces” had been previously unknown.

The detection of gravitational waves also reveals one of the characteristics of what is known as Big Science: the article in which their discovery was proclaimed (Abbott et al., 2016) coincided with the LIGO announcement on February 11 and was signed by 1,036 authors from 133 institutions (of its sixteen pages, six are occupied by the list of those authors and institutions).

The importance of LIGO’s discovery was recognized in 2017 by the awarding of the Nobel Prize for Physics in two parts. One half of the prize went to Rainer Weiss, who was responsible for the invention and development of the laser interferometry technique employed in the discovery. The other half was shared by Kip Thorne, a theoretical physicist specialized in general relativity who worked alongside Weiss in 1975 to design the project’s future guidelines and remains associated with him today; and Barry Barish, who joined the project in 1994 and reorganized it as director. (In 2016, this prize had been awarded to David Thouless, Duncan Haldane, and Michael Kosterlitz, who used techniques drawn from a branch of mathematics known as topology to demonstrate the existence of previously unknown states, or “phases,” of matter, for example, superconductors and superfluids, which can exist in thin sheets—something previously considered impossible. They also explained “phase transitions,” the mechanism that makes superconductivity disappear at high temperatures.)

Black Holes and Wormholes

The fact that the gravitational waves first detected in LIGO came from the collision of two black holes also merits attention. At the time, there were already numerous proofs of the existence of these truly surprising astrophysical objects (the first evidence in that sense arrived in 1971, thanks to observations made by instruments installed on a satellite that the United States launched on December 12, 1970, and since then, many more have been identified, including those at the nucleus of numerous galaxies, one of which is our own Milky Way). It is worth remembering that in the 1970s many scientists specializing in general relativity thought that black holes were nothing more than “mathematical ghosts” generated by some solutions to Einstein’s theory and thus dismissible. After all, the equations in a physics theory that describes an area of reality can include solutions that do not exist in nature. Such is the case, for example, with relativist cosmology, which includes multiple possible universes. As it happens, black holes do exist, although we have yet to understand such fundamental aspects as where mass goes when they swallow it. The greatest advocates of the idea that black holes are the inevitable consequence of general relativity and must therefore exist were Stephen Hawking and Roger Penrose, who was initially trained in pure mathematics. Their arguments were first proposed in a series of works published in the 1960s, and they were later followed by other scientists, including John A. Wheeler (the director of Thorne’s doctoral dissertation) who, in fact, invented the term “black hole.” Moreover, before his death on March 14, 2018, Hawking had the satisfaction of recognizing that this new confirmation of the general theory of relativity, to whose development he had dedicated so much effort, also constituted another proof of the existence of black holes. 
Given that no one—not even Penrose or Wheeler (also now dead)—had contributed as much to the physics of black holes as Hawking, if the Nobel Foundation’s statutes allowed its prizes to be awarded to a maximum of four rather than three individuals, he would have been a fine candidate. (In 1973, he presented what is considered his most distinguished contribution: a work maintaining that black holes are not really so “black,” because they emit radiation and therefore may end up disappearing, although very slowly. This has yet to be proven.) But history is what it is, not what some of us might wish it were.

In the text quoted above, Weinberg suggests that: “Some day we may be able to detect high-frequency gravitational waves emitted during the era of inflation in the very early universe, that can tell us about physical processes at very high energy.” This type of gravitational wave has yet to be detected, but it may happen quite soon, because we have already observed the so-called “wrinkles in time,” that is, the minute irregularities in the cosmic microwave background that gave rise to the complex structures, such as galaxies, now existing in the Universe (Mather et al., 1990; Smoot et al., 1992). We may recall that this occurred thanks to the Cosmic Background Explorer (COBE), a satellite placed in orbit 900 kilometers above the Earth in 1989. Meanwhile, the future of gravitational-radiation astrophysics promises great advances, one of which could be the identification of cosmological entities as surprising as black holes: the “wormholes,” whose name was also coined by John Wheeler. Simply put, wormholes are “shortcuts” in the Universe, like bridges that connect different places in it. Take, for example, two points in the Universe that are thirty light-years apart (remember, a light-year is the distance a ray of light can travel in one year). Given the curvature of the Universe, that is, of space-time, there could be a shortcut or bridge between them so that, by following this new path, the distance would be much less: possibly just two light-years, for example. In fact, the possible existence of these cosmological entities arose shortly after Albert Einstein completed the general theory of relativity. In 1916, Viennese physicist Ludwig Flamm found a solution to Einstein’s equations in which such space-time “bridges” appeared.
Almost no attention was paid to Flamm’s work, however, and nineteen years later Einstein and one of his collaborators, Nathan Rosen, published an article that represented physical space as formed by two identical “sheets” connected along a surface they called “bridge.” But rather than thinking in terms of shortcuts in space—an idea that, in their opinion, bordered on the absurd—they interpreted this bridge as a particle.

Decades later, when the general theory of relativity abandoned the timeless realm of mathematics in which it had previously been cloistered, and technological advances revealed the usefulness of understanding the cosmos and its contents, the idea of such shortcuts began to be explored. One result obtained at that time was that, if they indeed exist, it is for a very short period of time—so short, in fact, that you cannot look through them. In terms of travel, they would not exist long enough to serve as a shortcut from one point in the Universe to another. In his splendid book, Black Holes and Time Warps (1994), Kip Thorne explains this property of wormholes, and he also recalls how, in 1985, he received a call from his friend, Carl Sagan, who was finishing work on a novel that would also become a film, Contact (1985). Sagan had little knowledge of general relativity, but he wanted the heroine of his story, astrophysicist Eleanor Arroway (played by Jodie Foster in the film), to travel rapidly from one place in the Universe to another via a black hole. Thorne knew this was impossible, but to help Sagan, he suggested replacing the black hole with a wormhole: “When a friend needs help,” he wrote in his book (Thorne 1994, 1995: 450, 452), “you are willing to look anywhere for it.” Of course, the problem of their very brief lifespan continued to exist, but to solve it, Thorne suggested that Arroway was able to keep the wormhole open for the necessary time by using “an exotic matter” whose characteristics he more-or-less described. According to Thorne, “exotic matter might possibly exist.” And in fact, others (among them, Stephen Hawking) had reached the same conclusion, so the question of whether wormholes can be open for a longer period of time than originally thought has led to studies related to ideas that make sense in quantum physics, such as fluctuations in a vacuum: considering space as if, on an ultramicroscopic scale, it were a boiling liquid.

Simply put, wormholes are “shortcuts” in the Universe, like bridges that connect different places in it. For example, if two points in the Universe are thirty light-years apart, the curvature of the Universe (of space-time) might permit the existence of a shortcut between them—possibly only two light-years in length

Another possibility recently considered comes from a group of five scientists at Louvain University, the Autonomous University of Madrid-CSIC, and the University of Waterloo, whose article in Physical Review D (Bueno, Cano, Goelen, Hertog, and Vercnocke, 2018) proposes that the gravitational radiation detected by LIGO, which was interpreted as coming from the collision of two black holes, might have a very different origin: the collision of two rotating wormholes. Their idea rests on the existence of a border, or event horizon, around black holes, which makes the gravitational waves produced by a collision such as the one announced in 2016 die away in a very short period of time. According to those scientists, this would not happen in the case of wormholes, where such event horizons do not exist. There, the waves should reverberate, producing a sort of “echo.” Such echoes were not detected, but that may be because the instrumentation was unable to register them, or was not prepared to do so. This is a problem to be solved in the future.

Multiple Universes

Seriously considering the existence of wormholes—“bridges” in space-time—might seem like entering a world in which the border between science and science fiction is not at all clear, but the history of science has shown us that nature sometimes proves more surprising than even the most imaginative human mind. So, who really knows whether wormholes might actually exist? After all, before the advent of radio astronomy, no scientist could even imagine the existence of astrophysical structures such as pulsars or quasars. Indeed, the Universe itself, understood as a differentiated entity, could end up losing its most fundamental characteristic: its unicity. Over the last decade scientists have given increasingly serious consideration to a possibility that arose as a way of understanding the collapse of the wave function, the fact that, in quantum mechanics, what finally decides which one of a system’s possible states becomes real (and how probable this occurrence is) is observation itself, as before that observation takes place, all of the system’s states coexist. The possibility of thinking in other terms was presented by a young doctoral candidate in physics named Hugh Everett III. Unlike most of his peers, he was unconvinced by the Copenhagen interpretation of quantum mechanics so favored by the influential Niels Bohr, especially its strange mixture of classical and quantum worlds. The wave function follows its quantum path until subjected to measurement, which belongs to the world of classical physics, at which point it collapses. Everett thought that such a dichotomy between a quantum description and a classical one constituted a “philosophical monstrosity.”1 He therefore proposed discarding the postulated collapse of the wave function and trying to include the observer in that function.

It is difficult to express Everett’s theory in just a few words. In fact, John Wheeler, who directed his doctoral dissertation, had trouble accepting all its content and called for various revisions of his initial work, including shortening the first version of his thesis and limiting the forcefulness of some of his assertions, despite the fact that he recognized their value. Here, I will only quote a passage (Everett III, 1957; Barrett and Byrne (eds.), 2012: 188–189) from the article that Everett III (1957) published in Reviews of Modern Physics, which coincides with the final version of his doctoral dissertation (successfully defended in April 1957):

We thus arrive at the following picture: Throughout all of a sequence of observation processes there is only one physical system representing the observer, yet there is no single unique state of the observer […] Nevertheless, there is a representation in terms of a superposition […] Thus with each succeeding observation (or interaction), the observer state ‘branches’ into a number of different states. Each branch represents a different outcome of the measurement and the corresponding eigenstate for the object-system state. All branches exist simultaneously in the superposition after any given sequence of observations.

In this quote, we encounter what would become the most representative characteristic of Everett’s theory. But it was Bryce DeWitt, rather than Everett, who promoted it. In fact, DeWitt recovered and modified Everett’s theory, turning it into “the many-worlds interpretation” (or multiverses), in a collection of works by Everett that DeWitt and Neill Graham edited in 1973 with the title The Many-Worlds Interpretation of Quantum Mechanics (DeWitt and Graham [eds.], 1973). Earlier, DeWitt (1970) had published an attractive and eventually influential article in Physics Today that presented Everett’s theory under the provocative title “Quantum Mechanics and Reality.” Looking back at that text, DeWitt recalled (DeWitt-Morette, 2011: 95): “The Physics Today article was deliberately written in a sensational style. I introduced terminology (‘splitting’, multiple ‘worlds’, and so on.) that some people were unable to accept and to which a number of people objected because, if nothing else, it lacked precision.” The ideas and the version of Everett’s theory implicit in DeWitt’s presentation, which have been maintained and have even flourished in recent times, are that the Universe’s wave function, which is the only one that really makes sense according to Everett, splits with each “measuring” process, giving rise to worlds and universes that then split into others in an unstoppable and infinite sequence.

In his Physics Today article, DeWitt (1970: 35) wrote that: “No experiment can reveal the existence of ‘other worlds.’ However, the theory does have the pedagogical merit of bringing most of the fundamental issues of measurement theory clearly into the foreground, hence providing a useful framework for discussion.” For a long time (the situation has begun to change in recent years) the idea of multiverses was not taken very seriously, and some even considered it rather ridiculous, but who knows whether it will become possible, at some future time, to imagine an experiment capable of testing the idea that other universes may exist, and, if they do, whether the laws of physics would be the same as in our Universe, or others. Of course, if they were different, how would we identify them?

Dark Matter

Until the end of the twentieth century, scientists thought that, while there was still much to learn about its contents, structure, and dynamics, we knew what the Universe is made of: “ordinary” matter of the sort we constantly see around us, consisting of particles (and radiation, or quanta) that are studied by high-energy physics. In fact, that is not the case. A variety of experimental results, such as the internal movement of some galaxies, have demonstrated the existence of matter of an unknown type called “dark matter,” as well as something called “dark energy,” which is responsible for the Universe expanding even faster than expected. Current results indicate that about five percent of the Universe consists of ordinary matter, twenty-seven percent is dark matter, and sixty-eight percent is dark energy. In other words, we thought we knew what we call the Universe when, in fact, it is still largely unknown, because we have yet to discover what dark matter and dark energy actually are.

At the LHC, there were hopes that a candidate for dark matter particles could be detected. The existence of these WIMPs (weakly interacting massive particles) is predicted by what is called supersymmetry, but the results have so far been negative. One specific experiment that attempted to detect dark matter used the Large Underground Xenon (LUX) detector at the Sanford Underground Research Facility and involved the participation of around one hundred scientists and engineers from eighteen institutions in the United States, Europe, and, to a lesser degree, other countries. The detector, located 1,510 meters underground in a mine in South Dakota, contains 370 kilos of ultra-pure liquid xenon, and the experiment sought to detect the interaction of those particles with it. The results of that experiment, which took place between October 2014 and May 2016, were also negative.

Supersymmetry and Dark Matter

From a theoretical standpoint, there is a proposed formulation that could include dark matter, that is, the “dark particles” or WIMPs mentioned above. It consists of a special type of symmetry known as “supersymmetry,” whose most salient characteristic is that for each of the known particles there is a corresponding “supersymmetric companion.” Now, this companion must possess a specific property: its spin must differ by 1/2 from that of its known partner. In other words, one will have a spin that corresponds to an integer, while the other’s will correspond to a half-integer; thus, one will be a boson (a particle with integer spin) and the other a fermion (a particle with half-integer spin). In that sense, supersymmetry establishes a symmetry between bosons and fermions and therefore requires that the laws of nature remain the same when bosons are replaced by fermions and vice versa. Supersymmetry was discovered in the early 1970s and was one of the first of a group of theories of other types that raised many hopes for unifying the four interactions (bringing gravitation into the quantum world) and thus moving past the Standard Model. That group of theories is known as string theory.2 A good summary of supersymmetry was offered by David Gross (2011: 163–164), one of the physicists who stands out for his work in this field:

Perhaps the most important question that faces particle physicists, both theorists and experimentalists, is that of supersymmetry. Supersymmetry is a marvelous theoretical concept. It is a natural, and probably unique, extension of the relativistic and general relativistic symmetries of nature. It is also an essential part of string theory; indeed supersymmetry was first discovered in string theory, and then generalized to quantum field theory. […]

In supersymmetric theories, for every particle there is a ‘superpartner’ or a ‘superparticle.’ […] So far, we have observed no superpartners […] But we understand that this is perhaps not surprising. Supersymmetry could be an exact symmetry of the laws of nature, but spontaneously broken in the ground state of the universe. Many symmetries that exist in nature are spontaneously broken. As long as the scale of supersymmetry breaking is high enough, we would not have seen any of these particles yet. If we observe these particles at the new LHC accelerator then, in fact, we will be discovering new quantum dimensions of space and time.[…]

Supersymmetry has many beautiful features. It unifies by means of symmetry principles fermions, quarks, and leptons (which are the constituents of matter), bosons (which are the quanta of force), the photon, the W, the Z, the gluons in QCD, and the graviton.

After offering other examples of the virtues of supersymmetry, Gross refers to dark matter: “Finally, supersymmetric extensions of the standard model contain natural candidates for dark-matter WIMPs. These extensions naturally contain, among the supersymmetric partners of ordinary matter, particles that have all the hypothesized properties of dark matter.”

As Gross pointed out, the experiments at the LHC were a good place to look for those “supersymmetric dark companions,” which could be light enough to be detected by the CERN accelerator. Even then, they would be difficult to detect, because they interact neither through the electromagnetic force (they do not absorb, reflect, or emit light) nor through the strong interaction, and so they hardly interact with “visible particles” at all. Nonetheless, they possess energy and momentum (otherwise, they would be “ghosts” with no physical entity whatsoever), which opens the door to inferring their existence by applying the customary laws of conservation of energy-momentum to what is seen after a collision involving a WIMP. All the same, no evidence of their existence has yet been found at the LHC. In any case, the problem of what dark matter actually is constitutes a magnificent example of the confluence of physics (the physics of elementary particles) with cosmology and astrophysics, yet another indication that these fields are sometimes impossible to separate.

String Theories

The string theories mentioned with regard to supersymmetry appeared before it did. According to string theories, nature’s basic particles are actually unidimensional filaments (extremely thin strings) in space with many more dimensions than the three spatial and one temporal dimension that we are aware of. Rather than saying that they “are” or “consist of” those strings, we should, however, say that they “are manifestations” of the vibrations of those strings. In other words, if our instruments were powerful enough, rather than seeing “points” with certain characteristics we call electron, quark, photon, or neutrino, for example, we would see minute, vibrating strings (whose ends can be open or closed).

The first version of string theory arose in 1968, when Gabriele Veneziano (1968) introduced a string model that appeared to describe the interaction among particles subject to the strong interaction. Veneziano’s model only worked for bosons. In other words, it was a theory of bosonic strings, and it demanded a geometrical framework of twenty-six dimensions. It was Pierre Ramond (1971) who first managed, in the work mentioned in footnote 2, which introduced the idea of supersymmetry, to extend Veneziano’s idea to include “fermionic modes of vibration” that “only” required ten-dimensional spaces. Since then, string (or superstring) theory has developed in numerous directions. Its different versions seem to converge to form what is known as M-theory, which has eleven dimensions.3 In The Universe in a Nutshell, Stephen Hawking (2002: 54–57) observed:

I must say that personally, I have been reluctant to believe in extra dimensions. But as I am a positivist, the question ‘do extra dimensions really exist?’ has no meaning. All one can ask is whether mathematical models with extra dimensions provide a good description of the universe. We do not yet have any observations that require extra dimensions for their explanation. However, there is a possibility we may observe them in the Large Hadron Collider in Geneva. But what has convinced many people, including myself, that one should take models with extra dimensions seriously is that there is a web of unexpected relationships, called dualities, between the models. These dualities show that the models are all essentially equivalent; that is, they are just different aspects of the same underlying theory, which has been given the name M-theory. Not to take this web of dualities as a sign we are on the right track would be a bit like believing that God put fossils into the rocks in order to mislead Darwin about the evolution of life.

Once again we see what high hopes have been placed on the LHC, although, as I already mentioned, they have yet to be satisfied. Of course, this does not mean that some string theory capable of bringing gravity into a quantum context may not actually prove true.4 String theories are certainly enticing enough to engage the greater public, as can be seen in the success of Hawking’s book mentioned above, or of The Elegant Universe (1999), written by Brian Greene, another specialist in this field. There are two clearly distinguished groups within the international community of physicists (and mathematicians). Some think that only a version of string theory could eventually fulfill the long-awaited dream of unifying the four interactions in a great quantum synthesis, thus surpassing the Standard Model and general relativity, and that ways of testing that theory experimentally will be found. Others believe that string theory has received much more attention than it deserves, as it is an as yet unprovable formulation better suited to mathematical settings than to physics. In fact, mathematics has not only contributed strongly to string theories, it has also received a great deal from them: it is hardly by chance that one of the most outstanding string-theory experts, Edward Witten, was awarded the Fields Medal in 1990, an honor considered the mathematics equivalent of the Nobel Prize. As to the future of string theory, it might be best to quote the conclusions of a recent book on the subject by Joseph Conlon (2016: 235–236), a specialist in that field and professor of Theoretical Physics at Oxford University:

What does the future hold for string theory? As the book has described, in 2015 ‘string theory’ exists as a large number of separate, quasi-autonomous communities. These communities work on a variety of topics range[ing] from pure mathematics to phenomenological chasing of data, and they have different styles and use different approaches. They are in all parts of the world. The subject is done in Philadelphia and in Pyongyang, in Israel and in Iran, by people with all kinds of opinions, appearance, and training. What they have in common is that they draw inspiration, ideas, or techniques from parts of string theory.

It is clear that in the short term this situation will continue. Some of these communities will flourish and grow as they are reinvigorated by new results, either experimental or theoretical. Others will shrink as they exhaust the seam they set out to mine. It is beyond my intelligence to say which ideas will suffer which fate—an unexpected experimental result can drain old subjects and create a new community within weeks.

At this point, Conlon pauses to compare string theory’s mathematical dimension to other physics theories, such as quantum field theory or gravitation, pointing out that “although they may be phrased in the language of physics, in style these problems are far closer to problems in mathematics. The questions are not empirical in nature and do not require experiment to answer.” Many—probably the immense majority of physicists—would not agree.

The example drawn from string theory that Conlon next presented was the “AdS/CFT correspondence,” a theoretical formulation published in 1998 by Argentinean physicist Juan Maldacena (1998) that helps, under specific conditions that satisfy what is known as the “holographic principle” (the Universe understood as a sort of holographic projection), to establish a correspondence between certain quantum gravity theories and compatible quantum field theories akin to quantum chromodynamics. (In 2015, Maldacena’s article was the most frequently cited in high-energy physics, with over 10,000 citations.) According to Conlon: “The validity of AdS/CFT correspondence has been checked a thousand times—but these checks are calculational in nature and are not contingent on experiment.” He continues:

What about this world? Is it because of the surprising correctness and coherence of string theories such as AdS/CFT that many people think string theory is also likely to be a true theory of nature? […]

Will we ever actually know whether string theory is physically correct? Do the equations of string theory really hold for this universe at the smallest possible scales?

Everyone who has ever interacted with the subject hopes that string theory may one day move forward into the broad sunlit uplands of science, where conjecture and refutation are batted between theorist and experimentalist as if they were ping-pong balls. This may require advances in theory; it probably requires advances in technology; it certainly requires hard work and imagination.

In sum, the future remains open to the great hope for a grand theory that will unify the description of all interactions while simultaneously allowing advances in the knowledge of matter’s most basic structure.

Entanglement and Cryptography

As we well know, quantum entanglement defies the human imagination. With difficulty, most of us eventually get used to concepts successfully demonstrated by facts, such as indeterminism (Heisenberg’s uncertainty principle of 1927) or the collapse of the wave function (which states, as mentioned above, that we create reality when we observe it; until then, that reality is no more than the set of all possible situations), but it turns out that there are even more. Another of these counterintuitive consequences of quantum physics is entanglement, a concept and term (Verschränkung in German) introduced by Erwin Schrödinger in 1935 and also suggested in the famous article that Einstein published with Boris Podolsky and Nathan Rosen that same year. Entanglement is the idea that two parts of a quantum system are in instant “communication” so that actions affecting one will simultaneously affect the other, no matter how far apart. In a letter to Max Born in 1947, Einstein called it “phantasmagorical action at a distance [spukhafte Fernwirkung].”

Now, quantum entanglement exists, and over the last decade it has been repeatedly confirmed. One particularly solid demonstration was provided in 2015 by groups from Delft University, the United States National Institute of Standards and Technology, and Vienna University. In an article from the Vienna group (Herbst, Scheidl, Fink, Handsteiner, Wittmann, Ursin, and Zeilinger, 2015), the authors demonstrated the entanglement of two previously independent photons 143 kilometers apart, which was the distance between their detectors in Tenerife and La Palma.

In a world like ours, in which communications via Internet and other media penetrate and condition all areas of society, entanglement constitutes a magnificent instrument for making those transmissions secure, thanks to what is known as “quantum cryptography.” The basis for this type of cryptography is a quantum system consisting, for example, of two photons, each of which is sent to a different receiver. Due to entanglement, if one of those receivers changes something, it will instantly affect the other, even when they are not linked in any way. What is particularly relevant for transmission security is the fact that if someone tried to intervene, he or she would have to make some sort of measurement, and that would destroy the entanglement, causing detectable anomalies in the system.

Strictly speaking, quantum information exchange is based on what is known as QKD, that is, “Quantum Key Distribution.”5 What is important about this mechanism is the quantum key that the entangled receiver obtains and uses to decipher the message. The two parts of the system share a secret key that they then use to encode and decode messages. Traditional encryption methods are based on algorithms associated with complex mathematical operations that are difficult to decipher, but whose messages are not impossible to intercept. As I have pointed out, with quantum cryptography such interception cannot take place without being detected.
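The logic just described can be made concrete with a toy simulation. What follows is only a sketch under strong assumptions: the photons, detectors, and channel are ideal, the eavesdropper uses a simple “intercept and resend” strategy, and the names `simulate_qkd` and `xor_bits` are hypothetical, chosen for illustration rather than taken from any real QKD system. It shows two things: the receivers end up with identical keys when nobody interferes, and an intruder’s measurements leave a visible error rate in the key.

```python
import random


def simulate_qkd(n_pairs, eavesdrop, seed=0):
    """Toy model of entanglement-based key distribution (idealized).

    Each receiver measures its photon in a randomly chosen basis ('+' or 'x').
    Only rounds in which both chose the same basis contribute to the key.
    Returns (key_a, key_b, error_rate).
    """
    rng = random.Random(seed)
    key_a, key_b = [], []
    for _ in range(n_pairs):
        basis_a = rng.choice("+x")
        basis_b = rng.choice("+x")
        # First receiver measures: the outcome is random, and the partner
        # photon is now described by the same basis and the same bit.
        bit_a = rng.randint(0, 1)
        state_basis, state_bit = basis_a, bit_a
        if eavesdrop:
            # Assumed attack: the intruder measures in a random basis and
            # resends. A wrong basis randomizes the bit being carried.
            basis_e = rng.choice("+x")
            if basis_e != state_basis:
                state_bit = rng.randint(0, 1)
            state_basis = basis_e
        # Second receiver measures: same basis as the state gives the
        # carried bit, a different basis gives a random result.
        bit_b = state_bit if basis_b == state_basis else rng.randint(0, 1)
        if basis_a == basis_b:
            key_a.append(bit_a)
            key_b.append(bit_b)
    if not key_a:
        return key_a, key_b, 0.0
    errors = sum(a != b for a, b in zip(key_a, key_b))
    return key_a, key_b, errors / len(key_a)


def xor_bits(message_bits, key_bits):
    """Encode (or decode) a message by combining it bit by bit with the key."""
    return [m ^ k for m, k in zip(message_bits, key_bits)]


if __name__ == "__main__":
    key_a, key_b, clean_rate = simulate_qkd(2000, eavesdrop=False)
    _, _, tapped_rate = simulate_qkd(2000, eavesdrop=True)
    print("error rate, undisturbed:", clean_rate)
    print("error rate, with intruder:", tapped_rate)
    message = [1, 0, 1, 1, 0, 0, 1, 0]
    print(xor_bits(xor_bits(message, key_a), key_b) == message)
```

In this model the undisturbed keys agree exactly, while the intruder corrupts about a quarter of the key bits on average. By comparing a sample of their keys, the two receivers would notice the anomaly and discard the key before using it.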

Quantum entanglement augurs the possible creation of a global “quantum Internet.” It is therefore not surprising that recently created companies, such as the Swiss ID Quantique (founded in 2001 as a spinoff of Geneva University’s Applied Physics Group), the US MagiQ, or Australia’s QuintessenceLabs have taken considerable interest in quantum cryptography, as have well-established firms such as HP, IBM, Mitsubishi, NEC, NTT, and Toshiba.

One problem with quantum cryptography is that when classic communications channels, such as optic fiber, are used, the signal is degraded because the photons are absorbed or diffused by the fiber’s molecules (the limit for sending quantum cryptographic messages is roughly the span of one or two cities). Classic transmission also breaks down over distances, but this can be remedied with relays. That solution does not exist for quantum transmission, however, because, as mentioned above, any intermediate interaction destroys the message’s unity. The best possible “cable” for quantum communication turns out to be the vacuum of space, and a significant advance was recently made in that sense. A team led by Jian-Wei Pan, at the University of Science and Technology of China, in Hefei, in collaboration with Anton Zeilinger (one of the greatest specialists in quantum communications and computation) at Vienna University, managed to send quantum messages back and forth between Xinglong and Graz, 7,600 kilometers apart, using China’s Micius satellite (placed in orbit 500 kilometers above Earth in August 2016) as an intermediary.6 To broaden its range of action, they used fiber-optic systems to link Graz to Vienna and Xinglong to Beijing. That way, they held a secure seventy-five minute videoconference between the Chinese and Austrian Academies of Sciences using two gigabytes of data, an amount similar to what cellphones required in the 1970s. Such achievements foreshadow the establishment of satellite networks that will allow secure communications of all kinds (telephone calls, e-mail, faxes) in a new world that will later include another instrument intimately related to the quantum phenomena we have already discussed: quantum computing, in which quantum superposition plays a central role.

While classic computing stores information in bits (0, 1), quantum computation is based on qubits (quantum bits). Many physical systems can act as qubits, including photons, electrons, atoms, and Cooper pairs. Contemplated in terms of photons, the principle of quantum superposition means that these can be polarized either horizontally or vertically, but also in combinations lying between those two states. Consequently, you have a greater number of units for processing or storage in a computing machine, and you can operate in parallel: every bit has to be either 1 or 0, while a qubit can be 1 and 0 at the same time, which allows multiple operations to be carried out at once. Obviously, the more qubits a quantum computer employs, the greater its computing capacity will be. The number of qubits needed to surpass classical computers is believed to be fifty, and IBM recently announced that it had passed that threshold, although only for a few nanoseconds. It turns out to be very difficult indeed to keep the qubits entangled (that is, unperturbed) for the necessary period of time, because subatomic particles are inherently unstable. Therefore, to avoid what is called “decoherence,” one of the main areas of research in quantum computing involves finding ways to minimize the perturbing effects of light, sound, movement, and temperature. Many quantum computers are being constructed in extremely low-temperature vacuum chambers for exactly that reason. Despite these difficulties, industry and governments (especially China, at present) are making significant efforts to advance in the field of quantum computing. Google and NASA, for example, are using a quantum computer built by the Canadian firm D-Wave Systems, Inc., the first company to sell this type of machine, which is said to be capable of carrying out certain types of operations 3,600 times faster than the world’s fastest digital supercomputer.
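A few lines of code may help to fix the idea of a qubit as a combination of two states. This is a minimal sketch, not a description of how any real quantum computer is programmed: the function names are hypothetical, the photon is assumed to be ideally polarized at an angle theta to the horizontal, and the last function simply counts how many numbers (amplitudes) a classical machine would need to describe a register of n qubits.

```python
import math


def polarization_state(theta):
    """Amplitudes (horizontal, vertical) of a photon polarized at angle theta."""
    return (math.cos(theta), math.sin(theta))


def measurement_probabilities(state):
    """Probability of finding the photon horizontally or vertically polarized."""
    horizontal, vertical = state
    return (abs(horizontal) ** 2, abs(vertical) ** 2)


def amplitudes_needed(n_qubits):
    """Number of amplitudes required to describe n qubits classically."""
    return 2 ** n_qubits


if __name__ == "__main__":
    # A photon polarized at 45 degrees is an equal combination of both states.
    diagonal = polarization_state(math.pi / 4)
    print(measurement_probabilities(diagonal))
    # Fifty qubits already call for 2**50 amplitudes.
    print(amplitudes_needed(50))  # 1125899906842624
```

The second figure, more than a thousand trillion amplitudes, gives a sense of why a threshold of around fifty qubits is regarded as the point at which classical machines struggle to keep up.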

Another possibility associated with quantum entanglement is teleportation (or “teletransportation”), which Ignacio Cirac (2011: 478) defined as “the transfer of an intact quantum state from one place to another, carried out by a sender who knows neither the state to be teletransported nor the location of the receiver to whom it must be sent.” Teleportation experiments have already been carried out with photons, ions, and atoms, but there is still a long way to go.

Physics in an Interdisciplinary World

In previous sections, I have focused on developments in what we could call the “most fundamental physics,” but this science is not at all limited to the study of nature’s “final” components, the unification of forces, or the application of the most basic principles of quantum physics. Physics is made up of a broad and varied group of fields (condensed matter, low temperatures, nuclear physics, optics, electromagnetism, fluids, thermodynamics, and so on), and progress has been made in all of them over the last ten years. Moreover, those advances will undoubtedly continue in the future. It would be impossible to mention them all, but I do want to refer to a field to which physics has much to contribute: interdisciplinarity.

We should keep in mind that nature is unified and has no borders; practical necessity has led humans to establish them, creating disciplines that we call physics, chemistry, biology, mathematics, geology, and so on. However, as our knowledge of nature grows, it becomes increasingly necessary to go beyond these borders and unite disciplines. It is essential to form groups of specialists, not necessarily large ones, drawn from different scientific and technical fields but possessed of sufficient general knowledge to be able to understand each other and collaborate to solve new problems whose very nature requires such collaboration. Physics must be a part of such groups. We find examples of interdisciplinarity in almost all settings, one of which would be the processes underlying atmospheric phenomena. These involve all sorts of sciences and subjects: energy exchanges and temperature gradients, radiation received from the Sun, chemical reactions, the composition of the atmosphere, the motion of atmospheric and ocean currents, the biology that explains the behavior and reactions of animal and plant species, industrial processes, social modes and mechanisms of transportation, and so on. Architecture and urban studies offer similar evidence. To deal with climate change, energy limitations, atmospheric pollution, and agglomeration in gigantic cities, it is imperative to deepen cooperation among architecture, urban studies, science, and technology without overlooking the need to draw on other disciplines as well, including psychology and sociology, which are linked to the study of human character. We must construct buildings that minimize energy loss and attempt to approach energy sufficiency, that is, sustainability, which has become a catchword in recent years. Fortunately, along with materials with new thermal and acoustic properties, we have elements developed by science and technology, such as solar panels, as well as the possibility of recycling organic waste.

One particularly important example in which physics is clearly involved is the “Brain Activity Map Project,” which the then president of the United States, Barack Obama, publicly announced on April 2, 2013. In more than one way, this research project is a successor to the great Human Genome Project, which managed to map the genes contained in our chromosomes. It seeks to study neuron signals and to determine how their flow through neural networks turns into thoughts, feelings, and actions. There can be little doubt as to the importance of this project, which faces one of the greatest challenges of contemporary science: obtaining a global understanding of the human brain, including its self-awareness. In his presentation, Obama mentioned his hope that this project would also pave the way for the development of technologies essential to combating diseases such as Alzheimer’s and Parkinson’s, as well as new therapies for a variety of mental illnesses, and advances in the field of artificial intelligence.

It is enough to read the title of the article in which a group of scientists presented and defended this project to recognize its interdisciplinary nature and the presence of physics therein. Published in the journal Neuron in 2012, it was titled “The Brain Activity Map Project and the Challenge of Functional Connectomics” and was signed by six scientists: Paul Alivisatos, Miyoung Chun, George Church, Ralph Greenspan, Michael Roukes, and Rafael Yuste (2012).

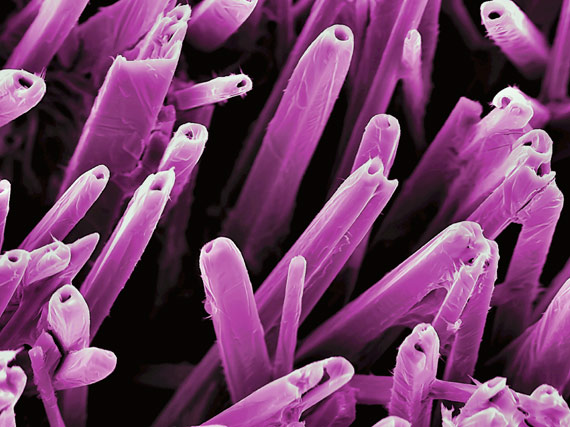

Equally worthy of note are nanoscience and nanotechnology. The atomic world is one of the meeting places for the natural sciences and technologies based on them. In the final analysis, atoms and the particles they are made of (protons, neutrons, electrons, quarks, and so on) are the “world’s building blocks.” Until relatively recently, there was no research field in which that shared base showed so many potential applications in different disciplines as it does in nanotechnology and nanoscience. Those fields of research and development owe their name to a measurement of length, the nanometer (nm), which is one billionth of a meter. Nanotechnoscience includes any branch of science or technology that researches or uses our capacity to control and manipulate matter on scales between 1 and 100 nm. Advances in the field of nanotechnoscience have made it possible to develop nanomaterials and nanodevices that are already being used in a variety of settings. It is, for example, possible to detect and locate cancerous tumors in the body using a solution of 35 nm nanoparticles of gold, as cancerous cells possess a protein that reacts to the antibodies that adhere to these nanoparticles, making it possible to locate malignant cells. In fact, medicine is a particularly appropriate field for nanotechnoscience, and this has given rise to nanomedicine. The human fondness for compartmentalization has led this field to frequently be divided into three large areas: nanodiagnostics (the development of imaging and analysis techniques to detect illnesses in their initial stages), nanotherapy (the search for molecular-level therapies that act directly on affected cells or pathogenic areas), and regenerative medicine (the controlled growth of artificial tissue and organs).

It is always difficult and risky to predict the future, but I have no doubt that this future will involve all sorts of developments that we would currently consider unimaginable surprises. One of these may well be a possibility that Freeman Dyson (2011), who always enjoys predictions, suggested not long ago. He calls it “radioneurology,” and the idea is that, as our knowledge of brain functions expands, we may be able to use millions of microscopic sensors to observe the exchanges among neurons that lead to thoughts, feelings, and so on, and convert them into electromagnetic signals that could be received by another brain outfitted with similar sensors. That second brain would then use them to regenerate the emitting brain’s thoughts. Would, or could, that become a type of radiotelepathy?

Epilogue

We live between the past and the future. The present is constantly slipping between our fingers like a fading shadow. The past offers us memories and acquired knowledge, tested or yet to be tested, a priceless treasure that paves our way forward. Of course, we do not really know where that way will lead us, what new characteristics will appear, or whether it will be an easy voyage. What is certain is that the future will be different and very interesting. And physics, like all of the other sciences, will play an important and fascinating role in shaping that future.
