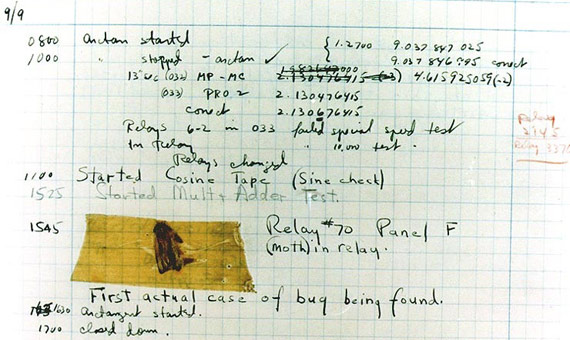

On September 9, 1947, the Mark II computer at Harvard University (USA) broke down. Upon inspection, engineers found the cause: a moth had entered the machine, perhaps attracted by the light and heat, and had shorted out relay number 70 of Panel F. The technicians recorded the incident in their logbook with an entry at 15:45, taping the insect to the page and noting: “First actual case of bug being found.” Today the sheet is kept at the Smithsonian Institution’s National Museum of American History in Washington.

The story is so popular that, like any story often told, it has become distorted over the years. Contrary to the version circulating today, the episode did not give rise to the term ‘bug’ for computer errors, nor to the verb ‘debugging’ for their correction. Well before this incident, these words were often used to refer to the malfunctions of machines, as evidenced by the notes of the inventor Thomas Edison in the 1870s. In fact, sources from the Institute of Electrical and Electronics Engineers (IEEE) have attributed the coining of these terms to Edison himself, and in the era of the Mark II the computer engineers at Harvard used them regularly.

One thing can be affirmed, however: the incident served to popularize the use of “bug” as applied to computers, which nowadays is the main use of the term. And there is also no doubt about who was responsible for this: Grace Hopper (née Murray), a mathematician born in New York in 1906, an officer in the US Navy (she would reach the rank of rear admiral) and a pioneer of information technology.

Hopper was the programmer of the Mark II and led the team that found the moth, although she was not present during the episode itself. She used to draw caricatures of her “bugs”, and her retelling of the anecdote about the insect resonated so widely that the term has become associated with her. As Kurt Beyer, a professor at the Haas School of Business of the University of California, Berkeley, and author of the book Grace Hopper and the Invention of the Information Age, summarized for OpenMind, “Hopper defined the language and the terms around the computing profession.”

An interpreter to communicate with computers

One of this pioneer’s major contributions addressed the essential difference between the first computers and those of today. In those days, a mathematician was needed to operate a computer, because the machines only understood numerical codes. Grace Hopper developed the first compiler, a program that translates a programming language into code that the machine understands. The objective was, in her own words, “that more people should be able to use the computer and that they should be able to talk to it in plain English.” Hopper’s work was instrumental in the development of the COBOL computer language, which was created in 1959 and is still widely used in business systems today.

Hopper began her career working with the predecessor of the Mark II. Conceived in 1937 by Howard Aiken and built by IBM, the Mark I replaced the decimal system of the primitive calculators with the binary system. Its 17-metre length and 2.5-metre height contained 3,300 cogs, 1,400 switches and 800 kilometres of electric cable, all to calculate five times faster than a human. Its successor, the Mark II, replaced the cogs with 17,000 electrical relays. According to Michael R. Williams, professor emeritus at the University of Calgary (Canada) and author of A History of Computing Technology (IEEE Computer Society Press, 1997), “electrical relays are still slow, but it paved the way for other advances.” The Mark II was able to multiply in 0.75 seconds, eight times faster than its predecessor, but it still needed several seconds to perform more complex operations such as square roots.

From mechanical to electronic computers

Despite the innovations that they introduced, the Mark I and II were still electromechanical computers, and they did not define the way forward. “It was more like a dead end than any real advance,” Williams tells OpenMind. The first electronic machines appeared almost simultaneously: ENIAC in the US and Colossus in Britain. “The Colossus was an electronic special-purpose computer, designed specifically to decrypt Nazi radio teleprinter messages during World War II,” says computer historian David Greelish, author of Classic Computing: The Complete Historically Brewed.

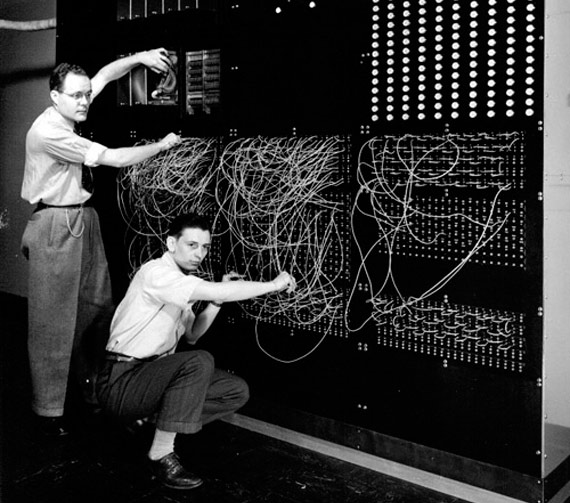

At the same time, at the Moore School of the University of Pennsylvania, J. Presper Eckert and John Mauchly built the ENIAC for the US Army. This was the first computer without mechanical parts, composed of 18,000 vacuum tubes in a total area of 1,500 square metres and able to carry out 5,000 additions per second. But according to Greelish, both the ENIAC and the Colossus were still “much closer to what we would think of as a calculator today.”

The modern computer was born

The real revolution that the ENIAC ignited was the idea of storing the program in memory. To program the original machine, it was necessary to change cables and switches. After the Second World War, when the computer stopped being a military secret, its creators held a course to which they invited senior engineers and scientists. During the event, Mauchly and Eckert “decided that the ENIAC was too cumbersome to easily change the wiring for a different problem, so they proposed that the control of the machine be stored in a memory,” says Williams. This advance was the “turning point” that “quickly spread the idea of the modern computer,” he adds.

Despite the dramatic evolution in computing over the last three quarters of a century, one thing has not changed: although there is no room for a moth in today’s computers, bugs have not stopped tormenting engineers since Edison’s time. However, this situation may not last forever; intelligent systems are already being designed that can identify their own bugs and learn how to correct them.

