The fact that some people remember the past as a series of episodes full of details (episodic memory), while others store in their brains the meaning of events (semantic memory), has a lot to do with the configuration of the connections in the brain, according to a recent study published in the journal Cortex. Neuroscience is deciphering the sophisticated mechanisms of human memory to explain how we file and remember information.

– Memory’s unreliable.

– Oh please!

– No, no, really! Memory’s not perfect. (…) Memory can change the shape of a room. It can change the color of a car and memories can be distorted. Memories are just an interpretation. They’re not a record. They’re irrelevant if you have the facts.

This is the conversation between Leonard and Teddy in the key scene of the movie Memento, one of the movies that best reflects the neuroscientific knowledge about memory. Its main character suffers from anterograde amnesia: although he can still remember new words, he is unable to remember the recent past.

What the director and screenwriter of the film, Christopher Nolan, did not know is that not everybody retrieves the information stored about their past in the same way. Some people keep their autobiography in their brains as visual scenes (episodic memory) decorated with great detail, while others archive events as a list of facts and words that describe what happened (semantic memory). In the first case memories would be recorded as a movie, while in the second they would resemble a history book (but without pictures). The most interesting thing is that these two types of memory correspond to brain structures that are easily identifiable in a scanner, as demonstrated earlier this year by Signy Sheldon and her colleagues at the Canadian universities of McGill and Toronto.

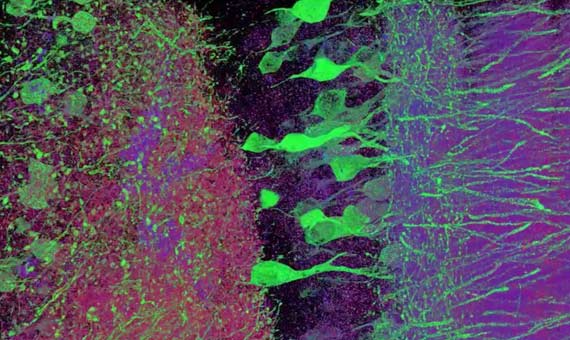

Specifically, “episodic memorizers” have more connections in the back regions of the brain where visual information and the perceptions of the senses are processed (occipital and parietal cortex), damaged in the case of Leonard. In contrast, the “semantic memorizers” show a denser neural network in the lower and middle part of the prefrontal region of the brain, predominantly conceptual and dedicated to organizing and prioritizing information.

This is not the only thing we have recently learned about how the human brain stores information. In addition to there being different types of memory, there are also different resolutions. At least two versions of each event are stored, a coarser one and a finer one, in different areas of the hippocampus, the seahorse-shaped area essential for memory and learning. And, as the occasion demands, from the same event we can recall the more general rough data or remember even the tiniest detail.

Another form of memory that has challenged neuroscientists for years is working memory, which is “the small amount of information that we can maintain temporarily in our memory, for tasks that we carry out at a given moment, in contrast to the huge amount of data archived in our permanent memory that we access from time to time,” as defined for OpenMind by Nelson Cowan, a researcher at the University of Missouri-Columbia (USA) and one of the world’s leading experts on this form of memory. “Among other things, we need our working memory to understand what we see, hear or read, as well as to solve problems,” he explains.

One of the most interesting aspects of memory that Cowan investigates is the relationship between working memory, attention and concentration. At the moment, we know that there are areas of the brain, such as the intraparietal sulcus, that deal with keeping certain information in the focus of attention, and that work as arrows “that point toward areas that hold visual or verbal information for a while,” says Cowan. What is less clear is to what extent we can still remember information to which we do not pay attention and of which we are not aware, one of the questions that the researcher wishes to clarify in the coming years.

If he had to highlight one challenge for neuroscientists in the study of memory for the next decade, Cowan would choose knowing what the limits of memory really are and how to overcome them. In 2001, the neuroscientist published an article in which he concluded that the basic temporary retention capacity of memory is 3 or 4 items for an adult and 2 or 3 for a child. However, it is also true that “humans manage to find ways to go beyond that limit using knowledge and strategies to combine information in specialized areas that make the human mind become more powerful and flexible,” adds Cowan.

What does seem clear at this point is the total capacity of long-term memory, which would be in the range of petabytes, roughly equivalent to the capacity of the World Wide Web, according to a study by the Salk Institute. This is ten times more information than previously thought. What is even more interesting is the discovery that, every 2 to 20 minutes, the synapses between neurons grow and shrink, switching among 26 different sizes depending on the signals they receive. That makes them extremely effective from a computational perspective, and very thrifty from an energy point of view. “Our discovery suggests that hidden beneath the apparent chaos and disorder, there is a surprising precision in the size and shape of neurons that we were completely ignorant of,” says Terry Sejnowski, co-author of the study. “The tricks of the brain hide the keys that we need to develop more efficient computers.”
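To get a feel for what 26 distinguishable synapse sizes could mean in computing terms, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that every size is equally likely and perfectly distinguishable, so that the information one synapse can hold is the base-2 logarithm of the number of sizes. The function name `bits_per_synapse` is our own, and this is not presented as the study's method.

```python
import math


def bits_per_synapse(distinct_sizes: int) -> float:
    """Information (in bits) carried by one item that can take
    `distinct_sizes` equally likely, distinguishable states.
    Assumption for illustration only: all sizes are equally likely."""
    return math.log2(distinct_sizes)


# 26 sizes, the figure quoted in the article
bits = bits_per_synapse(26)
print(f"{bits:.2f} bits per synapse")  # prints "4.70 bits per synapse"
```

Under that assumption, a synapse with 26 sizes stores a little under 5 bits, compared with 1 bit for a simple on/off switch.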

And with so much information, what dictates which memories take priority? Charan Ranganath and colleagues at the Center for Neuroscience at the University of California demonstrated using MRI that memory learns and prioritizes the retrieval of information that is related to some reward, and that is therefore expected to be “useful to make future decisions that provide new rewards.”
