Just as Vannevar Bush foresaw multimedia, the personal computer, and so much more in his seminal 1945 article,(1) Mark Weiser predicted the Internet of Things in a similarly seminal article in 1991.(2) Bush’s article, rooted in extrapolations of World War II technology, was mainly speculative. Weiser, by contrast, illustrated the future he predicted with actual working systems that he and his colleagues had developed (and were already living with) at Xerox PARC. Coming from an HCI perspective, Weiser and his team looked at how people would interact with networked computation distributed into the environments and artifacts around them. The “Internet of Things” is a term that gestated much more recently, in industrial and academic quarters enamored with evolving RFID technology, and it has now upstaged Weiser’s vision of “ubiquitous computing.” Weiser’s vision nonetheless resonated with many of us who were then working in the areas of computer science concerned with human interaction. Even within our community, and before the IoT moniker dominated, this vision went by many names and flavors as factions tried to establish their own brand. We at the Media Lab called it “Things That Think,” my colleagues at MIT’s Lab for Computer Science labelled it “Project Oxygen,” and others called it “Pervasive Computing,” “Ambient Computing,” “Invisible Computing,” “Disappearing Computer,” etc. All of it, however, was still rooted in Mark’s early vision of what we often abbreviate as “UbiComp.”

I first came to the MIT Media Lab in 1994 as a high-energy physicist working on elementary particle detectors (which can be considered extreme “sensors”) for the then-recently cancelled Superconducting Supercollider and the nascent Large Hadron Collider (LHC) at CERN. I had been facile with electronics since childhood and had been designing and building large music synthesizers since the early 1970s.(3) Accordingly, my earliest Media Lab research involved incorporating new sensor technologies into novel human-computer interface devices, many of which were used for musical control.(4) As wireless technologies became more easily embeddable, my research group’s work evolved by the late 1990s into various aspects of wireless sensors and sensor networks, again with a human-centric focus.(5) We now see most of our projects as probing ways in which human perception and intent will seamlessly connect to the electronic “nervous system” that increasingly surrounds us. This article traces this theme from a sensor perspective. It uses projects from my research group to illustrate how revolutions in sensing capability are transforming the many ways in which humans connect with their environs.

Inertial Sensors and Smart Garments

Humans sense inertial and gravitational reaction via proprioception at the limbs and vestibular sensing in the inner ear. Inertial sensors are the electronic equivalent. They were once used mainly for high-end applications like aircraft and spacecraft navigation,(6) but they are now commodity items. MEMS (Micro Electro-Mechanical Systems) accelerometers date back to academic prototypes in 1989. Widespread commercial chips followed from Analog Devices in the 1990s,(7) exploiting that company’s proprietary technique of fabricating both the MEMS structure and the electronics on the same chip. These accelerometers are still far too coarse for navigation beyond basic pedometry(8) or step-by-step dead reckoning.(9) They nonetheless appear in many simple consumer products (e.g., toys, phones, and exercise dosimeters and trackers), where they are mainly used to infer activity or to drive tilt-based interfaces, like flipping a display. Indeed, the accelerometer is perhaps now the most generically used sensor on the market. It will be embedded into nearly every device that is subject to motion or vibration, even if only to wake up more elaborate sensors at very low power (an area we explored very early on; see, for example,(10), (11)). MEMS-based gyros have advanced well beyond the original devices made by Draper Lab(12) back in the 1990s, and they have also become established. Their higher cost and power drain, however, have so far limited their market penetration. Because they need a driven internal resonator, they have not pushed below the order of 1 mA of current draw. They are also harder to duty cycle efficiently, as they take significant time to start up and stabilize.(13) Nonetheless, gyros are becoming common in smartphones, AR headsets, cameras, and even watches and high-end wristbands. There they serve gesture detection, rotation sensing, motion compensation, activity detection, augmented reality, and so on. They are generally paired with magnetometers, which provide an absolute heading reference from Earth’s magnetic field.
Triaxial accelerometers are now the default, and triaxial gyros are also in production. Since 2010, we have seen commercial products that combine both, providing an entire 6-axis inertial measurement unit on a single chip. Some devices add a 3-axis magnetometer as well (e.g., starting with the Invensense MPU-6000/6050, and continuing with more recent devices from Invensense, ST Microelectronics, Honeywell, etc.).(14)
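Tilt-based interfaces work because a device at rest senses only gravity, so the direction of the measured acceleration vector reveals how the device is oriented. The Python sketch below is a minimal illustration under stated assumptions, not any vendor’s algorithm. It assumes an axis convention in which x points to the right of the screen, y points up the screen, and z points out of the display, with an upright device reading about +1 g on y. Which rotation counts as “90 degrees” depends on that assumed convention. The function names and orientation labels are hypothetical.

```python
import math

def tilt_from_flat_deg(ax: float, ay: float, az: float) -> float:
    """Angle between the device's z axis and vertical (0 = lying face up, 180 = face down)."""
    g = math.sqrt(ax * ax + ay * ay + az * az)
    if g == 0.0:
        raise ValueError("zero-magnitude reading (e.g., free fall): tilt is undefined")
    cos_angle = max(-1.0, min(1.0, az / g))  # clamp rounding error before acos
    return math.degrees(math.acos(cos_angle))

def screen_rotation_deg(ax: float, ay: float) -> float:
    """Direction of gravity's projection onto the screen plane (0 = upright portrait)."""
    return math.degrees(math.atan2(ax, ay))

def screen_orientation(ax: float, ay: float) -> str:
    """Snap the in-plane rotation to the nearest of four 90-degree screen orientations."""
    angle = screen_rotation_deg(ax, ay) % 360.0
    sector = int(((angle + 45.0) % 360.0) // 90.0)
    return ("portrait", "rotated_90", "portrait_upside_down", "rotated_270")[sector]

# Readings in units of g from a hypothetical device held upright and turned slightly.
print(screen_orientation(0.3, 0.9))              # portrait
print(round(tilt_from_flat_deg(0.0, 0.0, 1.0)))  # 0
```

A production implementation would typically add hysteresis. It would also ignore the in-plane angle when the device lies nearly flat, because the projection of gravity onto the screen then becomes tiny and noisy.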

The MIT Media Lab has been a pioneer in applying inertial sensing to user interfaces and wearable sensing,(15) and many of these ideas have gestated in my group going back twenty years.(16) Starting a decade ago, and building on even older projects that explored wearable wireless sensors for dancers,(17) my team has used wearable inertial sensors to measure biomechanical parameters of professional baseball players, in collaboration with physicians from the Massachusetts General Hospital.(18) Athletes are capable of enormous ranges of motion. We therefore need to measure peak activity of up to 120 G and 13,000°/s while still retaining significant resolution within the 10 G, 300°/s range of normal motion. Ideally this would call for an IMU with logarithmic sensitivity. As such devices are not yet available, we have integrated dual-range inertial components onto our device and mathematically blend both signals. We use the radio connection primarily to synchronize our devices to better than our 1 ms sampling interval. Data are written continuously to removable flash memory, allowing us to gather data for an entire day of measurement and analyze it to determine descriptive and predictive features of athletic performance.(19) A myriad of commercial products are now appearing for collecting athletic data in sports ranging from baseball to tennis.(20) Even the amateur athlete of the future will be instrumented with wearables that aid in automatic, self-directed, or augmented coaching.
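The text does not spell out how the two ranges are blended, so the following Python is only one plausible sketch and is not our actual method. It crossfades each axis from the fine, low-range sensor to the coarse, high-range sensor as the low-range reading approaches saturation. It assumes both readings are already calibrated to the same units and time-aligned. The 80% and 95% crossover fractions and the 16 g full scale used in the example are hypothetical.

```python
def blend_dual_range(low: float, high: float, low_full_scale: float,
                     start_frac: float = 0.8, end_frac: float = 0.95) -> float:
    """Blend one axis of a fine low-range reading with a coarse high-range reading.

    Below start_frac * full scale the low-range sensor is trusted completely.
    Above end_frac * full scale it is treated as saturated and the high-range
    sensor is used. In between, the weight ramps linearly so the output has no step.
    """
    magnitude = abs(low)
    start = start_frac * low_full_scale
    end = end_frac * low_full_scale
    if magnitude <= start:
        w = 1.0
    elif magnitude >= end:
        w = 0.0
    else:
        w = (end - magnitude) / (end - start)
    return w * low + (1.0 - w) * high

def blend_stream(low_samples, high_samples, low_full_scale):
    """Apply the blend sample by sample to two synchronized streams."""
    return [blend_dual_range(lo, hi, low_full_scale)
            for lo, hi in zip(low_samples, high_samples)]

# Hypothetical 16 g low-range accelerometer paired with a high-range part:
print(blend_stream([1.0, 16.0], [1.1, 30.0], 16.0))  # [1.0, 30.0]
```

A linear crossfade is the simplest choice. A more careful design might instead weight each sensor by its noise variance across the whole overlapping range.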

Accelerometer power has also dropped enormously. Modern capacitive 3-axis accelerometers draw well under a milliamp, and special-purpose accelerometers (e.g., devices originally designed for implantables(21)) pull under a microamp. Passive piezoelectric accelerometers have also found application in UbiComp venues.(22)

Sensors of various sorts have also crept into fabric and clothing. Health care and exercise monitoring have been the main beacons calling researchers to these shores.(23) Beyond those applications, fabric-based strain gauges, bend sensors, pressure sensors, bioelectric electrodes, capacitive touch sensors, and even RFID antennae have been developed and have become increasingly reliable and robust,(24) making clothing a serious user interface endeavor. Recent high-profile projects such as Google’s Project Jacquard,(25) led technically by Nan-Wei Gong, an alum of my research team, boldly indicate where this is heading. Smart clothing, and weaving as a means of electronic fabrication, will move beyond narrow boutique niches into the mainstream as broad applications emerge, perhaps centered on new kinds of interaction.(26) Researchers worldwide, such as my MIT colleague Yoel Fink(27) and others, are rethinking the nature of “thread” as a silicon fiber upon which microelectronics are grown. Myriad hurdles remain in robustness, production, and so on. Acknowledging them, the research community is mobilizing(28) to transform these and other ideas around flexible electronics into radically new functional substrates. Such substrates can impact medicine, fashion, and apparel (from recreation to spacesuits and military or hazardous environments), and bring electronics into all things stretchable or malleable.

Going beyond wearable systems are electronics that are attached directly to, or even painted onto, the skin. A recent postdoc in my group, Katia Vega, has pioneered this in the form of what she calls “Beauty Technology.”(29) She and her colleagues use functional makeup that incorporates conductors, sensors, and illuminating or color-changing actuators.(30) With it, they have made skin interfaces that react to facial or tactile gestures by sending wireless messages, illuminating, or changing apparent skin color with electroactive rouge. Katia has recently explored medical implementations, such as tattoos that change appearance in response to blood-borne indicators.(31)

We are living in an era that has started to mine the wrist technically, as seen in the now ubiquitous smartwatch. These devices, however, are generally touchscreen peripherals to the smartphone, or improved versions of the activity monitors that have been on the market since 2009. I believe that the wrist will become key to the user interface of the future, but not as implemented in today’s smartwatches. User interfaces will exploit wrist-worn IMUs and precise indoor location to enable pointing gestures.(32) Finger gestures will remain important for communication, as we have so much sensory-motor capability in our fingers. Rather than using touchscreens, however, we will track finger gestures with sensors embedded in smart wristbands while our hands rest at our sides. In recent years, my team has developed several early working prototypes that do this. One uses passive RFID sensors in rings worn at the upper joint of the fingers and interrogated by a wrist-worn reader.(33) Another uses a short-range 3D camera looking across the palm from the wrist to the fingers.(34) A third uses a pressure-imaging wristband that senses tendon displacement.(35) My student Artem Dementyev and his collaborator Cindy Kao have also built a wireless touchpad into a fake fingernail, complete with a battery that lasts a few hours of continuous use.(36) This enables digital interaction at the extremes of our digits, so to speak. Hence, I envision the commuter of the future not staring down at a smartphone in a crowded self-driving bus. Instead, that commuter will look straight ahead into a head-mounted wearable and nudge heavily context-leveraged communication with finger movements, hand resting innocuously at the side.

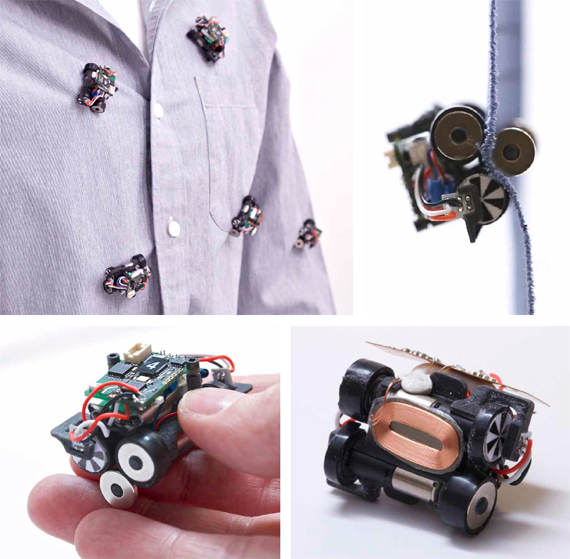

My former student Mat Laibowitz and I introduced the concept of “Parasitic Mobility” over a decade ago.(37) We proposed that low-power sensor nodes could harvest mobility, not just energy, by hopping on and off moving agents in their environment. In this era of drones deployed from delivery vans, such a concept is now far from radical. Our examples used people as the mobile agents. The nodes were little robots, inspired by ticks, fleas, and similar creatures in the natural world, that could choose to be “worn” by attaching in various ways to their hosts and then detach at a location of their choice. My current student Artem Dementyev, together with Sean Follmer at Stanford and other collaborators, has recently pioneered a different approach to wearable robots in their “Rovables.”(38) These are very small (e.g., 2-cm) robots that navigate across a user’s clothing, choosing the best location to perform a task, ranging from a medical measurement to a dynamic display. This project demonstrates an extreme form of close-proximity human-robot collaboration that could have profound implications.

Everywhere Cameras and Ubiquitous Sensing

In George Orwell’s 1984,(39) it was the totalitarian Big Brother government that put surveillance cameras on every television. In the reality of 2016, it is consumer electronics companies that build cameras into the common set-top box and every mobile handheld. Indeed, cameras are becoming commodity items. Dedicated hardware is bringing video feature extraction to lower power levels, and other micropower sensors can determine when an image frame actually needs to be grabbed. As both trends continue, cameras will become even more common as generically embedded sensors. The first commercial, fully integrated CMOS camera chips came from VVL in Edinburgh (now part of ST Microelectronics) back in the early 1990s.(40) At the time, pixel density was low (e.g., the VVL “Peach” had 312 × 287 pixels), and the main commercial application of the company’s devices was the “BarbieCam,” a toy video camera sold by Mattel. I was an early adopter of these digital cameras myself. In 1994 I used them for a multi-camera precision alignment system at the Superconducting Supercollider.(41) That system evolved into the hardware used to continually align the forty-meter muon system at micron-level precision for the ATLAS detector at CERN’s Large Hadron Collider. This technology was poised for rapid growth. Integrated cameras now peek at us everywhere, from laptops to cellphones, with typical resolutions of scores of megapixels, bringing computational photography increasingly to the masses. ASICs for basic image processing are commonly embedded with or integrated into cameras, giving increasing video processing capability for ever-decreasing power. The mobile phone market has been driving this effort. Static situated installations (e.g., video-driven motion/context/gesture sensors in smart homes) and augmented reality will increasingly be important consumer applications as well. The requisite on-device image processing will drop in power and become more agile.
We already see this happening at extreme levels, such as with the recently released Microsoft HoloLens. It features six cameras, most of which are used for rapid environment mapping, position tracking, and image registration in a lightweight, battery-powered, head-mounted, self-contained AR unit. 3D cameras are also becoming ubiquitous, having broken into the mass market via the original structured-light-based Microsoft Kinect a half-decade ago. Time-of-flight 3D cameras were pioneered in CMOS in the early 2000s by researchers at Canesta,(42) and they have recently evolved to displace structured-light approaches. Developers worldwide are racing to bring the power and footprint of these devices down far enough to integrate them into common mobile devices (a very small version of such a device is already embedded in the HoloLens). As pixel timing measurements become more precise, photon-counting applications in computational photography, as pursued by my Media Lab colleague Ramesh Raskar, promise to usher in revolutionary new capabilities, such as compensating for diffusion and seeing around corners.(43)
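Many CMOS time-of-flight cameras modulate their illumination continuously and infer depth from the phase shift of the returning light. Whether any particular product mentioned here works this way is an assumption. The light covers the distance twice, so a phase shift φ at modulation frequency f corresponds to a depth d = cφ / (4πf). That depth is unique only within an ambiguity range of c / (2f). The Python sketch below illustrates this and also assumes a common four-sample demodulation scheme, with correlation samples s_k = B + A·cos(φ + kπ/2). Real sensors differ in their sign conventions and calibration. All function names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s

def phase_from_four_samples(s0: float, s1: float, s2: float, s3: float) -> float:
    """Recover phi from samples s_k = B + A*cos(phi + k*pi/2).

    Under that model, s0 - s2 = 2A*cos(phi) and s3 - s1 = 2A*sin(phi),
    so the offset B and the amplitude A both cancel out.
    """
    return math.atan2(s3 - s1, s0 - s2) % (2.0 * math.pi)

def cw_tof_depth(phase_rad: float, mod_freq_hz: float) -> float:
    """Depth from round-trip phase shift: d = c * phi / (4 * pi * f)."""
    return C * (phase_rad % (2.0 * math.pi)) / (4.0 * math.pi * mod_freq_hz)

def ambiguity_range(mod_freq_hz: float) -> float:
    """Largest unambiguous depth; farther targets wrap around to shorter readings."""
    return C / (2.0 * mod_freq_hz)

# Hypothetical 20 MHz modulation: about 7.5 m unambiguous range.
samples = [2.0 + math.cos(1.0 + k * math.pi / 2) for k in range(4)]
phi = phase_from_four_samples(*samples)
print(round(cw_tof_depth(phi, 20e6), 3), round(ambiguity_range(20e6), 3))
```

The formula shows the trade-off directly. Raising the modulation frequency improves depth resolution for a given phase noise but shrinks the unambiguous range, which is why some designs combine measurements at several frequencies.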

My research group began exploring this penetration of ubiquitous cameras over a decade ago, especially applications that ground the video information with simultaneous data from wearable sensors. Our early studies were based around a platform called the “Portals.”(44) Each Portal used an embedded camera feeding a TI DaVinci DSP/ARM hybrid processor, surrounded by a core of basic sensors (motion, audio, temperature/humidity, IR proximity) and coupled with a Zigbee RF transceiver. We scattered forty-five of these devices all over the Media Lab complex, interconnected through the wired building network. One application that we built atop them was “SPINNER,”(45) which labelled video from each camera with data from any wearable sensors in the vicinity. The SPINNER framework was based on the idea of querying the video database with higher-level parameters, lifting sensor data up into a social/affective space.(46) A sequential query could then be scripted as a simple narrative involving human subjects adorned with the wearables. Video clips from large databases containing hundreds of hours of video were then automatically selected to best fit the timeslots in the query, producing edited videos that observers deemed coherent.(47) This work may seem to point naively toward the future of reality television, but it aims further: it seeks to let people engage sensor systems through human-relevant queries and interaction.
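The text does not describe how SPINNER matched clips to timeslots, so the Python below is only a hypothetical sketch of the general idea. Each clip carries sensor-derived descriptors, and each slot in the scripted query asks for certain descriptor values. A greedy pass then fills each slot in turn with the closest clip that has not yet been used. The feature names (“activity,” “social”), the squared-distance score, and all function names are assumptions.

```python
from __future__ import annotations
from dataclasses import dataclass, field

@dataclass
class Clip:
    clip_id: str
    # Hypothetical sensor-derived descriptors, e.g. {"activity": 0.8, "social": 0.3}
    features: dict[str, float] = field(default_factory=dict)

def mismatch(clip: Clip, target: dict[str, float]) -> float:
    """Squared distance over the features a timeslot asks for (missing features count as 0)."""
    return sum((clip.features.get(name, 0.0) - want) ** 2 for name, want in target.items())

def assemble_sequence(clips: list[Clip], slots: list[dict[str, float]]) -> list[str]:
    """Greedily fill each timeslot, in order, with the best not-yet-used clip."""
    used: set[str] = set()
    sequence: list[str] = []
    for target in slots:
        candidates = [c for c in clips if c.clip_id not in used]
        if not candidates:
            break  # ran out of footage
        best = min(candidates, key=lambda c: mismatch(c, target))
        used.add(best.clip_id)
        sequence.append(best.clip_id)
    return sequence

clips = [Clip("a", {"activity": 0.9, "social": 0.1}),
         Clip("b", {"activity": 0.1, "social": 0.9}),
         Clip("c", {"activity": 0.5, "social": 0.5})]
# A two-beat "narrative": a social scene followed by an energetic one.
print(assemble_sequence(clips, [{"social": 1.0}, {"activity": 1.0}]))  # ['b', 'a']
```

Greedy filling is easy to follow but not globally optimal. An assignment solver over all slots at once could find a better overall fit when good clips are contested.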

Rather than trying to extract stories from passive ambient activity, a related project from our team devised an interactive camera intended to extract structured stories from people.(48) “Boxie” took the form factor of a small mobile robot and carried an HD camera in one of its eyes. It would rove our building, get stuck, and then plead for help when people came nearby. It would then ask people successive questions and request that they perform various tasks (e.g., bring it to another part of the building, or show it what they do in the area where it was found). The result was an indexed video that could easily be edited into something of a documentary about the people in the robot’s abode.

In the coming years, large video surfaces will cost less (potentially being roll-to-roll printed) and will be better integrated with responsive networks. As that happens, we will see the common deployment of pervasive interactive displays. Information coming to us will manifest in the most appropriate fashion (e.g., in your smart eyeglasses or on a nearby display), and the days of pulling your phone out of your pocket and running an app are numbered. To explore this, we ran a project in my team called “Gestures Everywhere,”(49) which exploited the large monitors placed all over the public areas of our building complex.(50) These displays were already equipped with RFID to identify people wearing tagged badges, and we added a sensor suite and a Kinect 3D camera to each display site. As occupants approached a display and were identified via RFID or video recognition, the information most relevant to them would appear on the display. We developed a recognition framework for the Kinect that parsed a small set of generic hand gestures (e.g., signifying “next,” “more detail,” “go away,” etc.). This allowed users to interact with their own data at a basic level without touching the screen or pulling out a mobile device. Indeed, proxemic interactions(51) around ubiquitous smart displays will be common within the next decade.

The plethora of cameras that we sprinkled throughout our building during our SPINNER project raised concerns about privacy. Interestingly enough, the Kinects for Gestures Everywhere did not evoke the same response; occupants either did not see them as “cameras” or were becoming used to the idea of ubiquitous vision. Accordingly, we put an obvious power switch on each Portal so that it could easily be turned off. This is a very artificial solution, however, because in the near future there will simply be too many cameras and other invasive sensors in the environment to switch off. These devices must respond to verifiable and secure protocols that dynamically and appropriately throttle streaming sensor data to meet users’ privacy demands. To study solutions to such concerns, we designed a small wireless token that controlled our Portals.(52) It broadcast a beacon to the vicinity that dynamically deactivated the transmission of nearby audio, video, and other derived features according to the user’s stated privacy preferences. The token also featured a large “panic” button that could be pushed at any time when immediate privacy was desired, blocking audio and video from emanating from nearby Portals.
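On the receiving side, a Portal must decide which streams it may still transmit given the beacons it has recently heard. The Python below is a hypothetical sketch of that decision. The beacon fields, stream names, and five-second timeout are assumptions, and the authentication that the text calls for is deliberately left out. The one rule taken from the text is that a panic press blocks audio and video.

```python
from __future__ import annotations
from dataclasses import dataclass

ALL_STREAMS = frozenset({"audio", "video", "features"})
PANIC_BLOCKS = frozenset({"audio", "video"})  # per the text, panic blocks audio and video

@dataclass(frozen=True)
class PrivacyBeacon:
    """One received beacon (hypothetical format; signature checking is omitted)."""
    user_id: str
    blocked_streams: frozenset[str]
    panic: bool = False
    heard_at_s: float = 0.0

def allowed_streams(beacons: list[PrivacyBeacon], now_s: float,
                    timeout_s: float = 5.0) -> frozenset[str]:
    """Streams a Portal may transmit: everything minus the union of recent requests."""
    blocked: set[str] = set()
    for beacon in beacons:
        if now_s - beacon.heard_at_s > timeout_s:
            continue  # stale: the token has presumably left the area
        blocked |= beacon.blocked_streams
        if beacon.panic:
            blocked |= PANIC_BLOCKS
    return ALL_STREAMS - blocked

alice = PrivacyBeacon("alice", frozenset({"video"}), heard_at_s=0.0)
print(sorted(allowed_streams([alice], now_s=1.0)))  # ['audio', 'features']
```

Taking the union of all nearby requests means the most privacy-demanding person in range wins. Expiring stale beacons fails open, so a deployment more cautious about missed packets might instead keep blocking until the token is positively known to have left.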

Rather than block the video stream entirely, we have explored just removing the privacy-desiring person from the video image. By using information from wearable sensors, we can more easily identify the appropriate person in the image,(53) and blend them into the background. We are also looking at the opposite issue—using wearable sensors to detect environmental parameters that hint at potentially hazardous conditions for construction workers and rendering that data in different ways atop real-time video, highlighting workers in situations of particular concern.(54)
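Once the privacy-desiring person has been located in the frame (for instance, by a detector cued by their wearable data), removing them can be as simple as substituting a background estimate for their pixels. The NumPy sketch below shows that substitution together with a running-average background model that stops updating wherever the person is present. It is an illustrative assumption, not the method of the cited work. The person mask is taken as given, and the function names and blending rate are hypothetical.

```python
import numpy as np

def update_background(background, frame, person_mask, alpha=0.05):
    """Exponential running-average background, frozen wherever a person is present."""
    bg = background.astype(np.float32)
    cur = frame.astype(np.float32)
    mask = person_mask.astype(bool)
    if bg.ndim == 3:  # broadcast a 2D mask across color channels
        mask = mask[..., None]
    blended = (1.0 - alpha) * bg + alpha * cur
    return np.where(mask, bg, blended)

def erase_person(frame, background, person_mask):
    """Replace the masked person's pixels with the background estimate."""
    mask = person_mask.astype(bool)
    if frame.ndim == 3:
        mask = mask[..., None]
    return np.where(mask, background.astype(frame.dtype), frame)

frame = np.full((2, 2), 9, dtype=np.uint8)
background = np.zeros((2, 2), dtype=np.float32)
mask = np.array([[1, 0], [0, 0]])
print(erase_person(frame, background, mask).tolist())  # [[0, 9], [9, 9]]
```

Freezing the model under the mask keeps a person who lingers in one spot from slowly being absorbed into the background, which would otherwise make them reappear in the “erased” output.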

Energy Management

The energy needed to sense and process has steadily declined, as sensors and embedded sensor systems have taken full advantage of low-power electronics and smart power management.(55) Similarly, energy harvesting, once an edgy curiosity, has become a mainstream drumbeat resonating throughout the embedded sensor community.(56), (57) The appropriate manner of harvesting depends heavily on the nature of the ambient energy reserve. In general, for inhabited environments, solar cells tend to be the best choice (providing over 100 µA/cm² indoors, depending on light levels, etc.). Otherwise, vibrational and thermoelectric harvesters can be exploited where there is sufficient vibration or heat transfer (e.g., in vehicles or on the body),(58), (59) and implementations of ambient RF harvesting(60) are not uncommon either. In most practical implementations that constrain volume and surface area, however, an embedded battery can be the better solution over a device’s anticipated lifetime. The limited power available from a harvester already places a strong constraint on the current that the electronics may draw. Another solution is to beam RF or magnetic energy to batteryless sensors, as in RFID systems. This approach was popularized in our community starting with Intel’s WISP,(61) which my team used for the aforementioned finger-tracking rings.(62) Commercial wirelessly powered systems are now beginning to appear (e.g., Powercast, WiTricity, etc.), although each has its caveats (e.g., limited power or high magnetic flux density). Indeed, it is now common to see inductive “pucks” in popular cafés that charge cellphones placed above primary coils embedded under the table. Certain phones also have inductive charging coils embedded behind their case. These seem to be seldom used, however, as it is more convenient to plug in a ubiquitous USB cable, and the wire eliminates the position constraints that proximate inductive charging can impose.
In my opinion, due to its less-than-optimal convenience, high lossiness (especially relevant in our era of conservation), and potential health concerns (largely overstated,(63) but more important if you have a pacemaker, etc.), wireless power transfer will be restricted mainly to niche and low-power applications like micropower sensors that have no access to light for photovoltaics, and perhaps special closets or shelves at home where our wearable devices can just be hung up to be charged.
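The harvester-versus-battery trade-off comes down to simple arithmetic. The Python sketch below computes the largest duty cycle a harvester can sustain and the lifetime a battery gives at a given average current. It makes several simplifying assumptions. Harvested and consumed power are both expressed as currents on a common supply rail, all conversion losses are lumped into one efficiency factor, and battery self-discharge and temperature effects are ignored. The example numbers are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365.25

def sustainable_duty_cycle(i_harvest_a: float, i_sleep_a: float, i_active_a: float,
                           conversion_eff: float = 0.8) -> float:
    """Largest fraction of time the node can be active without draining storage.

    Solves  eff * I_harvest = I_sleep + D * (I_active - I_sleep)  for D, clamped to [0, 1].
    """
    if i_active_a <= i_sleep_a:
        raise ValueError("active current must exceed sleep current")
    available = i_harvest_a * conversion_eff
    if available <= i_sleep_a:
        return 0.0  # the harvester cannot even cover sleep current
    return min(1.0, (available - i_sleep_a) / (i_active_a - i_sleep_a))

def average_current_a(i_sleep_a: float, i_active_a: float, duty: float) -> float:
    """Time-weighted mean current for a node that is active a fraction `duty` of the time."""
    return i_sleep_a + duty * (i_active_a - i_sleep_a)

def battery_life_years(capacity_mah: float, avg_current_a: float) -> float:
    """Ideal lifetime: capacity divided by average draw."""
    return (capacity_mah / 1000.0) / avg_current_a / HOURS_PER_YEAR

# Hypothetical node: 100 uA harvested, 2 uA asleep, 10 mA while sensing and transmitting.
d = sustainable_duty_cycle(100e-6, 2e-6, 10e-3)
print(f"sustainable duty cycle: {d:.3%}")
print(f"220 mAh cell at 10 uA: {battery_life_years(220, 10e-6):.1f} years")
```

Even a generous harvester supports well under one percent active time at radio-level currents in this example. That is why harvesting pairs naturally with aggressive duty cycling.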

The boom in energy harvesting has precipitated a variety of ICs dedicated to managing and conditioning the minute amounts of power that harvesters typically produce (e.g., from major IC providers like Linear Technology and Maxim). The dream of integrating harvester, power conditioning, sensor, processing, and perhaps wireless on a single chip nears reality, at least for extremely low duty-cycle operation or environments with large reservoirs of ambient energy. Indeed, the dream of such Smart Dust(64) has been realized in early prototypes, such as the University of Michigan wafer stack that uses its camera as an optical energy harvester.(65) The recently announced initiative to produce laser-accelerated, gram-level “Star Wisp” swarm sensors for fast interstellar exploration(66) will emphasize this area even more.

The general public often confuses energy harvesting with sustainable energy sources (see, for example,(67)). Yet the amount of energy harvestable in standard living environments is far too small to make any real contribution to society’s power needs. On the other hand, very low-power sensor systems, perhaps augmented by energy harvesting, minimize or eliminate the need to change batteries. This decreases deployment overhead and hence increases the penetration of ubiquitous sensing. The information derived from these embedded sensors can then be used to minimize energy consumption intelligently in built environments, promising a better degree of conservation and utility than today’s discrete thermostats and lighting controls can attain.

Work of this sort dates back to the Smart Home of Michael Mozer(68) and has recently become something of a lightning rod in ubiquitous computing circles, as several research groups have launched projects in smart, adaptive energy management through pervasive sensing.(69) For example, in my group, my then-student Mark Feldmeier used inexpensive chip-based piezo cantilevers to make a micropower, integrating, wrist-worn activity detector (before the dawn of commercial exercise-tracker wristbands). The same device served as an environmental monitor for a wearable HVAC controller.(70) It goes beyond subsequently introduced commercial systems such as the well-known Nest Thermostat in two ways. The wearable provides a first-person perspective that relates more directly to comfort than a static wall-mounted sensor does, and it is intrinsically mobile, exerting HVAC control in any suitably equipped room or office. Exploiting analog processing, this continuous activity integrator runs at under 2 microamperes. The device’s other components also measure and broadcast temperature, humidity, and light level, and the whole device runs for over two years on a small coin-cell battery, updating once per minute when within range of our network. We have used the data from this wrist-worn device to essentially graft onto the user’s “sense of comfort.” Users push “hot” and “cold” buttons on the device, and these labels, combined with the wearable sensor data, let us infer the comfort of local users and customize our building’s HVAC system accordingly. We estimated that the HVAC system running under our framework used roughly 25% less energy, and inhabitants were significantly more comfortable.(71) Others have recently developed similar wireless micropower sensors for HVAC control, such as Schneider Electric in Grenoble,(72) but these are used in a fixed location.
Measuring temperature, humidity, and CO2 levels every minute and uploading the readings via a Zigbee network, the Schneider unit is powered by a small solar cell—one day of standard indoor light is sufficient to power this device for up to four weeks (the duration of a typical French vacation).
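The text does not describe how the “hot” and “cold” labels were turned into control decisions, so the following is only a hypothetical Python illustration of the idea. It takes the temperatures at which a user pressed each button and places the comfort setpoint midway between the average “too hot” and average “too cold” readings. When only one kind of label exists, it nudges the setpoint half a degree away from it. The default setpoint, step size, and function name are assumptions.

```python
from statistics import mean

def comfort_setpoint(votes, default_c=22.0, step_c=0.5):
    """Estimate a user's preferred temperature from (temperature_c, label) button presses.

    label is "hot" (user felt too warm) or "cold" (user felt too cool).
    """
    hot = [t for t, label in votes if label == "hot"]
    cold = [t for t, label in votes if label == "cold"]
    if hot and cold:
        return (mean(hot) + mean(cold)) / 2  # midway between the two complaint clusters
    if hot:
        return min(hot) - step_c  # only "too warm" presses: aim just below the coolest one
    if cold:
        return max(cold) + step_c  # only "too cool" presses: aim just above the warmest one
    return default_c  # no feedback yet

votes = [(25.0, "hot"), (26.0, "hot"), (20.0, "cold"), (21.0, "cold")]
print(comfort_setpoint(votes))  # 23.0
```

A fuller version would also condition on the wearable’s activity and humidity readings, since the same temperature can feel different after walking up stairs than after an hour at a desk.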

Lighting control is another application that can benefit from ubiquitous computing, and not only for energy management: we also need to answer the user interface challenge that modern lighting systems pose. The actuation possibilities of today’s networked, digitally controlled fluorescent and solid-state lights are myriad, yet the human interface suffers greatly. Commercial lighting in particular is generally managed by cryptic control panels that make getting the lighting you want onerous at best. Lamenting the loss of the simple light switch, my group has launched a set of projects to bring it back in virtual form, led by my student Nan Zhao and alums Matt Aldrich and Asaf Axaria. One route we have pursued uses feedback from a small incident-light sensor. This sensor can isolate the specific contribution of each nearby light fixture to the overall lighting at its location, and it can also estimate external, uncontrolled light.(73)
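One simple way to isolate each fixture’s contribution is to model the sensor reading as a linear sum and fit it. The reading is taken to be the ambient light plus each fixture’s gain times its commanded dimming level, and the gains and ambient term are estimated from a few calibration readings by least squares. This is an assumption for illustration and not necessarily how the cited system works. It presumes the external light stays constant during calibration, which real daylight does not. The function names are hypothetical.

```python
import numpy as np

def estimate_fixture_gains(dim_levels, sensor_lux):
    """Fit  sensor_lux ~= ambient + sum_i gain_i * level_i  by ordinary least squares.

    dim_levels: (n_measurements, n_fixtures) commanded levels in [0, 1].
    Returns (per-fixture gains in lux at full output, ambient lux).
    """
    X = np.asarray(dim_levels, dtype=float)
    y = np.asarray(sensor_lux, dtype=float)
    design = np.column_stack([X, np.ones(len(y))])  # last column models ambient light
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef[:-1], float(coef[-1])

def predict_lux(gains, ambient, levels):
    """Illuminance the linear model expects at the sensor for a given set of levels."""
    return ambient + float(np.dot(gains, levels))

# Hypothetical calibration: two fixtures, readings generated as 30 + 100*a + 50*b lux.
levels = [[0, 0], [1, 0], [0, 1], [1, 1], [0.5, 0.5]]
lux = [30 + 100 * a + 50 * b for a, b in levels]
gains, ambient = estimate_fixture_gains(levels, lux)
print(np.round(gains, 3), round(ambient, 3))
```

Because the fit is linear, it needs at least one more well-chosen measurement than there are fixtures. Repeating it periodically lets the ambient estimate track slow changes such as daylight.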

Our control algorithm, based around a simple linear program, is able to dynamically calculate the energy-optimal lighting distribution that delivers the desired illumination at the sensor location, effectively bringing back the local light switch as a small wireless device.
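For the single-sensor case, the linear program has a particularly simple structure. The goal is to minimize total electrical power Σ pᵢxᵢ subject to ambient + Σ gᵢxᵢ ≥ target and 0 ≤ xᵢ ≤ 1. With only one illuminance constraint, this is a fractional knapsack problem, so turning fixtures up in decreasing order of lux-per-watt is optimal. The Python sketch below exploits that. It is an illustration under these assumptions rather than our actual controller. With several sensors the LP gains several constraints, and a general solver such as `scipy.optimize.linprog` would be the natural tool. The function name and example numbers are hypothetical.

```python
def optimal_dimming(gains_lux, power_w, target_lux, ambient_lux):
    """Minimize sum(power_w[i] * x[i]) s.t. ambient + sum(gains_lux[i] * x[i]) >= target, 0 <= x <= 1.

    With a single illuminance constraint this LP is a fractional knapsack,
    so filling fixtures in decreasing order of lux-per-watt is optimal.
    """
    if any(p <= 0 for p in power_w):
        raise ValueError("fixture power must be positive")
    levels = [0.0] * len(gains_lux)
    need = target_lux - ambient_lux
    if need <= 0:
        return levels  # daylight alone already meets the target
    order = sorted(range(len(gains_lux)),
                   key=lambda i: gains_lux[i] / power_w[i], reverse=True)
    for i in order:
        g = gains_lux[i]
        if g <= 0:
            continue  # this fixture does not reach the sensor
        if g >= need:
            levels[i] = need / g  # partial dimming finishes the job
            return levels
        levels[i] = 1.0
        need -= g
    raise ValueError("target unreachable even with every fixture at full output")

# Hypothetical: fixture 0 gives 200 lux for 40 W, fixture 1 gives 100 lux for 10 W.
print(optimal_dimming([200.0, 100.0], [40.0, 10.0], target_lux=300.0, ambient_lux=100.0))
# [0.5, 1.0]
```

The more efficient fixture is driven to full output first, and the less efficient one supplies only the remainder. This is exactly the behavior a general LP solver would return for this instance.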

Our ongoing work in this area expands on this idea by also incorporating cameras, both as distributed reflected-light sensors and as a source of features for interactive lighting control. Using principal-component analysis, we have derived a set of continuous control axes for distributed lighting that are relevant to human perception,(74) enabling easy and intuitive adjustment of networked lighting. We are also driving this system from the wearable sensors in Google Glass. The lighting adjusts automatically to optimize illumination of the surfaces and objects you are looking at, and the system derives context to set the overall lighting, for instance seamlessly transitioning between lighting appropriate for work and for a casual or social function.(75) Our most recent work in this area also incorporates large, soon-to-be-ubiquitous displays. It changes images/video, sound, and lighting in order to steer the user’s emotional and attentional state, as detected by wearable sensors and nearby cameras,(76) toward healthy and productive levels.
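As a rough illustration of what “continuous control axes via principal-component analysis” can mean, the NumPy sketch below runs PCA over a set of example lighting scenes, where each row holds one scene’s fixture levels. It then maps slider positions along the leading components back to fixture levels. This is a simplification and an assumption. The cited work derives axes relevant to human perception, presumably from perceptual and camera features rather than from raw fixture levels as done here. The function names and example scenes are hypothetical.

```python
import numpy as np

def principal_axes(scenes, n_axes=2):
    """PCA of example lighting scenes (rows = scenes, columns = fixture levels in [0, 1])."""
    X = np.asarray(scenes, dtype=float)
    mean = X.mean(axis=0)
    _, s, vt = np.linalg.svd(X - mean, full_matrices=False)
    std = s / np.sqrt(max(len(X) - 1, 1))  # spread of the examples along each axis
    return mean, vt[:n_axes], std[:n_axes]

def scene_from_sliders(mean, axes, std, sliders):
    """Map slider positions (in standard deviations) to fixture levels, clipped to [0, 1]."""
    offsets = np.asarray(sliders, dtype=float) * std
    return np.clip(mean + offsets @ axes, 0.0, 1.0)

# Hypothetical scenes for two fixtures that trade brightness against each other.
scenes = [[0.2, 0.8], [0.8, 0.2], [0.5, 0.5]]
mean, axes, std = principal_axes(scenes, n_axes=1)
print(scene_from_sliders(mean, axes, std, [0.0]))  # centered slider gives the mean scene
print(scene_from_sliders(mean, axes, std, [1.0]))  # one std along the first axis
```

The sign of each principal axis is arbitrary in an SVD, so a real interface would fix it, for example so that moving a slider upward always brightens the work surface.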

Radio, Location, Electronic “Scent,” and Sensate Media

The last decade has seen a huge expansion in wireless sensing, which is having a deep impact on ubiquitous computing. Mixed-signal IC layout techniques have enabled silicon radios to be embedded on the same die as capable microprocessors. This has ushered in a host of easy-to-use smart radio ICs, most of which support common standards like Zigbee running atop 802.15.4. Companies like Nordic, TI/Chipcon, and more recently Atmel, for example, make commonly used devices in this family. A plethora of sensor-relevant wireless standards are in place that optimize for different applications, e.g., ANT for low duty-cycle operation, Savi’s DASH-7 for supply chain implementation, WirelessHART for industrial control, Bluetooth Low Energy for consumer devices, LoRaWAN for longer-range IoT devices, and low-power variants of Wi-Fi. Zigbee, however, has had a long run as a well-known standard for low-power RF communication. Zigbee-equipped sensor modules are readily available that can run operating systems derived from sensor-net research (e.g., dating to TinyOS(77)) or custom-developed application code. Multihop operation is standard in Zigbee’s routing protocol, but battery limitations generally dictate that routing nodes be externally powered. Zigbee could be on the verge of being replaced by the more recently developed protocols listed above, but my team has based most of its environmental and static sensor installations on modified Zigbee software stacks.

Outdoor location to within several meters has long been dominated by GPS. More precise location is provided by differential GPS, which will soon be much more common via recent low-cost devices like RTK (Real Time Kinematic) GPS embedded radios.(78) Indoor location is another story. It is much more difficult, as GPS signals are shielded and indoor environments present limited lines of sight and excessive multipath. There seems to be no one clear technology winner for all indoor situations, as witnessed by the results of the Microsoft Indoor Location Competition run yearly at recent IPSN conferences.(79) Still, the increasing development effort being thrown at the problem promises that precise indoor location will soon be a commonplace feature in wearable, mobile, and consumer devices.

Networks running 802.15.4 (or 802.11, for that matter) generally offer a limited location capability based on RSSI (received signal strength) and radio link quality. These essentially amplitude-based techniques are subject to considerable error due to dynamic and complex RF behavior in indoor environments. Given sufficient information from multiple base stations, and by exploiting prior state and other constraints, these “fingerprinting” systems claim to localize mobile nodes indoors to within three to five meters.(80) Time-of-flight RF systems, however, promise to do much better. One clever technique exploits the differential phase of RF beats between simultaneously transmitting outdoor radios, and it has been shown to localize a grid of wireless nodes to within three centimeters across a football field.(81) Directional antennae also achieve roughly thirty centimeters or better accuracy, as in Nokia’s HAIP system.(82)
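RSSI fingerprinting usually works in two phases. In a survey phase, one records the signal strength of each base station at known positions. In a lookup phase, a live reading is compared against that survey. The Python sketch below shows the common weighted k-nearest-neighbor variant of the lookup phase. It is a minimal illustration, not any particular cited system. The −100 dBm stand-in for unheard stations, the inverse-distance weighting, and the function names are assumptions.

```python
import math

def rssi_distance(a, b, missing_dbm=-100.0):
    """Euclidean distance between two {base_station_id: rssi_dbm} readings."""
    stations = set(a) | set(b)
    return math.sqrt(sum((a.get(s, missing_dbm) - b.get(s, missing_dbm)) ** 2
                         for s in stations))

def knn_locate(fingerprints, observation, k=3, missing_dbm=-100.0):
    """Estimate (x, y) as the inverse-distance-weighted mean of the k closest survey points.

    fingerprints: list of ((x, y), {base_station_id: rssi_dbm}) from a site survey.
    """
    if not fingerprints:
        raise ValueError("no survey fingerprints")
    scored = sorted(((rssi_distance(fp, observation, missing_dbm), pos)
                     for pos, fp in fingerprints), key=lambda t: t[0])[:k]
    weights = [1.0 / (d + 1e-6) for d, _ in scored]  # epsilon avoids division by zero
    total = sum(weights)
    x = sum(w * pos[0] for w, (_, pos) in zip(weights, scored)) / total
    y = sum(w * pos[1] for w, (_, pos) in zip(weights, scored)) / total
    return x, y

survey = [((0.0, 0.0), {"a": -40, "b": -80}),
          ((10.0, 0.0), {"a": -80, "b": -40}),
          ((5.0, 5.0), {"a": -60, "b": -60})]
print(knn_locate(survey, {"a": -58, "b": -62}, k=2))
```

The accuracy of such a system is bounded by how densely the site was surveyed and how much the RF environment has changed since then. This is one reason amplitude-based methods plateau at the meter level.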

However, the emerging technology of choice for lightweight precision RF location is low-power, impulse-based UltraWideBand. In this approach, short radio impulses at around 5-8 GHz are precisely timed, promising cm-level accuracy. High-end commercial products have been available for a while now (e.g., UbiSense for indoor location, and Zebra Technologies radios for stadium-scale [sports] location). Emerging chipsets from companies like Decawave and Qualcomm (via their evolving “Peanut” radio) indicate that this technology might soon be in everything. As soon as every device can be located to within a few centimeters, profound applications will arise. Geofencing and proximate interfaces(83) are obvious examples, but this capability will impact everything.
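Impulse radios turn precise timing into distance. In single-sided two-way ranging, one node sends a pulse, and the other replies after a known turnaround delay. The initiator then computes d = c · (t_round − t_reply) / 2. With ranges to three or more fixed anchors, a position follows by subtracting one anchor’s circle equation from the others, which leaves a linear system that can be solved by least squares. The Python sketch below illustrates both steps in 2D. It is a textbook formulation, not the method of any product named above. Single-sided ranging is sensitive to clock drift during the reply delay, and double-sided variants exist to reduce that error. The function names and geometry are hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s

def twr_distance(t_round_s: float, t_reply_s: float) -> float:
    """Single-sided two-way ranging: half the round trip minus the responder's delay."""
    return C * (t_round_s - t_reply_s) / 2.0

def trilaterate_2d(anchors, ranges):
    """Least-squares (x, y) from ranges to three or more non-collinear anchors.

    Subtracting anchor 0's circle equation from anchor i's gives the linear row
    2(xi - x0) x + 2(yi - y0) y = r0^2 - ri^2 + xi^2 - x0^2 + yi^2 - y0^2.
    """
    (x0, y0), r0 = anchors[0], ranges[0]
    rows, rhs = [], []
    for (xi, yi), ri in zip(anchors[1:], ranges[1:]):
        rows.append((2.0 * (xi - x0), 2.0 * (yi - y0)))
        rhs.append(r0**2 - ri**2 + xi**2 - x0**2 + yi**2 - y0**2)
    # Normal equations (A^T A) p = A^T b for the two unknowns, solved by Cramer's rule.
    a11 = sum(a * a for a, _ in rows)
    a12 = sum(a * b for a, b in rows)
    a22 = sum(b * b for _, b in rows)
    b1 = sum(a * r for (a, _), r in zip(rows, rhs))
    b2 = sum(b * r for (_, b), r in zip(rows, rhs))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("need at least three non-collinear anchors")
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det

anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
ranges = [5.0, 65 ** 0.5, 45 ** 0.5]  # exact ranges from a tag at (3, 4)
print(trilaterate_2d(anchors, ranges))
```

Each nanosecond of timing error corresponds to about 15 cm of range error after halving. This explains why the precise impulse timing of UWB, rather than signal strength, is what makes centimeter-level location plausible.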

One example of this technology from my team is the “WristQue” wristband produced by Brian Mayton.(84) Equipped with precise indoor location (via the UbiSense system) as well as a full 9-axis IMU and sensors to implement the smart lighting and HVAC systems discussed above, the WristQue also enables control of devices by simple pointing and arm gestures. Pointing is an intuitive way for humans to communicate, and camera-based systems have explored it ever since the classic “Put That There” demo was developed at MIT’s Architecture Machine Group, the Media Lab’s precursor, in 1980.(85) Because the WristQue knows the position of the wrist to within centimeters via soon-to-be-ubiquitous radio location, and the wrist angle via the IMU (calibrated for local magnetic distortion), we can easily extrapolate the arm’s vector to determine what a user is pointing at without the use of cameras.
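Extrapolating the arm’s vector reduces to a little geometry. The sketch below assumes the IMU reports a unit orientation quaternion (w, x, y, z) whose rotated x axis points along the forearm, that the wrist and all devices share one room frame in meters, and that device locations are already known. The names `forward_from_quaternion` and `pointed_target` and the 10° acceptance cone are illustrative choices, not WristQue’s actual implementation.

```python
import math

def forward_from_quaternion(w, x, y, z):
    """Rotate the body x axis (assumed to run along the forearm) by a unit
    quaternion; the result is the first column of the rotation matrix."""
    return (1 - 2 * (y * y + z * z), 2 * (x * y + w * z), 2 * (x * z - w * y))

def pointed_target(wrist_pos, arm_dir, targets, max_angle_deg=10.0):
    """Return the target whose direction from the wrist makes the smallest
    angle with the arm vector, or None if nothing lies inside the cone."""
    norm = math.sqrt(sum(c * c for c in arm_dir))
    u = [c / norm for c in arm_dir]
    best, best_angle = None, math.radians(max_angle_deg)
    for name, pos in targets.items():
        v = [t - q for t, q in zip(pos, wrist_pos)]  # wrist -> target
        dist = math.sqrt(sum(c * c for c in v))
        if dist == 0.0:
            continue
        cos_a = sum(a * b for a, b in zip(u, v)) / dist
        angle = math.acos(max(-1.0, min(1.0, cos_a)))  # clamp for rounding
        if angle <= best_angle:
            best, best_angle = name, angle
    return best
```

Picking the smallest angle inside a cone, rather than requiring an exact ray hit, tolerates both residual location error and the natural imprecision of human pointing.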

Different locations also exhibit specific electromagnetic signatures or “scents” that can be detected via simple capacitive, inductive, RF, and optical sensors. These arise from parasitic emissions from power lines and various local emanations from modulated lighting and other electrical equipment; these signals are also shaped by building wiring and structure. Such background signals carry information relevant to energy usage and context (e.g., which appliance is doing what and how much current it is drawing) and also to location (as these near-field or limited-radius emanations attenuate quickly). Systems that exploit these passive background signals, generally through a machine-learning process, have been a recent fashion in UbiComp research, and have seen application in areas like non-intrusive load monitoring,(86) tracking hands across walls,(87) and inferring gestures around fluorescent lamps.(88) DC magnetic sensors have also been used to precisely locate people in buildings, as the Earth’s magnetic field, distorted by the building’s structure, varies considerably in orientation with position.(89)

My team has leveraged ambient electronic noise in wearables, analyzing characteristics of capacitively coupled pickup to identify devices touched and infer their operating modes.(90) My then-student Nan-Wei Gong and I (in collaboration with Microsoft Research in the UK) have developed a multimodal electromagnetic sensor floor with pickups and basic circuitry printed via an inexpensive roll-to-roll process.(91) The networked sensing cells along each strip can track people walking above via capacitive induction of ambient 60 Hz hum, as well as track the location of an active GSM phone or near-field transmitter via its emanations. This is a recent example of what we have termed “Sensate Media”(92): the integration of low-power sensor networks into common materials to make them sensorially capable, in effect a scalable “electronic skin.”
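Tracking people via ambient hum amounts to measuring the strength of one known frequency on each electrode. The sketch below uses the Goertzel algorithm, which computes a single DFT bin cheaply and suits a small microcontroller on each sensing cell. It assumes a 1200 Hz sample rate, 60 Hz mains, and that occupancy shows up as a rise in the 60 Hz amplitude above an empty-floor baseline; the 1.5× threshold in `occupied` is a hypothetical tuning value, and none of this is the published floor’s actual signal chain.

```python
import math

def goertzel_power(samples, fs, f_target):
    """Squared magnitude of the DFT bin nearest f_target (Goertzel algorithm)."""
    n = len(samples)
    k = round(n * f_target / fs)  # nearest DFT bin index
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev = s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2  # second-order IIR recurrence
        s_prev2, s_prev = s_prev, s
    return s_prev**2 + s_prev2**2 - coeff * s_prev * s_prev2

def hum_amplitude(samples, fs, mains_hz=60.0):
    """Peak amplitude of the mains component, assuming the window holds a
    whole number of mains cycles."""
    power = max(0.0, goertzel_power(samples, fs, mains_hz))  # guard rounding
    return 2.0 * math.sqrt(power) / len(samples)

def occupied(samples, fs, baseline_amp, ratio=1.5):
    """Hypothetical rule: flag the cell when hum exceeds ratio x baseline."""
    return hum_amplitude(samples, fs) > ratio * baseline_amp
```

With a 0.2 s window (240 samples, twelve full 60 Hz cycles), other mains harmonics such as 180 Hz fall in different, orthogonal bins and do not disturb the 60 Hz estimate.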

Our most recent work in this area has produced SensorTape,(93) a roll of networked embedded sensors on a flexible substrate in the form factor of common adhesive tape. Although our SensorTape can be many meters long, it can be spooled off, cut, and rejoined as needed and attached to an object or surface to give it sensing capability (our current tape embeds devices like IMUs and proximity sensors at roughly a 1-inch pitch for distributed orientation and ranging). We have also explored versions of SensorTape that exploit printed sensors and are passively powered,(94) enabling them to be attached to inaccessible surfaces and building materials and then wirelessly interrogated by, for example, NFC readers. Where sensors cannot be printed, they can also be implemented as stickers with conductive adhesive, as recently pioneered for craft and educational applications(95) by my student Jie Qi and Media Lab alum bunnie Huang in their commercially available Chibitronics kits.
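One way distributed orientation sensing can recover the tape’s shape is by chaining segments end to end. The sketch below assumes a 2D layout, that each node’s IMU yields the in-plane heading of the tape segment following it, and a rigid 1-inch (25.4 mm) segment length. The function name and these simplifications are hypothetical; SensorTape’s own reconstruction may well differ (for example, by working in 3D with full rotations).

```python
import math

PITCH_M = 0.0254  # assumed 1-inch node spacing, in meters

def reconstruct_shape(headings_deg, pitch=PITCH_M):
    """Chain per-node in-plane headings into 2D node positions.

    Node 0 sits at the origin; each heading gives the direction of the rigid
    segment from that node to the next one."""
    x = y = 0.0
    points = [(x, y)]
    for heading in headings_deg:
        r = math.radians(heading)
        x += pitch * math.cos(r)
        y += pitch * math.sin(r)
        points.append((x, y))
    return points
```

Because each position is a running sum, a small heading error at one node displaces every node after it, so longer tapes would need periodic correction, for instance from the proximity sensors mentioned above.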

Aggregating and Visualizing Diverse Sensor Data

We have witnessed an explosion of real-time sensor data flowing through our networks, and the rapid expansion of smart sensors at all scales should soon cause real-time sensor information to dominate network traffic. At the moment, this sensor information is still fairly siloed: video is for video conferencing, telephony, and webcams; traffic data shows up on traffic websites; and so on. A grand challenge for our community is to break down the walls between these niches and develop systems and protocols that seamlessly bridge these categories to form a virtual sensor environment, where all relevant data is dynamically collated and fused. Several initiatives have begun to explore these ideas of unifying data. Frameworks based on SensorML, Home Plug & Play, and DLNA, for example, have been aimed at enabling online sensors to easily provide data across applications. Pachube (now Xively(96)) is a proprietary framework that enables subscribers to upload their data to a broad common pool that can then be provided to many different applications. These protocols, however, have had limited penetration for various reasons, such as platform owners’ desire to own all of the data and charge for it, or limited buy-in from manufacturers. Recent industrial initiatives, such as the Qualcomm-led AllJoyn(97) or the Intel-launched Open Interconnect Consortium with IoTivity,(98) are more closely aimed at the Internet of Things and are less dominated by consumer electronics. Rather than wait for these somewhat heavy protocols to mature, my student Spencer Russell has developed our own, called CHAIN-API.(99), (100) CHAIN is a RESTful (REpresentational State Transfer), web-literate framework based on JSON data structures, and hence is easily parsed by tools commonly available to web applications. Once it hits the Internet, sensor data is posted, described, and linked to other sensor data in CHAIN.
Exploiting CHAIN, applications can “crawl” sensor data just as they now crawl linked static posts; hence related data can be decentralized and live on diverse servers (I believe that in the future no entity will “own” your data, hence it will be distributed). Crawlers will constantly traverse data linked in protocols like CHAIN, calculating state and estimating other parameters derived from the data, which in turn will be re-posted as other “virtual” sensors in CHAIN. As an example of this, my student David Ramsay has implemented a system called “LearnAir,”(101) in which he has used CHAIN to develop a protocol for posting data from air-quality sensors, and built a machine-learning framework that corrects, calibrates, and extrapolates this data by properly combining ubiquitous data from inexpensive sensors with globally measured parameters that can affect its state (e.g., weather information), as well as grounding it with data from high-quality sensors (e.g., EPA certified) depending on proximity. With the rise of Citizen Science in recent years, sensors of varying quality will collect data of all sorts everywhere. Rather than drawing potentially erroneous conclusions from misleading data, LearnAir points to a future where data of varying quality will be combined dynamically and properly conditioned, allowing individuals to contribute to a productive data commons that incorporates all data most appropriately.
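The crawl-and-republish pattern can be sketched in a few dozen lines. Everything here is a stand-in: an in-memory dictionary replaces HTTP, the HAL-style `_links`/`href` layout is assumed rather than taken from CHAIN-API’s actual schema, the readings are made up and assumed to be time-aligned and collocated, and ordinary least-squares line fitting substitutes for LearnAir’s machine-learning model (which also uses covariates such as weather).

```python
import json
from collections import deque

# Hypothetical linked resources standing in for HTTP GET responses.
DOCS = {
    "/site": {"title": "site",
              "_links": {"items": [{"href": "/sensor/cheap"}, {"href": "/sensor/ref"}]}},
    "/sensor/cheap": {"kind": "pm25", "grade": "low-cost",
                      "readings": [12.0, 20.0, 28.0, 36.0],
                      "_links": {"site": {"href": "/site"}}},
    "/sensor/ref": {"kind": "pm25", "grade": "reference",
                    "readings": [10.0, 15.0, 20.0, 25.0], "_links": {}},
}

def get(url):
    # Round-trip through JSON text to mimic parsing an HTTP response body
    return json.loads(json.dumps(DOCS[url]))

def crawl(start):
    """Breadth-first traversal of linked resources, visiting each URL once."""
    seen, queue, found = {start}, deque([start]), {}
    while queue:
        url = queue.popleft()
        doc = get(url)
        found[url] = doc
        for link in doc.get("_links", {}).values():
            for item in (link if isinstance(link, list) else [link]):
                if item["href"] not in seen:
                    seen.add(item["href"])
                    queue.append(item["href"])
    return found

def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept mapping xs onto ys."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def virtual_sensor(start):
    """Crawl, pair a low-cost sensor with a reference one, and return a new
    'virtual' resource holding calibrated readings plus provenance links."""
    docs = crawl(start)
    cheap_url, cheap = next((u, d) for u, d in docs.items() if d.get("grade") == "low-cost")
    ref_url, ref = next((u, d) for u, d in docs.items() if d.get("grade") == "reference")
    slope, intercept = fit_line(cheap["readings"], ref["readings"])
    return {
        "kind": cheap["kind"],
        "grade": "virtual",
        "readings": [slope * x + intercept for x in cheap["readings"]],
        "_links": {"derived_from": [{"href": cheap_url}, {"href": ref_url}]},
    }
```

The derived resource links back to its sources, so another crawler can discover it and trace its provenance. A real deployment would fit on held-out history rather than on the same readings it corrects.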

Browsers for this ubiquitous, multimodal sensor data will play a major role, for example, in the setup/debugging(102) of these systems and in building/facility management. An agile multimodal sensor browser capability, however, promises to usher in revolutionary new applications that we can barely speculate about at this point. A proper interface to these artificial sensoria promises to produce something of a digital omniscience, where one’s awareness can be richly augmented across temporal and spatial domains: a rich realization of McLuhan’s characterization of electronic media as extending the human nervous system.(103) We call this process “Cross Reality”(104): a pervasive, everywhere-augmented reality environment, where sensor data fluidly manifests in virtual worlds that can be intuitively browsed. Although some researchers have been adding their sensor data into structures viewable through Google Earth,(105) we have been using a 3D game engine to browse the data coming from our own building. Because game engines are built for efficient graphics, animation, and interaction, they are well suited to developing such architecturally referenced sensor manifestations (our prior work used the communal shared-reality environment Second Life,(106) which proved much too restrictive for our aims).

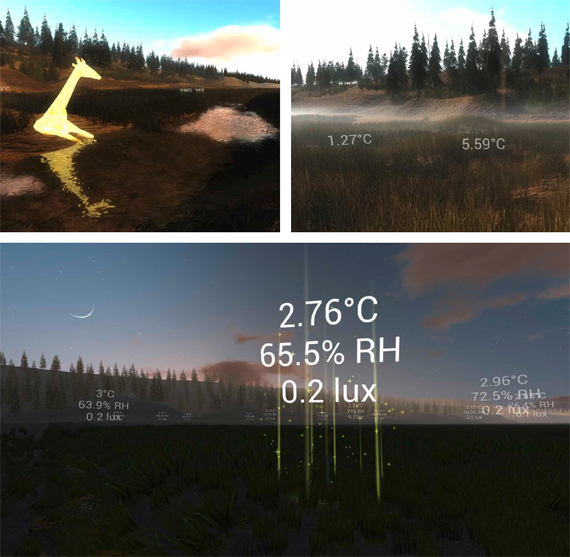

Our present system, called “DoppelLab”(107) and led by my student Gershon Dublon, makes hundreds of diverse sensor and information feeds related to our building visible via animations appearing in a common virtual world. Browsing in DoppelLab, we can see the building’s HVAC system at work, see people moving through the building via RFID badges and motion sensors, see their public Tweets emanating from their offices, and even see real-time ping-pong games played out on a virtual table.(108)

We have also incorporated auditory browsing in DoppelLab by creating spatialized audio feeds from microphones placed all around our building, referenced to the position of the user in the virtual building. Random audio grains are reversed or deleted right at the sensor node, making conversations impossible to interpret for privacy’s sake (it always sounds like people are speaking a foreign language), but vocal affect, laughter, crowd noise, and audio transients (e.g., elevators ringing, people opening doors, etc.) come through fairly intact.(109) Running DoppelLab with spatialized audio of this sort gives the user the feeling of being a ghost, loosely coupled to reality in a tantalizing way, getting the gist and feeling associated with being there but removed from the details. This concept fascinated me as I grew up in the late-1960s era of McLuhan, and as an elementary-school child, I wired arrays of microphones hundreds of meters away to mixers and amplifiers in my room. At the time, despite the heavy influence of then-in-vogue spy movies, I had no desire to snoop on people. I was instead just fascinated with the idea of generalizing presence and bringing remote outdoor sound into dynamic ambient stereo mixes that I would experience for hours on end. DoppelLab and the Doppelmarsh environment described below have elevated this concept to a level I could never have conceived of in my analog youth.
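The privacy filter described above can be approximated by a short grain-scrambling routine. This is a sketch under stated assumptions: fixed-length grains (400 samples, i.e., 50 ms at an assumed 8 kHz rate), one independent random choice per grain, and “deleted” grains replaced by silence so that the stream keeps its timing. The grain sizes and probabilities actually used in DoppelLab are not specified in the text, so the defaults here are hypothetical.

```python
import random

def scramble(samples, grain=400, p_reverse=0.5, p_delete=0.2, seed=None):
    """Reverse or silence random fixed-length grains of an audio buffer.

    Short grains keep loudness, timbre, and transients largely intact while
    destroying the word order needed to follow a conversation."""
    rng = random.Random(seed)
    out = []
    for start in range(0, len(samples), grain):
        chunk = list(samples[start:start + grain])
        r = rng.random()  # one draw per grain decides its fate
        if r < p_delete:
            chunk = [0.0] * len(chunk)  # deleted: silence of equal length
        elif r < p_delete + p_reverse:
            chunk.reverse()  # reversed: time-flipped grain
        out.extend(chunk)
    return out
```

Doing this on the sensor node itself, as the text describes, means intelligible speech never leaves the microphone, which is a stronger guarantee than scrambling on a server.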

We had planned to evolve DoppelLab into many tangential endeavors, including two-way interaction that allows virtual visitors to also manifest in our real space in different ways via distributed displays and actuators. Most of our current efforts in this line of research, however, have moved outdoors, to a 242.8-hectare (600-acre) retired cranberry bog called “Tidmarsh,” located an hour south of MIT in Plymouth, Massachusetts.(110) This property is being restored to its natural state as a wetland, and to document this process, we have installed hundreds of wireless sensors measuring a variety of parameters, such as temperature, humidity, soil moisture and conductivity, nearby motion, atmospheric and water quality, wind, sound, light quality, and so on. Using our low-power Zigbee-derived wireless protocol and posting data in CHAIN, these sensors, designed by my student Brian Mayton, last for two years on AA batteries (posting data every twenty seconds) or live perpetually when driven by a small solar cell. We also have thirty real-time audio feeds coming from an array of microphones distributed across Tidmarsh, with which we are exploring new applications in spatialized audio, real-time wildlife recognition with deep learning,(111) and so on.
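A quick energy budget shows what “two years on AA batteries, posting every twenty seconds” implies. The figures below are assumptions for illustration, not measured values for the Tidmarsh nodes: the pack is treated as a single 2500 mAh source (typical for alkaline AA cells, though capacity varies with load and temperature), with a 20 µA sleep current and a 20 mA active current while sensing and transmitting.

```python
HOURS_PER_YEAR = 365 * 24

def average_current_ua(capacity_mah, lifetime_years):
    """Average current in microamps that drains capacity_mah in lifetime_years."""
    return capacity_mah * 1000.0 / (lifetime_years * HOURS_PER_YEAR)

def active_ms_per_cycle(avg_ua, sleep_ua, active_ua, period_s):
    """Active time per reporting period that keeps the duty-cycled mean at avg_ua.

    Solves avg = sleep * (1 - d) + active * d for the duty cycle d."""
    duty = (avg_ua - sleep_ua) / (active_ua - sleep_ua)
    return duty * period_s * 1000.0

avg = average_current_ua(2500, 2)                # about 143 uA on average
ms = active_ms_per_cycle(avg, 20.0, 20000.0, 20.0)  # about 123 ms awake per post
```

Under these assumptions the average draw must stay near 143 µA, leaving roughly 120 ms of active time in each twenty-second cycle; a lower sleep or radio current stretches that budget.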

In addition to using this rich, dense data archive to analyze the progress of restoration in collaboration with environmental scientists, we are also using the real-time CHAIN-posted data stream in a game-engine visualization. Within this “Doppelmarsh” framework, you can float across the resynthesized virtual landscape (automatically updated with information gleaned from cameras, so that the virtual appearance tracks real-world seasonal and climatic changes), and see the sensor information realized as situated graphics, animations, and music. The sounds in Doppelmarsh come from both the embedded microphones (spatialized relative to the user’s virtual position) and music driven by sensor data (for example, you can hear the temperature, humidity, activity, and so on, coming from nearby devices). We have built a framework for composers to author musical compositions atop the Tidmarsh data(112) and have so far hosted four musical mappings, with more to come shortly.
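As an illustration of the kind of mapping a composer might author (this is not one of the four Tidmarsh mappings), the sketch below turns a temperature reading into a pitch. It linearly maps an assumed −10 °C to 35 °C range onto MIDI notes 48 to 84, snaps the result to a C-major pentatonic scale so that simultaneous notes from nearby sensors tend to sound consonant, and converts to frequency using the standard A4 = 440 Hz (MIDI 69) convention. The ranges and scale are arbitrary choices.

```python
PENTATONIC = (0, 2, 4, 7, 9)  # C-major pentatonic pitch classes

def map_range(value, lo, hi, out_lo, out_hi):
    """Linearly map value from [lo, hi] to [out_lo, out_hi], clamping at the ends."""
    t = (value - lo) / (hi - lo)
    t = max(0.0, min(1.0, t))
    return out_lo + t * (out_hi - out_lo)

def snap_to_scale(note, scale=PENTATONIC):
    """Move a MIDI note to the nearest pitch in the scale (ties go down)."""
    for offset in range(12):
        for candidate in (note - offset, note + offset):
            if candidate % 12 in scale:
                return candidate
    return note

def temperature_to_note(temp_c, t_min=-10.0, t_max=35.0, note_lo=48, note_hi=84):
    """Hypothetical mapping: colder readings play lower, warmer ones higher."""
    return snap_to_scale(round(map_range(temp_c, t_min, t_max, note_lo, note_hi)))

def midi_to_hz(note):
    """Equal-tempered frequency with MIDI 69 = A4 = 440 Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)
```

For example, 12.5 °C lands halfway up the range at MIDI 66, which the pentatonic snap moves to 67 (G4, about 392 Hz).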

Through our virtual Tidmarsh, we have created a new way to remotely experience the real landscape while keeping something of the real aesthetic. In Doppelmarsh, you can float across boggy land that is now very difficult to traverse (free of the otherwise guaranteed insect bites), hearing through nearby microphones and seeing metaphorical animations from real-time sensors (including flora and fauna that “feed” upon particular sensor data, hence their appearance and behavior reflect the history of temperature, moisture, and so on, in that area). We are also developing a wearable “sensory prosthetic” to augment the experience of visitors to the Tidmarsh property. Based on a head-mounted bone-conduction headphone system with wireless position and orientation tracking that we have developed, called HearThere,(113) we will estimate where or on what users are focusing their attention via an array of wearable sensors, and manifest sound from related or proximate microphones and sensors appropriately. Users can look across a stream, for example, and hear audio from microphones and sensors there, then look down into the water and hear sound from hydrophones and sensors there; if they focus on a log equipped with sensitive accelerometers, they can hear the insects boring inside. In the future, all user interfaces will leverage attention in this fashion: relevant information will manifest itself to us in the most appropriate way and leverage what we are paying attention to in order to enhance perception rather than distract.
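A crude version of attention-driven mixing weights each nearby microphone by how close it lies to the listener’s gaze direction. The sketch assumes a 2D map in meters, a head yaw in degrees from the headset’s orientation tracking (0° along +x, counterclockwise positive), a Gaussian angular falloff with a hypothetical 30° width, and 1/distance attenuation clamped at 1 m. It ignores head pitch, so it would not capture “looking down into the water,” and it is not HearThere’s actual algorithm.

```python
import math

def attention_gains(head_pos, head_yaw_deg, sources, width_deg=30.0):
    """Mix gain per source: a Gaussian in the angle between gaze and source
    direction, divided by distance, then normalized to sum to 1."""
    raw = {}
    for name, (sx, sy) in sources.items():
        dx, dy = sx - head_pos[0], sy - head_pos[1]
        dist = math.hypot(dx, dy)
        bearing = math.degrees(math.atan2(dy, dx))
        # Wrap the gaze-to-source angle into [-180, 180)
        diff = (bearing - head_yaw_deg + 180.0) % 360.0 - 180.0
        focus = math.exp(-0.5 * (diff / width_deg) ** 2)
        raw[name] = focus / max(dist, 1.0)
    total = sum(raw.values())
    return {name: g / total for name, g in raw.items()} if total > 0 else raw
```

Turning the head smoothly crossfades between sources instead of switching abruptly, which fits the goal of enhancing perception without distracting the listener.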

I see artists as playing a crucial role in shaping how the augmented world appears to us. As large data sources emanating from everyday life are now accessible everywhere, and tools to extract structure from them are increasingly available, artists, composers, and designers will sculpt the environments and realizations that present this information to us in ways that are humanly relevant. Indeed, a common mantra in my research group now states that “Big Data is the Canvas for Future Artists,” and we have illustrated this via the creative musical frameworks we have made available for both Doppelmarsh(114) and real-time physics data coming from the ATLAS detector at CERN’s Large Hadron Collider.(115) I can see a near future where a composer can write a piece for a smart city, where the traffic, weather, air quality, pedestrian flow, and so on are rendered in music that changes with conditions and virtual user location. These are creative constructs that never end, always change, and can be perceived to relate to real things happening in real places, giving them immediacy and importance.

Conclusions

Moore’s Law has democratized sensor technology enormously over the past decade; this paper has touched on only a few of the very many modes that exist for sensing humans and their activities.(116) Ever more sensors are now integrated into common products (witness mobile phones, which have become the Swiss Army Knives of the sensor/RF world), and the DIY movement has also enabled custom sensor modules to be easily purchased or fabricated through many online and crowd-sourced outlets.(117) As a result, this decade has seen a huge rush of diverse data flowing into the network. This will surely continue in the coming years, leaving us the grand challenge of synthesizing this information into many forms, for example, grand cloud-based context engines, virtual sensors, and augmentation of human perception. These advances not only promise to usher in true UbiComp, they also hint at a radical redefinition of how we experience reality that will make today’s common attention-splitting between mobile phones and the real world look quaint and archaic.

We are entering a world where ubiquitous sensor information from our proximity will propagate up into various levels of what is now termed the “cloud,” then project back down into our physical and logical vicinity as context to guide processes and applications manifesting around us. We will not be pulling our phones out of our pockets and diverting attention into a touch UI, but rather will encounter information brokered between wearables and proximate ambient displays. The computer will become more of a partner than a discrete application that we run. My colleague and founding Media Lab director Nicholas Negroponte described this relationship as a “digital butler” in his writings from decades back;(118) I now see it as more of an extension of ourselves than the embodiment of an “other.” Our relationship with computation will be much more intimate as we enter the age of wearables. Right now, all information is available on many devices around me at the touch of a finger or the enunciation of a phrase. Soon it will stream directly into our eyes and ears, as foreseen by then-Media Lab students like Steve Mann(119) and Thad Starner,(120) who were living in an early version of this world during the mid-1990s. This information will be driven by context and attention, not direct query, and much of it will be pre-cognitive, happening before we formulate direct questions. Indeed, the boundaries of the individual will be very blurry in this future. Humanity has pushed these edges since the dawn of society. Beginning with information shared through oral history, the boundary of our minds expanded with writing and later the printing press, eliminating the need to retain information verbatim in memory and letting us instead keep pointers into larger archives.
In the future, as we live and learn in a world deeply networked by wearables and eventually implantables, how our essence and individuality are brokered between organic neurons and whatever the information ecosystem becomes is a fascinating frontier that promises to redefine humanity.

Acknowledgments

The MIT Media Lab work described in this article has been conducted by my graduate students, postdocs, and visiting affiliates over the past decade, many of whom were explicitly named in this article and others represented in the cited papers and theses—readers are encouraged to consult the included references for more information on these projects along with a more thorough discussion of related work. I thank the sponsors of the MIT Media Lab for their support of these efforts.

Notes

1. V. Bush, “As we may think,” Atlantic Monthly 176 (July 1945): 101–108.

2. M. Weiser, “The computer for the 21st century,” Scientific American 265(3) (September 1991): 66–75.

3. J. Paradiso, “Modular synthesizer,” in Timeshift, G. Stocker, and C. Schopf (eds.), (Proceedings of Ars Electronica 2004), (Ostfildern-Ruit: Hatje Cantz Verlag, 2004): 364–370.

4. J. A. Paradiso, “Electronic music interfaces: New ways to play,” IEEE Spectrum 34(12) (December 1997): 18–30.

5. J. A. Paradiso, “Sensor architectures for interactive environments,” in Augmented Materials & Smart Objects: Building Ambient Intelligence Through Microsystems Technologies, K. Delaney (ed.), (Springer, 2008): 345–362.

6. D. MacKenzie, Inventing Accuracy: A Historical Sociology of Nuclear Missile Guidance (Cambridge, MA: MIT Press, 1990).

7. P. L. Walter, “The history of the accelerometer,” Sound and Vibration (January 2007, revised edition): 84–92.

8. H. Weinberg, “Using the ADXL202 in pedometer and personal navigation applications,” Analog Devices Application Note AN-602 (Analog Devices Inc., 2002).

9. V. Renaudin, O. Yalak, and P. Tomé, “Hybridization of MEMS and assisted GPS for pedestrian navigation,” Inside GNSS (January/February 2007): 34–42.

10. M. Malinowski, M. Moskwa, M. Feldmeier, M. Laibowitz, and J. A. Paradiso, “CargoNet: A low-cost micropower sensor node exploiting quasi-passive wakeup for adaptive asynchronous monitoring of exceptional events,” Proceedings of the 5th ACM Conference on Embedded Networked Sensor Systems (SenSys’07) (Sydney, Australia, November 6–9, 2007): 145–159.

11. A. Y. Benbasat, and J. A. Paradiso, “A Framework for the automated generation of power-efficient classifiers for embedded sensor nodes,” Proceedings of the 5th ACM Conference on Embedded Networked Sensor Systems (SenSys’07) (Sydney, Australia, November 6–9, 2007): 219–232.

12. J. Bernstein, “An overview of MEMS inertial sensing technology,” Sensors Magazine (February 1, 2003).

13. Benbasat, and Paradiso, “Framework for the automated generation,” op. cit.: 219–232.

14. N. Ahmad, A. R. Ghazilla, N. M. Khain, and V. Kasi, “Reviews on various inertial measurement (IMU) sensor applications,” International Journal of Signal Processing Systems 1(2) (December 2013): 256–262.

15. C. Verplaetse, “Inertial proprioceptive devices: self-motion-sensing toys and tools,” IBM Systems Journal 35(3–4) (1996): 639–650.

16. J. A. Paradiso, “Some novel applications for wireless inertial sensors,” Proceedings of NSTI Nanotech 2006 3 (Boston, MA, May 7–11, 2006): 431–434.

17. R. Aylward, and J. A. Paradiso, “A compact, high-speed, wearable sensor network for biomotion capture and interactive media,” Proceedings of the Sixth International IEEE/ACM Conference on Information Processing in Sensor Networks (IPSN 07) (Cambridge, MA, April 25–27, 2007): 380–389.

18. M. Lapinski, M. Feldmeier, and J. A. Paradiso, “Wearable wireless sensing for sports and ubiquitous interactivity,” IEEE Sensors 2011 (Dublin, Ireland, October 28–31, 2011).

19. M. T. Lapinski, “A platform for high-speed biomechanical data analysis using wearable wireless sensors,” PhD Thesis, MIT Media Lab (August 2013).

20. K. Lightman, “Silicon gets sporty: Next-gen sensors make golf clubs, tennis rackets, and baseball bats smarter than ever,” IEEE Spectrum (March 2016): 44–49.

21. For an early example, see the data sheet for the Analog Devices AD22365.

22. M. Feldmeier, and J. A. Paradiso, “Personalized HVAC control system,” Proceedings of IoT 2010 (Tokyo, Japan, November 29–December 1, 2010).

23. D. De Rossi and A. Lymberis (eds.), IEEE Transactions on Information Technology in Biomedicine, Special Section on New Generation of Smart Wearable Health Systems and Applications 9(3) (September 2005).

24. L. Buechley, “Material computing: Integrating technology into the material world,” in The SAGE Handbook of Digital Technology Research, S. Price, C. Jewitt, and B. Brown (eds.), (New York: Sage Publications Ltd., 2013).

25. I. Poupyrev, N.-W. Gong, S. Fukuhara, M. E. Karagozler, C. Schwesig, and K. Robinson, “Project Jacquard: Interactive digital textiles at scale,” Proceedings of CHI (2016): 4216–4227.

26. V. Vinge, Rainbow’s End (New York: Tor Books, 2006).

27. C. Hou, X. Jia, L. Wei, S.-C. Tan, X. Zhao, J. D. Joannopoulos, and Y. Fink, “Crystalline silicon core fibres from aluminium core preforms,” Nature Communications 6 (February 2015).

28. D. L. Chandler, “New institute will accelerate innovations in fibers and fabrics,” MIT News Office (April 1, 2016).

29. K. Vega, Beauty Technology: Designing Seamless Interfaces for Wearable Computing (Springer, 2016).

30. X. Liu, K. Vega, P. Maes, and J. A. Paradiso, “Wearability factors for skin interfaces,” Proceedings of the 7th Augmented Human International Conference 2016 (AH ’16) (New York: ACM, 2016).

31. K. Vega, N. Jing, A. Yetisen, V. Kao, N. Barry, and J. A. Paradiso, “The dermal abyss: Interfacing with the skin by tattooing biosensors,” submitted to TEI 2017.

32. B. D. Mayton, N. Zhao, M. G. Aldrich, N. Gillian, and J. A. Paradiso, “WristQue: A personal sensor wristband,” Proceedings of the IEEE International Conference on Wearable and Implantable Body Sensor Networks (BSN ’13) (2013): 1–6.

33. R. Bainbridge, and J. A. Paradiso, “Wireless hand gesture capture through wearable passive tag sensing,” International Conference on Body Sensor Networks (BSN ’11) (Dallas, TX, May 23–25, 2011): 200–204.

34. D. Way, and J. Paradiso, “A usability user study concerning free-hand microgesture and wrist-worn sensors,” Proceedings of the 2014 11th International Conference on Wearable and Implantable Body Sensor Networks (BSN ’14) (IEEE Computer Society, June 2014): 138–142.

35. A. Dementyev, and J. Paradiso, “WristFlex: Low-power gesture input with wrist-worn pressure sensors,” Proceedings of UIST 2014, the 27th annual ACM symposium on User Interface Software and Technology (Honolulu, Hawaii, October 2014): 161–166.

36. H.-L. Kao, A. Dementyev, J. A. Paradiso, and C. Schmandt, “NailO: Fingernails as an input surface,” Proceedings of the International Conference on Human Factors in Computing Systems (CHI’15) (Seoul, Korea, April 2015).

37. M. Laibowitz, and J. A. Paradiso, “Parasitic mobility for pervasive sensor networks,” in Pervasive Computing, H. W. Gellersen, R. Want, and A. Schmidt (eds.), Proceedings of the Third International Conference, Pervasive 2005 (Munich, Germany, May 2005) (Berlin: Springer-Verlag): 255–278.

38. A. Dementyev, H.-L. Kao, I. Choi, D. Ajilo, M. Xu, J. Paradiso, C. Schmandt, and S. Follmer, “Rovables: Miniature on-body robots as mobile wearables,” Proceedings of ACM UIST (2016).

39. G. Orwell, 1984 (London: Secker and Warburg, 1949).

40. E. R. Fossum, “CMOS image sensors: Electronic camera-on-a-chip,” IEEE Transactions On Electron Devices 44(10) (October 1997): 1689–1698.

41. J. A. Paradiso, “New technologies for monitoring the precision alignment of large detector systems,” Nuclear Instruments and Methods in Physics Research A386 (1997): 409–420.

42. S. B. Gokturk, H. Yalcin, and C. Bamji, “A time-of-flight depth sensor – system description, issues and solutions,” CVPRW ’04, Proceedings of the 2004 Conference on Computer Vision and Pattern Recognition Workshop 3: 35–45.

43. A. Velten, T. Willwacher, O. Gupta, A. Veeraraghavan, M. G. Bawendi, and R. Raskar, “Recovering three-dimensional shape around a corner using ultra-fast time-of-flight imaging,” Nature Communications 3 (2012).

44. J. Lifton, M. Laibowitz, D. Harry, N.-W. Gong, M. Mittal, and J. A. Paradiso, “Metaphor and manifestation – cross reality with ubiquitous sensor/actuator networks,” IEEE Pervasive Computing Magazine 8(3) (July–September 2009): 24–33.

45. M. Laibowitz, N.-W. Gong, and J. A. Paradiso, “Multimedia content creation using societal-scale ubiquitous camera networks and human-centric wearable sensing,” Proceedings of ACM Multimedia 2010 (Florence, Italy, 25–29 October 2010): 571–580.

46. J. A. Paradiso, J. Gips, M. Laibowitz, S. Sadi, D. Merrill, R. Aylward, P. Maes, and A. Pentland, “Identifying and facilitating social interaction with a wearable wireless sensor network,” Springer Journal of Personal & Ubiquitous Computing 14(2) (February 2010): 137–152.

47. Laibowitz, Gong, and Paradiso, “Multimedia content creation,” op. cit.: 571–580.

48. A. Reben, and J. A. Paradiso, “A mobile interactive robot for gathering structured social video,” Proceedings of ACM Multimedia 2011 (October 2011): 917–920.

49. N. Gillian, S. Pfenninger, S. Russell, and J. A. Paradiso, “Gestures Everywhere: A multimodal sensor fusion and analysis framework for pervasive displays,” Proceedings of The International Symposium on Pervasive Displays (PerDis ’14), Sven Gehring (ed.), (New York, NY: ACM, June 2014): 98–103.

50. M. Bletsas, “The MIT Media Lab’s glass infrastructure: An interactive information system,” IEEE Pervasive Computing (February 2012): 46–49.

51. S. Greenberg, N. Marquardt, T. Ballendat, R. Diaz-Marino, and M. Wang, “Proxemic interactions: the new UbiComp?,” ACM Interactions 18(1) (January 2011): 42–50.

52. N.-W. Gong, M. Laibowitz, and J. A. Paradiso, “Dynamic privacy management in pervasive sensor networks,” Proceedings of Ambient Intelligence (AmI) 2010 (Malaga, Spain, 25–29 October 2010): 96–106.

53. G. Dublon, “Beyond the lens: Communicating context through sensing, video, and visualization,” MS Thesis, MIT Media Lab (September 2011).

54. B. Mayton, G. Dublon, S. Palacios, and J. A. Paradiso, “TRUSS: Tracking risk with ubiquitous smart sensing,” IEEE SENSORS 2012 (Taipei, Taiwan, October 2012).

55. A. Sinha, and A. Chandrakasan, “Dynamic power management in wireless sensor networks,” IEEE Design & Test of Computers (March–April 2001): 62–74.

56. J. A. Paradiso, and T. Starner, “Energy scavenging for mobile and wireless electronics,” IEEE Pervasive Computing 4(1) (February 2005): 18–27.

57. E. Boyle, M. Kiziroglou, P. Mitcheson, and E. Yeatman, “Energy provision and storage for pervasive computing,” IEEE Pervasive Computing (October–December 2016).

58. P. D. Mitcheson, T. C. Green, E. M. Yeatman, and A. S. Holmes, “Architectures for vibration-driven micropower generators,” Journal of Microelectromechanical Systems 13(3) (June 2004): 429–440.

59. Y. K. Ramadass, and A. P. Chandrakasan, “A batteryless thermoelectric energy-harvesting interface circuit with 35mV startup voltage,” Proceedings of ISSCC (2010): 486–488.

60. H. Nishimoto, Y. Kawahara, and T. Asami, “Prototype implementation of wireless sensor network using TV broadcast RF energy harvesting,” UbiComp’10 (Copenhagen, Denmark, September 26–29, 2010): 373–374.

61. J. R. Smith, A. Sample, P. Powledge, A. Mamishev, and S. Roy, “A wirelessly powered platform for sensing and computation,” UbiComp 2006: Eighth International Conference on Ubiquitous Computing (Orange County, CA, 17–21 September, 2006): 495–506.

62. Bainbridge, and Paradiso, “Wireless hand gesture capture,” op. cit.: 200–204.

63. R. L. Park, Voodoo Science: The Road from Foolishness to Fraud (Oxford: OUP, 2001).

64. B. Warneke, M. Last, B. Liebowitz, and K. S. J. Pister, “Smart Dust: Communicating with a cubic-millimeter computer,” IEEE Computer 34(1) (January 2001): 44–51.

65. Y. Lee, “Ultra-low power circuit design for cubic-millimeter wireless sensor platform,” PhD Thesis, University of Michigan (EE Dept) (2012).

66. D. Overbye, “Reaching for the stars, across 4.37 light-years,” The New York Times (April 12, 2016).

67. M. G. Richard, “Japan: Producing electricity from train station ticket gates,” see http://www.treehugger.com/files/2006/08/japan_ticket_gates.php.

68. M. Mozer, “The neural network house: An environment that adapts to its inhabitants,” Proceedings of the American Association for Artificial Intelligence Spring Symposium on Intelligent Environments (Menlo Park, CA, 1998): 110–114.

69. J. Paradiso, P. Dutta, H. Gellersen, and E. Schooler (eds.), Special Issue on Smart Energy Systems, IEEE Pervasive Computing Magazine 10(1) (January–March 2011).

70. M. Feldmeier, and J. A. Paradiso, “Personalized HVAC control system,” Proceedings of IoT 2010 (Tokyo, Japan, November 29–December 1, 2010).

71. Ibid.

72. G. Chabanis, “Self-powered wireless sensors in buildings: An ideal solution for improving energy efficiency while ensuring comfort to occupant,” Proceedings of Energy Harvesting & Storage Europe 2011, IDTechEx (Munich, Germany, June 21–22, 2011).

73. M. Aldrich, N. N. Zhao, and J. A. Paradiso, “Energy efficient control of polychromatic solid-state lighting using a sensor network,” Proceedings of SPIE (OP10) 7784 (San Diego, CA, August 1–5, 2010).

74. M. Aldrich, “Experiential lighting – development and validation of perception-based lighting controls,” PhD Thesis, MIT Media Lab (August 2014).

75. N. Zhao, M. Aldrich, C. F. Reinhart, and J. A. Paradiso, “A multidimensional continuous contextual lighting control system using Google Glass,” 2nd ACM International Conference on Embedded Systems For Energy-Efficient Built Environments (BuildSys 2015) (November 2015).

76. A. Axaria, “Intelligent Ambiance: Digitally mediated workspace atmosphere, augmenting experiences and supporting wellbeing,” MS Thesis, MIT Media Lab (August 2016).

77. P. Levis et al., “TinyOS: An operating system for sensor networks,” in Ambient Intelligence (Berlin: Springer, 2005): 115–148.

78. E. Gakstatter, “Finally, a list of public RTK base stations in the U.S.,” GPS World (January 7, 2014).

79. See https://www.microsoft.com/en-us/research/event/microsoft-indoor-localization-competition-ipsn-2016.

80. E. Elnahrawy, X. Li, and R. Martin, “The limits of localization using signal strength: A comparative study,” Proceedings of the 1st IEEE International Conference on Sensor and Ad Hoc Communications and Networks (Santa Clara, CA, October 2004).

81. M. Maróti et al., “Radio interferometric geolocation,” Proceedings of ACM SenSys (2005): 1–12.

82. F. Belloni, V. Ranki, A. Kainulainen, and A. Richter, “Angle-based indoor positioning system for open indoor environments,” Proceedings of WPNC (2009).

83. Greenberg, Marquardt, Ballendat, et al., “Proxemic interactions,” op. cit.: 42–50.

84. Mayton, Zhao, Aldrich, et al., “WristQue: A personal sensor wristband,” op. cit.: 1–6.

85. R. Bolt, “‘Put-that-there’: Voice and gesture at the graphics interface,” ACM SIGGRAPH Computer Graphics 14(3) (July 1980): 262–270.

86. J. Froehlich et al., “Disaggregated end-use energy sensing for the smart grid,” IEEE Pervasive Computing 10(1) (January–March 2011): 28–39.

87. G. Cohn, D. Morris, S. Patel, and D. S. Tan, “Your noise is my command: Sensing gestures using the body as an antenna,” Proceedings of CHI (2011): 791–800.

88. S. Gupta, K.-Y. Chen, M. S. Reynolds, and S. N. Patel, “LightWave: Using compact fluorescent lights as sensors,” Proceedings of UbiComp (2011): 65–70.

89. J. Chung et al., “Indoor location sensing using geo-magnetism,” Proceedings of MobiSys’11 (Bethesda, MD, June 28–July 1, 2011): 141–154.

90. N. Zhao, G. Dublon, N. Gillian, A. Dementyev, and J. Paradiso, “EMI spy: Harnessing electromagnetic interference for low-cost, rapid prototyping of proxemic interaction,” International Conference on Wearable and Implantable Body Sensor Networks (BSN ’15) (June 2015).

91. N.-W. Gong, S. Hodges, and J. A. Paradiso, “Leveraging conductive inkjet technology to build a scalable and versatile surface for ubiquitous sensing,” Proceedings of UbiComp (2011): 45–54.

92. J. A. Paradiso, J. Lifton, and M. Broxton, “Sensate Media – multimodal electronic skins as dense sensor networks,” BT Technology Journal 22(4) (October 2004): 32–44.

93. A. Dementyev, H.-L. Kao, and J. A. Paradiso, “SensorTape: Modular and programmable 3D-aware dense sensor network on a tape,” Proceedings of ACM UIST (2015).

94. N.-W. Gong, C.-Y. Wang, and J. A. Paradiso, “Low-cost sensor tape for environmental sensing based on roll-to-roll manufacturing process,” IEEE SENSORS 2012 (Taipei, Taiwan, October 2012).

95. J. Qi, A. Huang, and J. Paradiso, “Crafting technology with circuit stickers,” Proceedings of ACM IDC ’15 (14th International Conference on Interaction Design and Children) (2015): 438–441.

96. See https://www.xively.com/xively-iot-platform/connected-product-management.

97. See https://allseenalliance.org/framework.

98. See https://www.iotivity.org.

99. S. Russell, and J. A. Paradiso, “Hypermedia APIs for sensor data: A pragmatic approach to the Web of Things,” Proceedings of ACM Mobiquitous 2014 (London, UK, December 2014): 30–39.

100. See https://github.com/ResEnv/chain-api.

101. D. Ramsay, “LearnAir: Toward intelligent, personal air quality monitoring,” MS Thesis, MIT Media Lab (August 2016).

102. M. Mittal, and J. A. Paradiso, “Ubicorder: A mobile device for situated interactions with sensor networks,” IEEE Sensors Journal, Special Issue on Cognitive Sensor Networks 11(3) (2011): 818–828.

103. M. McLuhan, Understanding Media: The Extensions of Man (New York: McGraw-Hill, 1964).

104. Lifton, Laibowitz, Harry, et al., “Metaphor and manifestation,” op. cit.: 24–33.

105. N. Marquardt, T. Gross, S. Carpendale, and S. Greenberg, “Revealing the invisible: visualizing the location and event flow of distributed physical devices,” Proceedings of the Fourth International Conference on Tangible, Embedded, and Embodied Interaction (TEI) (2010): 41–48.

106. J. Lifton, and J. A. Paradiso, “Dual reality: Merging the real and virtual,” Proceedings of the First International ICST Conference on Facets of Virtual Environments (FaVE) (Berlin, Germany, July 27–29, 2009), (Springer LNICST 33): 12–28.

107. G. Dublon, L. S. Pardue, B. Mayton, N. Swartz, N. Joliat, P. Hurst, and J. A. Paradiso, “DoppelLab: Tools for exploring and harnessing multimodal sensor network data,” Proceedings of IEEE Sensors 2011 (Limerick, Ireland, October 2011).

108. G. Dublon, and J. A. Paradiso, “How a sensor-filled world will change human consciousness,” Scientific American (July 2014): 36–41.

109. N. Joliat, B. Mayton, and J. A. Paradiso, “Spatialized anonymous audio for browsing sensor networks via virtual worlds,” The 19th International Conference on Auditory Display (ICAD) (Lodz, Poland, July 2013): 67–75.

110. See http://tidmarsh.media.mit.edu.

111. C. Duhart, “Ambient sound recognition,” in Toward Organic Ambient Intelligences – EMMA (Chapter 8), PhD Thesis, University of Le Havre, France (June 2016).

112. E. Lynch, and J. A. Paradiso, “SensorChimes: Musical mapping for sensor networks,” Proceedings of the NIME 2016 Conference (Brisbane, Australia, July 11–15, 2016).

113. S. Russell, G. Dublon, and J. A. Paradiso, “HearThere: Networked sensory prosthetics through auditory augmented reality,” Proceedings of the ACM Augmented Human Conference (Geneva, Switzerland, February 2016).

114. Lynch, and Paradiso, “SensorChimes,” op. cit.

115. J. Cherston, E. Hill, S. Goldfarb, and J. Paradiso, “Musician and mega-machine: Compositions driven by real-time particle collision data from the ATLAS detector,” Proceedings of the NIME 2016 Conference (Brisbane, Australia, July 11–15, 2016).

116. T. Teixeira, G. Dublon, and A. Savvides, “A survey of human-sensing: Methods for detecting presence, count, location, track, and identity,” ACM Computing Surveys 5(1) (2010): 59–69.

117. J. A. Paradiso, J. Heidemann, and T. G. Zimmerman, “Hacking is pervasive,” IEEE Pervasive Computing 7(3) (July–September 2008): 13–15.

118. N. Negroponte, Being Digital (New York: Knopf, 1995).

119. S. Mann, “Wearable computing: A first step toward personal imaging,” IEEE Computer (February 1997): 25–32.

120. T. Starner, “The challenges of wearable computing, Parts 1 & 2,” IEEE Micro 21(4) (July 2001): 44–67.
